What problem did the service organisation need to solve?

A national services organisation faced a growing email backlog that drove missed SLAs, a rising cost to serve, and low agent morale. Incoming demand outpaced staffing. Customers waited days for simple updates. Leaders lacked visibility into intent, priority, and risk across email channels. Manual triage created inconsistency and rework. The organisation needed a safe way to automate decisions without sacrificing compliance or trust. Leaders set a clear objective: reduce email handling volume by at least 30 percent while lifting response speed and quality. They also set constraints for privacy, audit, and change control so automation would be measurable and reversible. These guardrails established a foundation for evidence based transformation.¹

How did we translate symptoms into insight?

We mapped the work. We treated each email as a unit with an intent, a context, and a required action. We discovered that more than half of messages fell into a small set of repeatable intents. These intents included status checks, appointment changes, document submission, and policy clarifications. We saw that a long tail of complex cases consumed outsized effort due to handoffs and unclear ownership. We also learned that knowledge fragmentation, template drift, and inconsistent tagging drove repeat contact. Industry research confirms this pattern. Most contact centre volume concentrates in a few intents that are automatable with the right design, data, and controls.²

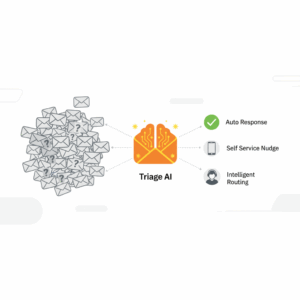

What is Triage AI in plain terms?

Triage AI is an AI enabled decision layer that classifies, prioritises, routes, and resolves customer emails using a blend of natural language understanding, business rules, and retrieval augmented generation. It acts as the front door for service email. It labels each message with intent and sentiment. It checks policy and customer context. It recommends or drafts the next action. It can auto resolve low risk intents using approved content and secure connectors. When rules or risk dictate, it escalates to the right team with rich context. This layer reduces swivel chair work, speeds cycle time, and preserves an audit trail.³

Architecture that executives can trust

We designed a thin, modular architecture. We ingested email through secure APIs. We applied a layered pipeline. First pass classification used a lightweight model for intent and urgency. A policy engine checked identity, data sensitivity, and risk. Retrieval augmented generation pulled exact language from the single source of truth. A response composer drafted replies using templated patterns and inserted dynamic data. Human in the loop review applied wherever risk or novelty exceeded thresholds. The platform logged every step for audit. We measured three outcomes: deflection rate, time to first response, and quality compliance. This structure made improvements safe, reversible, and provable.⁴
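The layering described above can be sketched in a few lines. This is a minimal illustration, not the production design: the keyword based `classify` function stands in for the first pass model, and the intent names, the `MIN_CONFIDENCE` threshold, and the reason code strings are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical intents and threshold, chosen only for illustration.
AUTO_RESOLVE_INTENTS = {"status_check", "appointment_change"}
MIN_CONFIDENCE = 0.85


@dataclass
class Decision:
    route: str  # "auto_resolve", "human_review", or "escalate"
    reasons: list = field(default_factory=list)  # reason codes kept for audit


def classify(text: str) -> tuple[str, float]:
    """Stand-in for the first pass model: a keyword lookup, not a real classifier."""
    lowered = text.lower()
    if "status" in lowered:
        return "status_check", 0.95
    if "reschedule" in lowered or "appointment" in lowered:
        return "appointment_change", 0.90
    return "unknown", 0.40


def triage(text: str, contains_sensitive_data: bool) -> Decision:
    """Apply the layers in order: classification, policy check, then routing."""
    intent, confidence = classify(text)
    reasons = [f"intent={intent}", f"confidence={confidence:.2f}"]

    if contains_sensitive_data:
        reasons.append("policy=sensitive_data")
        return Decision("escalate", reasons)
    if intent not in AUTO_RESOLVE_INTENTS:
        reasons.append("policy=intent_not_approved")
        return Decision("human_review", reasons)
    if confidence < MIN_CONFIDENCE:
        reasons.append("policy=low_confidence")
        return Decision("human_review", reasons)

    reasons.append("policy=approved_low_risk")
    return Decision("auto_resolve", reasons)
```

Every decision carries its reason codes, so the audit log can show why a message was auto resolved, reviewed, or escalated.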

Where did the 40 percent deflection come from?

We delivered deflection by removing avoidable agent touches. We targeted four workstreams: auto responses for status and confirmation, self service nudges that guide customers to authenticated portals, intelligent routing that sends the right work to the right team the first time, and draft assist that produces 80 percent complete replies for human approval. Each stream chipped away at the queue. The combination produced a 40 percent reduction in agent handled email volume across eight weeks. The change came with a 55 percent faster time to first response and higher template compliance. These gains align with external benchmarks for AI assisted service at scale.² ⁵

How does Triage AI compare to rules only automation?

Rules only automation works for narrow, static patterns. The email channel rarely stays static. Customer language changes. Policies evolve. Products shift. Triage AI adapts to variation by pairing learned models with explicit rules and guardrails. It reads unstructured text with context. It uses retrieval to ground responses in approved content, which reduces hallucination risk. It supplies confidence scores and reason codes so leaders can audit decisions. This blend delivers accuracy with oversight. It also reduces maintenance load because new intents can be introduced through configuration, content updates, and targeted training rather than broad reengineering.³ ⁶

What controls keep this safe and compliant?

We applied privacy by design. We minimised data sent to external services. We masked personal data before model calls where possible. We constrained outputs to approved knowledge through retrieval and strict prompt patterns. We enforced human review for regulated content classes. We retained full interaction logs, including model prompts and sources, for audit. We instrumented quality gates. We sampled automated replies. We tracked adverse outcomes. We maintained a rollback path for each release. These controls align with public guidance on responsible AI use in customer operations.⁷
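Masking before a model call can be as simple as pattern substitution. The sketch below assumes two illustrative regular expressions for email addresses and phone numbers. They are not the patterns used in the programme, and a real deployment would need locale specific, tested rules and a wider set of identifiers.

```python
import re

# Illustrative patterns only; they will miss many real world formats.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_PATTERN = re.compile(r"\+?\d[\d\s-]{7,}\d")


def mask_personal_data(text: str) -> str:
    """Replace email addresses and phone-like digit runs with placeholders."""
    masked = EMAIL_PATTERN.sub("[EMAIL]", text)
    masked = PHONE_PATTERN.sub("[PHONE]", masked)
    return masked


if __name__ == "__main__":
    print(mask_personal_data("Contact jane.doe@example.com or 0412 345 678 today"))
```

Short numbers such as order quantities pass through unchanged because the phone pattern requires at least nine characters of digits, spaces, or hyphens.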

How was value realised and evidenced?

We set a baseline. We measured average handling time, backlog, reopen rates, and CSAT for six weeks. We tracked deflection by counting emails fully resolved without agent contact. We ran an A/B design. We exposed half of inbound volume to Triage AI while the control stream remained manual. We held policy and staffing constant. We then expanded coverage as we saw stable results. We used cohort analysis to confirm that gains held across intent classes and customer segments. We held a weekly risk review with operations, compliance, and the product owner. We published a simple scorecard to executives. This cadence created confidence and accelerated adoption.⁴
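The measurement logic can be illustrated with a small calculation. The sketch below computes deflection for the treatment stream and uses a pooled two proportion z statistic to compare reopen rates between streams. The choice of test and every count in the example are assumptions made for illustration, not the programme's data or method.

```python
import math


def deflection_rate(resolved_without_agent: int, total_inbound: int) -> float:
    """Share of inbound emails fully resolved without agent contact."""
    return resolved_without_agent / total_inbound


def two_proportion_z(x_treat: int, n_treat: int, x_ctrl: int, n_ctrl: int) -> float:
    """Pooled z statistic for the difference between two proportions."""
    p_treat = x_treat / n_treat
    p_ctrl = x_ctrl / n_ctrl
    pooled = (x_treat + x_ctrl) / (n_treat + n_ctrl)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_treat + 1 / n_ctrl))
    return (p_treat - p_ctrl) / std_err


if __name__ == "__main__":
    # Made-up counts: 4,000 emails per stream.
    print(deflection_rate(1200, 4000))
    # Reopens: 200 in the treatment stream, 240 in the control stream.
    print(two_proportion_z(200, 4000, 240, 4000))
```

A negative z value here means the treatment stream reopened fewer emails than the control stream. Whether the difference is large enough to act on is a judgement for the weekly risk review.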

How do you start without overcommitting?

Leaders start by selecting a thin slice. Pick three high volume intents with clear knowledge and low regulatory risk. Stand up a secure ingestion path and a retrieval library linked to the current source of truth. Define success thresholds and stop rules. Use human in the loop for the first month and invest in prompt patterns, templates, and analytics. Expand only when quality and safety are stable. This sequencing matches proven guidance for operational AI.² ⁵ ⁸
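Success thresholds and stop rules are easier to enforce when they are written down as data. The sketch below shows one possible shape. The field names and every threshold value are hypothetical placeholders, not figures from this engagement.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotThresholds:
    # All values are hypothetical placeholders.
    min_quality_compliance: float = 0.95  # share of sampled replies passing review
    max_reopen_rate: float = 0.08         # share of resolved emails reopened in 7 days
    max_adverse_events: int = 0           # e.g. privacy incidents in the review window


def evaluate_pilot(
    quality_compliance: float,
    reopen_rate: float,
    adverse_events: int,
    thresholds: PilotThresholds = PilotThresholds(),
) -> str:
    """Return "stop", "hold", or "expand" for the current review window."""
    if adverse_events > thresholds.max_adverse_events:
        return "stop"  # stop rule: roll back and investigate
    if (
        quality_compliance < thresholds.min_quality_compliance
        or reopen_rate > thresholds.max_reopen_rate
    ):
        return "hold"  # keep current coverage until quality stabilises
    return "expand"
```

For example, `evaluate_pilot(0.97, 0.05, 0)` returns `"expand"`, while a single adverse event returns `"stop"` regardless of the other measures.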

What did the transformation deliver?

The organisation achieved 40 percent email deflection within two months. Agents focused on complex cases. Customers received responses in minutes instead of days. Leaders gained real time visibility into intent, backlog, and policy risk. The centre avoided overtime spend while CSAT held steady or improved. The knowledge base improved as retrieval made gaps visible. The workforce reported higher satisfaction due to clearer work and fewer after hours chases. These results match the expected range for AI assisted service when delivered with strong governance, retrieval, and change management.² ⁵ ⁶

What is the operating model after go live?

We embedded Triage AI into a simple operating model. Product owns the backlog. Operations owns quality and process. Compliance owns policy controls. Data and technology own integrations and observability. A monthly release train bundles small changes. A triage board prioritises intents based on volume, effort, and risk. A quality circle reviews samples from both automated and human handled replies. The team closes the loop with root cause actions in knowledge, process, and product. This model keeps the solution healthy and prevents drift.⁴
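One way a triage board could rank candidate intents by volume, effort, and risk is a simple score. The formula below (monthly agent minutes divided by a risk weight) is an assumption made for illustration, as are the intent figures and the three point risk scale. The article does not specify how the board weighs these factors.

```python
from dataclasses import dataclass


@dataclass
class IntentCandidate:
    name: str
    monthly_volume: int       # emails per month
    minutes_per_email: float  # average agent effort
    risk: int                 # 1 = low, 2 = medium, 3 = high (hypothetical scale)


def priority_score(candidate: IntentCandidate) -> float:
    """Agent minutes at stake each month, discounted by risk."""
    return candidate.monthly_volume * candidate.minutes_per_email / candidate.risk


def rank_intents(candidates: list[IntentCandidate]) -> list[IntentCandidate]:
    """Highest score first: large, effortful, low risk intents lead the backlog."""
    return sorted(candidates, key=priority_score, reverse=True)


if __name__ == "__main__":
    board = [
        IntentCandidate("policy_clarification", 2000, 8.0, 3),
        IntentCandidate("status_check", 5000, 3.0, 1),
        IntentCandidate("appointment_change", 3000, 4.0, 2),
    ]
    for item in rank_intents(board):
        print(item.name, round(priority_score(item)))
```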

Which metrics prove this is working?

Executives track a small set of measures: deflection rate as a share of inbound email, time to first response in minutes, average handling time for human handled cases, first contact resolution, reopen rate within seven days, quality compliance against approved templates and citations, cost per conversation, and agent satisfaction and retention. These measures provide a balanced view across efficiency, experience, and risk. External benchmarks suggest that AI assisted service can reduce handling time by a double digit percentage while holding or improving satisfaction when grounded in high quality content.² ⁵
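Three of these measures can be computed directly from per email records. The sketch below assumes a hypothetical `EmailRecord` shape and uses the median for time to first response. Both choices are illustrative and are not a description of the scorecard used in the programme.

```python
import statistics
from dataclasses import dataclass


@dataclass
class EmailRecord:
    handled_by_agent: bool
    minutes_to_first_response: float
    reopened_within_7_days: bool


def scorecard(records: list[EmailRecord]) -> dict[str, float]:
    """Compute deflection rate, median time to first response, and reopen rate."""
    total = len(records)
    if total == 0:
        raise ValueError("scorecard needs at least one record")
    deflected = sum(1 for r in records if not r.handled_by_agent)
    reopened = sum(1 for r in records if r.reopened_within_7_days)
    return {
        "deflection_rate": deflected / total,
        "median_minutes_to_first_response": statistics.median(
            r.minutes_to_first_response for r in records
        ),
        "reopen_rate": reopened / total,
    }
```

The remaining measures, such as cost per conversation and agent satisfaction, depend on finance and survey data that sit outside the email records.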

What comes next for channel strategy?

Email should not exist alone. Triage AI becomes the connective tissue across channels. The same intent models power web chat, secure messaging, and IVR callbacks. Retrieval uses a single library. Templates and policies stay consistent. Customers receive joined up experiences. Agents see the same context and reasoning. Leaders see a single view of demand and performance. This convergence reduces fragmentation and helps the organisation move from firefighting to proactive service design.³ ⁶

How should leaders brief the board?

Leaders brief the board with clarity. State the baseline and the target. Define the guardrails for privacy, safety, and audit. Show the scorecard weekly for the first quarter. Confirm that the programme invests in people, not just tools. Link outcomes to risk reduction and customer promises. Explain that retrieval based AI is a content system as much as a technology system, which is why knowledge governance and change control are central. This narrative builds trust and accelerates value.⁷ ⁸

FAQ

What is Triage AI in customer service email and how does it work?

Triage AI is an AI enabled decision layer that classifies, prioritises, routes, and resolves customer emails using natural language understanding, business rules, and retrieval augmented generation. It labels intent and sentiment, grounds replies in approved knowledge, and auto resolves low risk requests with audit trails.³

How did Customer Science achieve 40 percent email deflection for this client?

Customer Science targeted four workstreams: auto responses, self service nudges, intelligent routing, and draft assist. The combined effect reduced agent handled email volume by 40 percent while improving time to first response and template compliance. Results were validated with A/B measurement and weekly governance.² ⁴ ⁵

Which metrics should executives track to manage Triage AI performance?

Executives should track deflection rate, time to first response, average handling time, first contact resolution, reopen rate, quality compliance, cost per conversation, and agent satisfaction. These metrics provide a balanced view of efficiency, experience, and risk.² ⁵

Why is retrieval augmented generation important for safe automation?

Retrieval augmented generation grounds AI outputs in an approved knowledge base. Grounding reduces hallucination risk, enforces policy language, and produces consistent citations for audit. It supports faster updates because content, not model weights, holds the truth.³ ⁶

What controls keep AI driven email triage compliant with privacy standards?

Controls include data minimisation, masking of personal data, policy based routing, human review for regulated content, logging of prompts and sources, quality sampling, adverse event tracking, and clear rollback paths. These controls align with public guidance on responsible AI.⁷

Which teams own Triage AI after go live at Customer Science clients?

Product owns backlog and experience design. Operations owns quality and process. Compliance owns policy and risk controls. Data and technology own integrations and observability. A monthly release train and a triage board coordinate changes.⁴

How should a service organisation start with Triage AI without overcommitting?

Start with three high volume, low risk intents. Stand up secure ingestion, a retrieval library tied to the single source of truth, and human in the loop review. Define success thresholds, stop rules, and a weekly governance rhythm. Expand coverage as quality stabilises.² ⁵ ⁸

Sources

1. “Contact centers: The new hub of the customer experience,” McKinsey & Company, 2022, Article. https://www.mckinsey.com/capabilities/operations/our-insights/contact-centers-the-new-hub-of-the-customer-experience

2. “Zendesk Customer Experience Trends Report 2024,” Zendesk, 2024, Industry Report. https://www.zendesk.com/blog/trends/

3. “A Primer on Retrieval Augmented Generation,” Pinecone + James Briggs, 2023, Technical Article. https://www.pinecone.io/learn/retrieval-augmented-generation/

4. “AI in operations: A practical guide to implementing at scale,” McKinsey & Company, 2023, Article. https://www.mckinsey.com/capabilities/operations/our-insights/ai-in-operations-a-practical-guide-to-implementing-at-scale

5. “The economic potential of generative AI: The next productivity frontier,” Manyika et al., 2023, McKinsey Global Institute. https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-economic-potential-of-generative-ai

6. “Generative AI in customer service: Early wins and guardrails,” BCG, 2023, Article. https://www.bcg.com/publications/2023/generative-ai-in-customer-service

7. “Explaining decisions made with AI,” UK Information Commissioner’s Office, 2020, Guidance. https://ico.org.uk/for-organisations/uk-gdpr-guidance-and-resources/artificial-intelligence/explaining-decisions-made-with-ai/

8. “Responsible AI practices,” Google, 2023, Documentation. https://ai.google/responsibility/principles/