What is a hypothesis in service transformation?

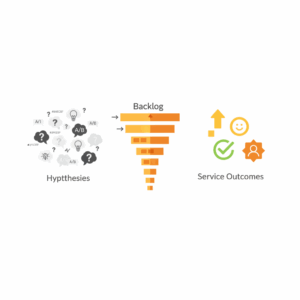

Leaders treat a hypothesis as a clear, testable belief about how a change will improve a customer or operational outcome. A good hypothesis names the user, the problem, the proposed change, and the expected outcome. It creates a contract for learning that teams can validate with evidence, not opinion. Lean practice formalised this approach through the build–measure–learn loop, where teams move quickly from idea to experiment to insight.² The same loop works in contact centres, field service, and digital support because service moments lend themselves to rapid observation and iteration. Service hypotheses align discovery with delivery. A written hypothesis keeps the team focused on value, not volume. The format also supports traceability because leaders can link hypotheses to backlog items, releases, and benefits tracking. Executives should require that all significant items in the service backlog originate from a named hypothesis with a measurable target.
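
One way to make the four parts of a hypothesis concrete is to record them as a small structured object. The sketch below is illustrative only: the class name, field names, and example figures are assumptions made for this example, not part of any standard or tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceHypothesis:
    """A testable belief about a service change (hypothetical field names)."""
    user: str        # who is affected
    problem: str     # what gets in their way today
    change: str      # the proposed change
    metric: str      # the outcome measure the change should move
    baseline: float  # current value of the metric
    target: float    # value that would count as success

    def statement(self) -> str:
        """Render the hypothesis as one readable sentence."""
        return (
            f"We believe that {self.change} for {self.user}, "
            f"who {self.problem}, will move {self.metric} "
            f"from {self.baseline} to {self.target}."
        )

    def is_supported(self, observed: float) -> bool:
        """True when the observed value reaches the target in the intended direction."""
        if self.target >= self.baseline:
            return observed >= self.target  # higher is better
        return observed <= self.target      # lower is better


# Example with made-up numbers, purely for illustration.
h = ServiceHypothesis(
    user="billing callers",
    problem="call back because the first answer was incomplete",
    change="adding a guided billing checklist for agents",
    metric="first contact resolution (%)",
    baseline=68.0,
    target=75.0,
)
print(h.statement())
```

Keeping the baseline and target inside the hypothesis means the later evidence check is a comparison against a number agreed in advance, not a matter of opinion.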

How should a service backlog work?

A service backlog is a single, transparent source of planned work across channels and functions. Scrum calls this the product backlog: an ordered list that serves as the team's single source of work and is sequenced to maximise value.¹ That clarity matters in service settings where operational work competes with improvement work. The backlog must carry both customer-facing changes and enabling work, such as data quality, agent tooling, or policy simplification. Each item in the backlog should reference the parent hypothesis, the outcome it aims to move, the expected effort, and the dependencies. Teams should order by value and risk, not by the loudest stakeholder. Regular backlog refinement brings service design, operations, technology, and compliance into the same conversation. This cadence creates shared context and reduces rework. High-quality backlogs make strategy tangible since leaders can see trade-offs in one place and align funding to the next most valuable slice.
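
The item fields and the ordering rule described above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the 1 to 5 scales, the field names, and the scoring rule (value plus risk, divided by effort) are invented for the example, and a real team would agree its own rule.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class BacklogItem:
    title: str
    hypothesis_id: str   # parent hypothesis this item traces to
    outcome: str         # outcome the item aims to move
    effort_days: float   # expected effort
    value: int           # 1 (low) to 5 (high), hypothetical scale
    risk: int            # 1 (low) to 5 (high); higher means more to learn early
    depends_on: List[str] = field(default_factory=list)


def order_backlog(items: List[BacklogItem]) -> List[BacklogItem]:
    """Order items by (value + risk) per day of effort, highest first.

    The scoring rule is an assumption for illustration, not a Scrum rule.
    """
    return sorted(
        items,
        key=lambda item: (item.value + item.risk) / item.effort_days,
        reverse=True,
    )


backlog = [
    BacklogItem("Guided billing checklist", "H1", "first contact resolution", 4, value=5, risk=3),
    BacklogItem("Fix address data feed", "H2", "cycle time", 1, value=2, risk=1),
    BacklogItem("Simplify refund policy", "H1", "effort score", 8, value=4, risk=4,
                depends_on=["Fix address data feed"]),
]

for item in order_backlog(backlog):
    print(item.title, "->", item.hypothesis_id)
```

Whatever rule a team chooses, writing it down as a function makes the ordering repeatable and open to challenge, which is the point of ordering by value and risk.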

What outcomes matter in modern service operations?

Executives should frame service outcomes around customer value, reliability, and cost to serve. Outcome clarity prevents teams from optimising local metrics that do not help the customer. The Double Diamond model from the Design Council helps teams move from problem framing to delivery with discipline, which keeps outcomes central from the start.³ In practice, leaders can define north-star outcomes like first contact resolution, effort score, cycle time, and safe compliance, then connect them to financial value. Outcome-based planning complements experience metrics such as Net Promoter Score, which measures the likelihood of advocacy when customers consider the whole experience.⁶ By blending reliability measures with perception measures, organisations see both the service reality and how customers feel about it. Strong outcome definitions also enable scenario planning because teams can model the impact of volume changes, policy shifts, or new channels on the same scoreboard.
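
For readers who want to see how the perception measure is derived, the standard Net Promoter Score calculation can be sketched as follows. It assumes the usual 0 to 10 "likelihood to recommend" scale, where 9 and 10 count as promoters and 0 to 6 as detractors; the function name and sample ratings are invented for the example.

```python
def net_promoter_score(ratings):
    """Return NPS: percentage of promoters minus percentage of detractors.

    Assumes each rating is an integer from 0 to 10.
    """
    if not ratings:
        raise ValueError("at least one rating is required")
    promoters = sum(1 for r in ratings if r >= 9)   # ratings of 9 or 10
    detractors = sum(1 for r in ratings if r <= 6)  # ratings of 0 to 6
    return 100.0 * (promoters - detractors) / len(ratings)


# Made-up survey responses, for illustration only.
sample = [10, 9, 8, 7, 6, 3, 10, 9, 5, 8]
print(net_promoter_score(sample))  # 4 promoters, 3 detractors, 10 responses -> 10.0
```

Because the score is a difference of two percentages, it can sit beside reliability measures such as first contact resolution on the same scoreboard without being mistaken for one of them.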

How do we link hypotheses to backlog items?

Teams connect hypotheses to backlog items through acceptance criteria that directly reference the intended outcome. A single hypothesis can spawn a small set of thin slices that progress from lowest-risk learning to higher-impact delivery. This structure allows leaders to stop or amplify work based on evidence. The Scrum Guide reinforces the value of small, inspectable increments that can be reviewed with stakeholders at regular intervals.¹ Service teams can mimic that cadence by demonstrating new conversation flows, revised knowledge articles, or policy adjustments in review forums that include frontline leaders. Each demonstration should surface the evidence gathered and the next decision. This rhythm turns governance into guided learning rather than stage-gate policing. Clear traceability from hypothesis to item to increment also strengthens benefits realisation because finance partners can audit how changes contributed to the service scorecard.

How do we measure impact with OKRs and service metrics?

Organisations translate outcomes into Objectives and Key Results to align leadership intent with team execution. OKRs set a qualitative objective, then bind it to a small set of quantitative results that define success.⁴ In service transformations, OKRs connect directly to hypotheses and backlog items so a team’s weekly work moves quarterly outcomes. Service metrics provide the feedback loop. Leaders should pair flow metrics like lead time and throughput with quality metrics like error rates and escalation rates to prevent local optimisation. Little’s Law provides a simple relation among average work-in-progress, throughput, and cycle time.⁵ This relation helps leaders reason about queues and capacity in contact centres and back offices. When teams visualise the system with this relation in mind, they pull less work into progress, finish faster, and reduce customer wait. Executives should require every initiative to declare the baseline, the target, and the data source before work begins.
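
Little's Law can be written as average work-in-progress = throughput × average cycle time, so the average cycle time is work-in-progress divided by throughput. The sketch below works through that arithmetic. It assumes a reasonably stable system measured over a long enough period, and the function name and case volumes are illustrative.

```python
def average_cycle_time(avg_wip, throughput):
    """Little's Law rearranged: cycle time = average WIP / throughput.

    avg_wip:    average number of items in progress (for example, open cases)
    throughput: average items completed per unit of time (for example, per day)
    Returns the average time an item spends in the system, in the same time unit.
    """
    if throughput <= 0:
        raise ValueError("throughput must be positive")
    return avg_wip / throughput


# Illustrative back-office queue: 120 open cases, 40 closed per day.
print(average_cycle_time(120, 40))  # 3.0 days on average

# Same throughput with WIP held to 80 open cases.
print(average_cycle_time(80, 40))   # 2.0 days on average
```

The second call shows why pulling less work into progress shortens customer wait: with throughput unchanged, cutting average work-in-progress by a third cuts average cycle time by a third.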

How do we manage flow and risk in a service backlog?

Service leaders manage flow by limiting work-in-progress, setting review cycles, and guarding quality gates that protect customers. Flow thinking reduces hidden queues that create long lead times and erode satisfaction. Kanban practices apply well in this context because visualising work, limiting WIP, and managing policies are compatible with complex service operations.⁵ Risk sits beside flow. Teams must identify regulatory, privacy, and safety risks early, then mitigate through experiments that learn safely. Service blueprinting supports this by mapping frontstage interactions and backstage dependencies across time. The technique helps teams test changes in one area without breaking another.³ Leaders should promote a culture where agents and designers raise risks early without penalty. The backlog then becomes the place where mitigation tasks live next to feature work. This integrated view reduces surprises and keeps delivery balanced across value, risk, and speed.
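
A work-in-progress limit is, at its simplest, a rule that is checked before new work is pulled. The following sketch shows one hypothetical way to express that check. The board layout, column names, and limits are assumptions for illustration and do not reflect any particular Kanban tool.

```python
def can_pull(board, column, wip_limits):
    """Return True if `column` is below its WIP limit.

    board:      dict mapping column name to the list of items currently in it
    wip_limits: dict mapping column name to its maximum item count;
                a column with no entry is treated as unlimited
    """
    limit = wip_limits.get(column)
    if limit is None:
        return True
    return len(board.get(column, [])) < limit


# Illustrative board with a mitigation task sitting next to feature work.
board = {
    "In progress": ["New callback flow", "Privacy review of callback flow"],
    "In review": ["Revised refund article"],
}
wip_limits = {"In progress": 2, "In review": 3}

print(can_pull(board, "In progress", wip_limits))  # False: limit of 2 reached
print(can_pull(board, "In review", wip_limits))    # True: 1 of 3 used
```

Making the limit explicit turns "finish before starting" from a slogan into a policy the team can inspect and adjust.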

What operating model enables continuous learning?

Executives enable continuous learning by hardwiring a few structural elements. First, they sponsor cross-functional teams that own a customer journey segment end to end. Second, they run a dual track where discovery and delivery work together, not sequentially. Third, they invest in measurement and analytics so teams can see cause and effect quickly. The Lean Startup loop provides the discipline to turn these structures into motion: build, measure, learn, repeat.² The Scrum events provide the scaffolding for collaboration and inspection that maintains accountability.¹ Service blueprinting and the Double Diamond give discovery its rigour so teams frame the right problem before scaling delivery.³ OKRs bind the pieces together so strategy flows into work and results flow back into strategy.⁴ When leaders set these elements as the standard way of working, continuous improvement stops being a project and becomes the operating system.

What is the first move for leaders?

Leaders start by naming the outcomes that matter, then funding hypotheses rather than projects. This signal changes how teams plan, how they prioritise, and how they report. The next move is to consolidate improvement work into a single backlog and enforce the rule that every item traces to a hypothesis with a testable result. The third move is to shift governance from stage approvals to regular reviews that examine increments, evidence, and next decisions. The fourth move is to align incentives with OKRs that reward measurable improvements in service outcomes. These steps sound simple because they are simple. The discipline sits in consistency. When leaders reinforce these moves, teams stop shipping output and start shipping outcomes. The organisation then improves customer satisfaction, reduces operating cost, and increases resilience because learning compounds across cycles. The service system gets faster, safer, and more humane.

FAQ

What is a hypothesis in Customer Science service transformation?

A hypothesis is a testable belief that a change will improve a defined outcome. It names the customer, the problem, the proposed change, and the expected result, then drives an experiment through the build–measure–learn loop.²

How does a service backlog differ from a task list?

A service backlog is a single, ordered source of work that maximises value and ties each item to an outcome and parent hypothesis, following Scrum guidance on product backlogs.¹

Which outcomes should Customer Science clients prioritise?

Clients should focus on customer value, reliability, and cost to serve, supported by discovery frameworks like the Double Diamond and perception metrics like Net Promoter Score.³ ⁶

Why link OKRs to hypotheses and backlog items?

Linking OKRs to hypotheses and backlog items aligns leadership intent with team execution and provides measurable results that confirm impact on service outcomes.⁴

How can Little’s Law improve contact centre flow?

Little’s Law relates average work-in-progress, throughput, and cycle time. Managing WIP with this relation shortens lead times and reduces customer waiting.⁵

Who should attend service review sessions?

Service reviews should include product owners, service designers, frontline leaders, operations, technology, and compliance to inspect increments and decide next steps, consistent with Scrum’s inspect-and-adapt principle.¹

What first step should Australian enterprises take with Customer Science?

Set clear service outcomes, fund hypotheses instead of projects, and consolidate all improvement work into a single backlog that traces each item to a measurable result.

Sources

1. The Scrum Guide. Ken Schwaber, Jeff Sutherland. 2020. Scrum Guides. https://scrumguides.org/scrum-guide.html

2. The Lean Startup: Build–Measure–Learn Principles. Eric Ries. 2011. The Lean Startup. https://theleanstartup.com/principles

3. The Double Diamond: A universally adopted design process. Design Council. 2019. Design Council. https://www.designcouncil.org.uk/our-resources/the-double-diamond/

4. Set goals with OKRs: Introduction. re:Work by Google. 2016. Google re:Work. https://rework.withgoogle.com/guides/set-goals-with-okrs/steps/introduction/

5. Little’s Law. Wikipedia editors. 2024. Wikipedia. https://en.wikipedia.org/wiki/Little%27s_law

6. Net Promoter System: About NPS. Bain & Company. 2023. Bain & Company. https://www.netpromotersystem.com/about/