Why do prototypes stall when they meet real operations?

Executives approve bold proofs of concept, yet too many falter once they become pilots that must run in the real world. When the handoff from prototype to pilot lacks clear ownership, risk controls, and measurable outcomes, leaders risk sunk cost, damaged credibility, and confused teams. Independent research shows that many digital initiatives miss their targets, often because execution hygiene breaks down at transition points between teams and phases.¹ The prototype-to-pilot moment is exactly such a transition. Treating this handoff as an operational change, with explicit controls, service readiness, and measurable flow and stability metrics, prevents waste and accelerates value.² ³

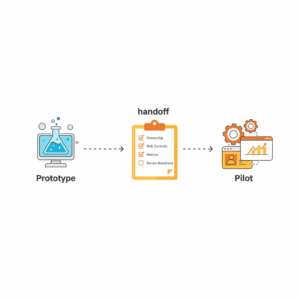

What does “prototype-to-pilot handoff” mean in plain terms?

This handoff moves a working concept from a lab setting into a controlled live trial with real users, data, and service levels. A prototype proves desirability and technical feasibility in isolation. A pilot proves operability, risk posture, and business impact in production-like settings. The handoff codifies design intent, expected outcomes, operational constraints, and service ownership so that the pilot behaves like a small, real product. Structured handoffs align with recognized practices in innovation management, which call for defined processes to move ideas into value-creating operations.⁴ ⁵

How do we guard against risk while keeping speed?

Leaders protect customers and systems by applying lightweight but formal controls for change, configuration, and security. Change enablement practices in modern service management focus on safe throughput rather than gatekeeping, which fits pilots that must evolve quickly while remaining auditable.⁶ ⁷ The Configuration Management (CM) control family in NIST SP 800-53, which covers baseline configuration, configuration change control, and impact analysis, provides a common language for impact assessment, traceability, and rollback, even at small pilot scale.⁸ ⁹ The right posture is pragmatic: use the minimum controls needed for safety, learn fast, and document decisions.

What outcomes should every pilot prove before scale?

Executives should require four outcomes: operational stability, user adoption, business effectiveness, and compliance readiness. DORA (DevOps Research and Assessment) metrics, which correlate delivery performance with organizational outcomes, give a compact way to measure flow and reliability during pilots. Deployment frequency and lead time for changes track throughput. Change failure rate and time to restore service track stability. High performers that keep these metrics strong are more likely to meet business goals, which justifies using them in pilots as leading indicators.² ³
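To make the four metrics concrete, here is a minimal Python sketch of how a pilot team might compute them from its own deployment log. The `Deployment` record, its field names, and the choice to measure time to restore from the moment of deployment are illustrative assumptions, not definitions taken from DORA.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    # Hypothetical record shape; adapt the fields to your own deployment log.
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached the pilot environment
    failed: bool = False                    # did the change degrade the pilot service?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def dora_summary(deployments: list[Deployment], window_days: int) -> dict:
    """Summarize the four metrics over a pilot window of `window_days` days."""
    if not deployments:
        raise ValueError("need at least one deployment")
    lead_times = [d.deployed_at - d.committed_at for d in deployments]
    failures = [d for d in deployments if d.failed]
    # Assumption: restore time runs from deployment to restoration.
    restores = [d.restored_at - d.deployed_at for d in failures if d.restored_at]
    return {
        "deploys_per_week": len(deployments) / (window_days / 7),
        "median_lead_time": median(lead_times),
        "change_failure_rate": len(failures) / len(deployments),
        "median_time_to_restore": median(restores) if restores else None,
    }


# Invented sample data for a two-week pilot window.
t0 = datetime(2025, 1, 6, 9, 0)
sample = [
    Deployment(t0, t0 + timedelta(hours=4)),
    Deployment(t0 + timedelta(days=2), t0 + timedelta(days=2, hours=6)),
    Deployment(t0 + timedelta(days=5), t0 + timedelta(days=5, hours=8),
               failed=True, restored_at=t0 + timedelta(days=5, hours=9)),
    Deployment(t0 + timedelta(days=9), t0 + timedelta(days=9, hours=2)),
]
summary = dora_summary(sample, window_days=14)
```

Medians are used here because a single slow change should not dominate a small pilot sample; a team could equally report percentiles.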

The Handoff Checklist: what must be true on day zero of the pilot?

Product teams deliver a single “pilot readiness pack” that names owners, codifies risks, and locks service expectations. The pack includes a one-page intent, a RACI, a minimum viable runbook, security and privacy notes, test evidence, and exit criteria. This structure maps to innovation system guidance that stresses documented pathways from idea to implementation with roles, competencies, and governance.⁴ ⁵ Service leaders add a change record, a rollback plan, and configuration baselines so operations can manage incidents if they occur.⁸ ⁹ When this pack is present and approved, the pilot can start with confidence and traceability.⁶ ⁷

Who owns what during the pilot?

The sponsor owns business outcomes and budget. The product manager owns value hypothesis, scope, and roadmap. The service owner owns availability, incident response, and change control in the live environment. The tech lead owns architecture, nonfunctional requirements, and technical debt. The data steward owns data quality, lineage, and privacy obligations. The risk lead owns threat modeling and mitigations. These roles mirror management system guidance that emphasizes defined responsibilities for innovation outputs and supporting processes.⁴ ⁵ Clear ownership avoids ambiguity during incidents and reviews.

How do we define pilot scope and guardrails?

Teams state a clear value hypothesis, target segments, and in-scope journeys. They set nonfunctional baselines such as response time, error budgets, and support hours. They record constraints such as data residency or third-party dependencies. They identify change windows and maintenance rules using change enablement conventions.⁶ ⁷ They publish a rollback trigger that combines objective thresholds, such as consecutive failed changes or breach of error budget, with a time-bound decision window. NIST-style configuration control ensures environment parity and drift tracking from day one.⁸ ⁹
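The rollback trigger described above can be written down as a small rule so that nobody has to argue about it during an incident. The following Python sketch is one possible shape, under stated assumptions: the class name, the threshold values, and the way the error budget is expressed as an error ratio are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class RollbackTrigger:
    # All thresholds are hypothetical; set them in the readiness pack.
    max_consecutive_failed_changes: int
    error_budget: float          # allowed ratio of failed requests
    decision_window: timedelta   # time allowed to decide once tripped

    def tripped(self, recent_changes_ok: list[bool], errors: int, requests: int) -> bool:
        """True when either objective threshold is breached."""
        streak = 0
        for ok in reversed(recent_changes_ok):  # count failures at the tail only
            if ok:
                break
            streak += 1
        error_ratio = errors / requests if requests else 0.0
        return (streak >= self.max_consecutive_failed_changes
                or error_ratio > self.error_budget)

    def decide_by(self, tripped_at: datetime) -> datetime:
        """Deadline for the rollback decision after the trigger trips."""
        return tripped_at + self.decision_window


trigger = RollbackTrigger(
    max_consecutive_failed_changes=2,
    error_budget=0.01,
    decision_window=timedelta(hours=4),
)
two_failed_in_a_row = trigger.tripped([True, False, False], errors=3, requests=1000)
healthy = trigger.tripped([False, True], errors=5, requests=1000)
budget_breached = trigger.tripped([True], errors=20, requests=1000)
deadline = trigger.decide_by(datetime(2025, 1, 6, 14, 0))
```

Keeping the thresholds and the decision window in one object means the trigger can be versioned alongside the configuration baseline.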

Which documents and assets must the team hand over?

Teams hand over artifacts that operations can run without the original inventors in the room. The pack includes the following items:

Intent brief that defines goals, success measures, and duration.

RACI and contact matrix for sponsor, product, service, tech, data, and risk.

Architecture sketch with data flows, trust boundaries, and dependencies.

Operational runbook with health checks, alerts, triage steps, and escalation.

Change plan with deployment method, backout steps, and windows.

Configuration baseline and versioned parameters.

Security and privacy notes with identified controls and accepted risks.

Test evidence covering happy paths, edge cases, and failure modes.

DORA metric dashboard seed with definitions and starting baselines.² ⁸

These items align with recognized guidance on innovation governance, configuration control, and service change readiness.⁴ ⁶ ⁹
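A readiness pack is easier to approve when its completeness can be checked mechanically. As a rough sketch, assuming the pack is tracked as a mapping from item name to a document location, the nine items listed above could be verified like this. The key names and file paths are invented for illustration.

```python
# Hypothetical keys, one per item in the handover list.
REQUIRED_ITEMS = (
    "intent_brief",
    "raci",
    "architecture_sketch",
    "runbook",
    "change_plan",
    "configuration_baseline",
    "security_privacy_notes",
    "test_evidence",
    "dora_dashboard_seed",
)


def missing_items(pack: dict[str, str]) -> list[str]:
    """Return required items that are absent or have an empty location."""
    return [item for item in REQUIRED_ITEMS if not pack.get(item, "").strip()]


# Invented example: the runbook link is blank and the change plan is absent.
pack = {
    "intent_brief": "docs/intent.md",
    "raci": "docs/raci.md",
    "architecture_sketch": "docs/architecture.md",
    "runbook": "",
    "configuration_baseline": "config/baseline.yaml",
    "security_privacy_notes": "docs/security.md",
    "test_evidence": "reports/tests.html",
    "dora_dashboard_seed": "dashboards/pilot.json",
}
gaps = missing_items(pack)
ready = not gaps
```

A check like this only confirms that each artifact exists; reviewing its quality remains a human decision.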

How do we measure pilot success without overburdening teams?

Executives track a compact metric set that blends business outcomes with delivery signals. The business side includes adoption, conversion, or cycle time improvement relevant to the journey under test. The delivery side uses DORA metrics to show whether the team ships safely and learns quickly in production-like settings.² ³ A weekly review compares observed metrics to thresholds. If change failure rate or time to restore degrades beyond its limit, the pilot pauses for a corrective action plan. Using widely studied metrics reduces debate and speeds decisions.²
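The weekly review rule is simple enough to express in a few lines. This Python sketch assumes observed values and limits are kept in dictionaries with matching keys; the metric keys and the limit values shown are placeholders, not recommended thresholds.

```python
from datetime import timedelta


def weekly_review(observed: dict, limits: dict) -> tuple[str, list[str]]:
    """Return ("continue" or "pause", list of breached stability metrics)."""
    breached = [
        name
        for name in ("change_failure_rate", "time_to_restore")
        if observed[name] > limits[name]
    ]
    return ("pause" if breached else "continue", breached)


# Placeholder limits agreed before the pilot starts.
limits = {"change_failure_rate": 0.15, "time_to_restore": timedelta(hours=4)}
# Invented observations for one review week.
week_3 = {"change_failure_rate": 0.10, "time_to_restore": timedelta(hours=6)}
status, breached = weekly_review(week_3, limits)
```

Returning the list of breached metrics alongside the status gives the corrective action plan a starting point.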

What risks most often derail the handoff?

Pilots fail when teams rely on heroics instead of documented runbooks, when security and privacy checks arrive after deployment, or when success criteria are vague. Broader research into transformation failure points to weak change management and poor tracking of progress as root causes, which appear acutely in this handoff.¹ When leaders insist on explicit ownership, simple change control, and leading indicators, pilots avoid the drift that quietly erodes momentum. In innovation programs, repeatable governance separates experiments that scale from artifacts that gather dust.⁴ ⁵

How do we decide to scale, iterate, or stop?

Decision forums use pre-committed exit criteria anchored to the value hypothesis, risk posture, and operational signals. A pilot advances when it meets the business threshold, holds stability within limits, and shows a viable support model at target scale. It iterates when user value is promising but stability or adoption lags. It stops when outcomes miss their thresholds, risks outweigh benefits, or support costs will exceed value. Using change enablement artifacts and NIST-style records, the forum captures rationale and learnings for institutional memory.⁶ ⁸
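Pre-committing the decision logic helps the forum resist momentum. The sketch below encodes the three outcomes as a Python function. The evidence fields are hypothetical yes/no judgments the forum would record, and the order in which the rules are checked (stop conditions first) is an assumption rather than something the criteria prescribe.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    SCALE = "scale"
    ITERATE = "iterate"
    STOP = "stop"


@dataclass
class PilotEvidence:
    # Hypothetical yes/no judgments recorded by the decision forum.
    meets_business_threshold: bool
    stability_within_limits: bool
    support_model_viable: bool
    user_value_promising: bool
    risks_outweigh_benefits: bool
    support_cost_exceeds_value: bool


def decide(e: PilotEvidence) -> Decision:
    # Assumption: hard stop conditions are checked before anything else.
    if e.risks_outweigh_benefits or e.support_cost_exceeds_value:
        return Decision.STOP
    if (e.meets_business_threshold and e.stability_within_limits
            and e.support_model_viable):
        return Decision.SCALE
    # Promising value with lagging stability or adoption: iterate.
    if e.user_value_promising:
        return Decision.ITERATE
    # Outcomes missed and no promising signal remains.
    return Decision.STOP


evidence = PilotEvidence(
    meets_business_threshold=False,
    stability_within_limits=False,
    support_model_viable=True,
    user_value_promising=True,
    risks_outweigh_benefits=False,
    support_cost_exceeds_value=False,
)
outcome = decide(evidence)
```

The function is deliberately small: its value lies in being agreed before the pilot starts, not in its sophistication.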

The practical checklist you can lift and use today

Leaders can apply this concise, sequence-friendly checklist at the moment of handoff:

Confirm sponsor, service owner, and on-call roster with a RACI in the readiness pack.

Approve a change record with deployment plan, backout steps, and maintenance window.⁶

Baseline configuration and environment parity with versioned parameters and drift alerts.⁸ ⁹

Publish a runbook with health checks, alert thresholds, and triage playbooks.

Attach security and privacy notes with accepted risks and mitigations.⁸

Stand up a pilot dashboard that shows DORA metrics and business outcomes side by side.² ³

Lock exit criteria and schedule weekly reviews with a time-boxed decision date.

Start with a small blast radius and a named rollback trigger tied to error budgets.

Capture learnings, update artifacts, and archive decisions after each change.⁶

This sequence embeds innovation discipline and operational safety while preserving speed.⁴ ⁶ ⁸
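The baseline and drift-alert item in the checklist can start as a plain comparison between the approved parameters and what the environment reports. A minimal Python sketch follows, assuming both are available as flat key-value mappings; the parameter names and values are invented.

```python
_MISSING = "<missing>"


def config_drift(baseline: dict[str, str],
                 observed: dict[str, str]) -> dict[str, tuple[str, str]]:
    """Map each drifted parameter to (baseline value, observed value)."""
    drift = {}
    for key in sorted(baseline.keys() | observed.keys()):
        expected = baseline.get(key, _MISSING)
        actual = observed.get(key, _MISSING)
        if expected != actual:
            drift[key] = (expected, actual)
    return drift


# Invented baseline and observed state for a pilot environment.
baseline = {
    "app_version": "0.4.2",
    "feature_flag.new_flow": "on",
    "db_pool_size": "10",
}
observed = {
    "app_version": "0.4.2",
    "feature_flag.new_flow": "off",
    "db_pool_size": "10",
    "debug": "true",
}
drift = config_drift(baseline, observed)
```

An empty result means the environment matches the baseline; any entry is a candidate for an alert or a change record.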

What impact should leaders expect within one quarter?

Executives should expect faster decision cycles, higher pilot credibility with operations, and clearer evidence for scale-up funding. Programs that measure delivery flow and stability improve their odds of meeting organizational goals, which is the point of running pilots in the first place.² ³ Innovation portfolios that institutionalize this handoff reduce waste and move more ideas into production with predictable risk. Over time, common artifacts become templates, and pilot teams spend more effort on customer value and less on chasing approvals. That is how organizations turn experimentation into enterprise capability.⁴

FAQ

How does the Prototype-to-Pilot Handoff Checklist reduce failure risk for digital initiatives?

The checklist forces explicit ownership, simple change control, configuration baselines, and DORA metric visibility at the moment value moves into live settings, which addresses common execution gaps that derail transformations.¹ ² ⁶ ⁸

What are the four DORA metrics we should track during pilots, and why?

Teams track deployment frequency, lead time for changes, change failure rate, and time to restore service. These metrics capture throughput and stability and correlate with better organizational performance, making them ideal leading indicators for pilots.² ³

Which governance references inform this checklist for enterprise use?

The checklist aligns with ISO 56002 innovation management guidance for moving ideas into value, ITIL 4 change enablement for safe throughput, and NIST SP 800-53 configuration and change controls for traceability and risk management.⁴ ⁶ ⁸ ⁹

Who owns the pilot once a prototype leaves the lab?

The service owner holds operational accountability, supported by the sponsor for business outcomes and budget, a product manager for value, a tech lead for architecture and nonfunctional requirements, a data steward for data quality and privacy, and a risk lead for threats and mitigations. Ownership clarity reduces incident ambiguity and speeds decisions.⁴ ⁶

Which documents must be included in the pilot readiness pack?

Teams provide an intent brief, RACI, architecture sketch, runbook, security and privacy notes, test evidence, change plan with rollback, configuration baseline, and a pilot dashboard seeded with DORA metrics and business measures, so operations can run the pilot independently.² ⁴ ⁸

Why does Customerscience recommend pre-committed exit criteria for pilots?

Pre-committed exit criteria tie decisions to business outcomes, stability thresholds, and support viability, which reduces bias and keeps pilots focused on evidence rather than momentum. This practice strengthens portfolio discipline and funding decisions.² ⁶

Which industries benefit most from this handoff model?

Any sector running regulated or customer-facing pilots benefits, including financial services, healthcare, government, and communications, because the model blends innovation discipline with service safety and compliance-ready practices.⁴ ⁶ ⁸

Sources

1. Why digital strategies fail. Jacques Bughin, Tanguy Catlin, Martin Hirt, Paul Willmott. 2018. McKinsey Quarterly. https://www.mckinsey.com/capabilities/mckinsey-digital/our-insights/why-digital-strategies-fail

2. DORA’s software delivery metrics: the four keys. DORA Community. 2025. dora.dev. https://dora.dev/guides/dora-metrics-four-keys/

3. Using the Four Keys to measure your DevOps performance. Google Cloud Blog. 2020. cloud.google.com. https://cloud.google.com/blog/products/devops-sre/using-the-four-keys-to-measure-your-devops-performance

4. ISO 56002:2019, Innovation management system, Guidance (Overview). ISO. 2022. iso.org PDF. https://www.iso.org/files/live/sites/isoorg/files/store/en/PUB100468.pdf

5. ISO 56002, Innovation Management System Guidance. BSI. 2024. bsigroup.com. https://www.bsigroup.com/en-GB/products-and-services/standards/iso-56002-innovation-management-system-guidance/

6. Change Enablement in ITIL 4. itsm.tools. 2025. https://itsm.tools/change-enablement/

7. ITIL 4 Practitioner: Change Enablement. PeopleCert. 2024. peoplecert.org. https://www.peoplecert.org/browse-certifications/it-governance-and-service-management/ITIL-1/itil-4-practitioner-change-enablement-3794

8. SP 800-53 Rev. 5, Security and Privacy Controls. Ross, Pillitteri, Dempsey, Riddle, Guissanie. 2020. NIST, csrc.nist.gov. https://csrc.nist.gov/pubs/sp/800/53/r5/upd1/final

9. NIST SP 800-53 r5: Configuration Management controls (CM family). CSF Tools reference. 2024. csf.tools. https://csf.tools/reference/nist-sp-800-53/r5/cm/