Why do CX leaders keep debating real time vs batch?

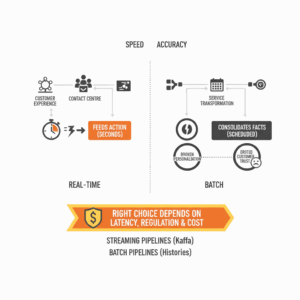

Executives chase speed because customers judge brands in moments. Leaders chase accuracy because decisions fail without trustworthy data. Real-time data feeds action within seconds. Batch data consolidates facts at scheduled intervals. Both approaches solve different problems in customer experience, contact centre operations, and service transformation. The right choice depends on latency tolerance, regulatory expectations, and the cost of computation. Cloud platforms formalise the split. Streaming pipelines process events continuously from message buses such as Apache Kafka. Batch pipelines process large volumes on a schedule and can recompute complete histories to improve accuracy.¹ ²

What is real-time data, precisely?

Real-time data means events are captured, transported, and processed with low latency, often seconds or less, so applications can react quickly. Streaming engines distinguish between the time an event happened and the time a system processed it. This distinction, known as event time versus processing time, allows accurate aggregations even when messages arrive late or out of order. Modern stream processors and libraries support stateful operators, windowing, joins, and exactly-once processing to reduce duplicates and preserve correctness during failures.³ ⁴
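To make the event time versus processing time distinction concrete, here is a minimal sketch in plain Python rather than a real stream processor. The `Event` class, the `count_per_window` helper, and the sample timestamps are all hypothetical, chosen only to show how one late-arriving message changes a one-minute count.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    customer_id: str
    event_time: int       # seconds, when the customer acted (hypothetical values)
    processing_time: int  # seconds, when the system handled the message


def count_per_window(events, window_seconds, time_field):
    """Count events per tumbling window, keyed by window start time."""
    counts = defaultdict(int)
    for event in events:
        ts = getattr(event, time_field)
        window_start = ts - (ts % window_seconds)
        counts[window_start] += 1
    return dict(counts)


events = [
    Event("c1", event_time=5, processing_time=6),
    Event("c2", event_time=58, processing_time=61),  # acted in minute 0, seen in minute 1
    Event("c3", event_time=62, processing_time=63),
]

by_event_time = count_per_window(events, 60, "event_time")
by_processing_time = count_per_window(events, 60, "processing_time")

print(by_event_time)       # {0: 2, 60: 1}
print(by_processing_time)  # {0: 1, 60: 2}
```

Grouping by event time credits the second customer to the minute in which they actually acted, which is the behaviour the paragraph above describes.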

What is batch data, and why does it still win?

Batch data consolidates records in groups and processes them at set times. The model favours completeness and recomputation. Teams can rerun the entire pipeline to repair defects, apply new business logic, or backfill features for advanced analytics. Batch runs tend to be more accurate because each run sees all the data available at that point, including records that arrived late. Cloud providers document batch as ideal for heavy transformations, historical reconciliation, regulatory reporting, and model training when latency requirements span minutes to hours.¹ ⁵
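The recomputation idea can be sketched in a few lines. This is an illustration under assumed data, not a production job: `run_batch`, the record fields, and the "settled" rule are hypothetical. The point is that a batch run is a function of the full raw history, so a logic change is applied by rerunning it.

```python
def run_batch(raw_records, is_valid):
    """Recompute revenue per customer from the complete raw history."""
    totals = {}
    for record in raw_records:
        if not is_valid(record):
            continue
        customer = record["customer"]
        totals[customer] = totals.get(customer, 0) + record["amount_cents"]
    return totals


# Hypothetical raw history kept unchanged between runs.
raw = [
    {"customer": "a", "amount_cents": 1000, "status": "settled"},
    {"customer": "a", "amount_cents": 500, "status": "refunded"},
    {"customer": "b", "amount_cents": 700, "status": "settled"},
]

# First version of the business logic counts everything.
v1 = run_batch(raw, lambda r: True)

# Corrected logic excludes refunds; the whole history is simply recomputed.
v2 = run_batch(raw, lambda r: r["status"] == "settled")

print(v1)  # {'a': 1500, 'b': 700}
print(v2)  # {'a': 1000, 'b': 700}
```

Because the raw input is untouched, running the corrected version again always yields the same output, which is what makes the result reproducible.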

How do Lambda and Kappa architectures frame the choice?

Architecture frames the trade-off. Lambda architecture splits work into a batch layer for accuracy, a speed layer for freshness, and a serving layer for queries. It offers robustness at the cost of duplicate code paths that raise operational overhead.⁶ Kappa architecture simplifies this by treating the event stream as the system of record and replaying it to reprocess history, which avoids running two separate stacks. Kappa suits event-first platforms where backfills can be achieved by replaying topics.⁷ Both patterns remain relevant, and the better fit depends on reprocessing needs, team skill sets, and tooling maturity.
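A toy comparison may help show where the duplicate code paths come from. The sketch below is an assumption-laden illustration in plain Python: the function names, the in-memory `log`, and the cutoff are hypothetical, and neither pattern is tied to any particular product.

```python
from collections import Counter

# Hypothetical event log: contacts arriving per queue.
log = [
    {"ts": 1, "queue": "billing"},
    {"ts": 2, "queue": "sales"},
    {"ts": 3, "queue": "billing"},
    {"ts": 4, "queue": "billing"},
]


# Lambda: two code paths, merged by a serving layer at query time.
def batch_layer(events, cutoff_ts):
    """Accurate view over everything up to the last batch cutoff."""
    return Counter(e["queue"] for e in events if e["ts"] <= cutoff_ts)


def speed_layer(events, cutoff_ts):
    """Fresh view over events that arrived after the cutoff."""
    return Counter(e["queue"] for e in events if e["ts"] > cutoff_ts)


def serving_layer(batch_view, speed_view):
    return batch_view + speed_view


# Kappa: one streaming code path; history is rebuilt by replaying the log.
def stream_processor(events):
    state = Counter()
    for event in events:
        state[event["queue"]] += 1
    return state


lambda_result = serving_layer(batch_layer(log, 2), speed_layer(log, 2))
kappa_result = stream_processor(log)  # replay from the first event

print(lambda_result == kappa_result)  # True
```

Both approaches reach the same counts here. The difference is that the Lambda version has two functions that must stay logically identical, while the Kappa version depends on the full log being retained and replayable.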

Where does each fit in the customer experience stack?

Contact centres, digital channels, and service operations contain moments with different latency needs. Routing, fraud screening, and next-best-action require real-time features that refresh within seconds to inform decisioning in the live interaction. Streaming supports these cases by continuously computing features like recent sentiment, queue backlog, or shopping cart context.¹ Customer analytics, billing audits, regulatory reports, and quarterly experience reviews tolerate longer intervals. Batch pipelines shine here by recomputing full-fidelity facts, standardising schemas, and generating trustworthy gold datasets for BI and governance.⁵

How do reliability, data quality, and lineage differ?

Reliability expectations should be explicit. Service level objectives give teams a language for targets such as freshness, completeness, and latency. A freshness SLI expresses the proportion of valid data updated more recently than a threshold, which is critical when balancing speed and trust. Real-time systems emphasise continuity and exactly-once delivery to avoid double counting during failover. Batch systems emphasise reproducibility, deterministic outputs, and lineage from raw to curated layers. When leaders quantify these expectations, they select the right architecture and budget for the reliability that customers will notice.⁸ ⁹ ³
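The freshness SLI described above is a simple ratio, and a small sketch makes the calculation explicit. The function name, the sample timestamps, the 60-second threshold, and the 75% target are all hypothetical values chosen for illustration.

```python
def freshness_sli(last_updated, now, threshold_seconds):
    """Proportion of records updated more recently than the threshold."""
    if not last_updated:
        raise ValueError("need at least one record to compute an SLI")
    fresh = sum(1 for ts in last_updated if now - ts <= threshold_seconds)
    return fresh / len(last_updated)


now = 1_000                          # current time in seconds (hypothetical)
last_updated = [995, 990, 950, 700]  # per-record update times; ages 5, 10, 50, 300

sli = freshness_sli(last_updated, now, threshold_seconds=60)
slo_target = 0.75                    # assumed objective: 75% of records fresh
meets_slo = sli >= slo_target

print(sli, meets_slo)  # 0.75 True
```

The same ratio works for a stream or a batch table. Only the threshold changes, for example seconds for a routing feature and a day for a reconciled report.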

What are the real costs of speed and accuracy?

Cost is a function of compute pattern, storage design, and operational overhead. Streaming spreads compute continuously to maintain low latency and state, which raises long-running costs but enables immediate value capture. Batch concentrates compute into scheduled spikes that can be cheaper to operate but may delay action. Industry practitioners note that while batch appears cheaper initially, streaming can deliver long-term value by reducing customer churn and operational waste when latency truly matters. Balanced portfolios work best. Leaders fund streaming where seconds matter and use batch everywhere else.¹⁰ ¹¹

How should you choose for a specific use case?

Start from the customer moment. Define the decision, the tolerable latency, and the reliability target. If a recommendation must adapt within five seconds to reflect a customer’s latest interaction, choose streaming and set an SLO for end-to-end freshness. If a board report must reconcile revenue perfectly every morning, choose batch and set SLOs for completeness and reproducibility. Avoid blanket mandates. Treat data latency like a product requirement. Where uncertainty remains, prototype both and measure the impact on experience and cost before scaling.⁸ ¹ ⁵
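Treating latency as a product requirement can be as simple as writing the requirement down and applying one rule to it. The sketch below is a hypothetical helper, not a formal method: `UseCase`, `recommend`, and the 60-second default threshold are assumptions, with the threshold borrowed from the "under one minute" guidance in the FAQ.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    tolerable_latency_seconds: float


def recommend(use_case, streaming_threshold_seconds=60):
    """Return (processing mode, SLO focus) for a use case."""
    if use_case.tolerable_latency_seconds < streaming_threshold_seconds:
        return "streaming", "end-to-end freshness"
    return "batch", "completeness and reproducibility"


recommendation = UseCase("in-session recommendation", tolerable_latency_seconds=5)
board_report = UseCase("morning revenue report", tolerable_latency_seconds=24 * 3600)

print(recommend(recommendation))  # ('streaming', 'end-to-end freshness')
print(recommend(board_report))    # ('batch', 'completeness and reproducibility')
```

A real inventory would add cost and regulatory columns, but even this single rule forces each use case to state its latency tolerance before anyone picks a technology.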

What mechanisms make streaming accurate enough?

Streaming achieves accuracy through four patterns. First, systems use event time to group events into windows that align to when customers actually acted, not when servers processed messages. Second, watermarks and late data handling protect aggregates from stragglers. Third, exactly-once semantics combine idempotent producers with transactional writes to prevent duplicates and gaps. Fourth, stateful operators maintain running aggregates across partitions so insights remain consistent as volume grows. Mature libraries document these capabilities and their operational caveats for production teams.³ ⁴
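The four patterns can be seen working together in a small, self-contained sketch. It is plain Python, not Flink or Kafka Streams code, and every name and number in it is hypothetical. In particular, the duplicate check by event id is a simplified stand-in for exactly-once behaviour and does not reproduce the transactional mechanism those libraries document.

```python
def aggregate_with_watermark(arrivals, window_seconds, allowed_lateness):
    """Count events per event-time window, tolerating late and duplicate arrivals.

    arrivals: (event_id, event_time) pairs in arrival order.
    Returns (closed_windows, open_windows, dropped_event_ids).
    """
    seen_ids = set()           # ids already processed, so redeliveries are ignored
    open_windows = {}          # window_start -> running count (operator state)
    closed_windows = {}        # finalised counts
    dropped = []               # events that arrived after their window closed
    watermark = float("-inf")  # no older event is expected past this point

    for event_id, event_time in arrivals:
        if event_id in seen_ids:
            continue  # duplicate delivery
        seen_ids.add(event_id)

        # Assign the event to a window by when it happened, not when it arrived.
        window_start = event_time - (event_time % window_seconds)
        if window_start + window_seconds <= watermark:
            dropped.append(event_id)  # too late: its window is already final
            continue

        open_windows[window_start] = open_windows.get(window_start, 0) + 1
        watermark = max(watermark, event_time - allowed_lateness)

        # Finalise every window that ends at or before the watermark.
        for start in sorted(open_windows):
            if start + window_seconds <= watermark:
                closed_windows[start] = open_windows.pop(start)

    return closed_windows, open_windows, dropped


arrivals = [
    ("e1", 10),
    ("e2", 65),
    ("e3", 50),  # out of order, but within the allowed lateness
    ("e2", 65),  # redelivered duplicate
    ("e4", 75),
    ("e5", 55),  # arrives after window [0, 60) has closed
]

closed, still_open, dropped = aggregate_with_watermark(
    arrivals, window_seconds=60, allowed_lateness=10
)
print(closed, still_open, dropped)  # {0: 2} {60: 2} ['e5']
```

The allowed lateness is the tuning knob: a larger value admits more stragglers but delays when a window's count becomes final.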

How do you manage lineage and governance across both modes?

Data leaders simplify lineage with layered zones and clear contracts. Batch pipelines often materialise bronze, silver, and gold layers to document transformations end to end. Streaming pipelines publish schema-managed topics, version schemas, and map stream lineage into the same catalog so consumers can trace fields back to sources. Teams reduce cognitive load by using one transformation framework across both modes where possible, or by enforcing consistent naming, SLIs, and review rituals across tools. Cloud guidance shows how to integrate streaming ETL with data lakes and analytics services without losing governance.⁵ ¹²
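As a rough illustration of layered zones with traceable lineage, the sketch below builds bronze, silver, and gold datasets in memory and records which dataset each one was derived from. The dataset names, fields, and the `trace` helper are hypothetical. A real platform would hold this metadata in a catalog rather than a dictionary.

```python
# Bronze: raw records exactly as received (hypothetical sample).
bronze = [
    {"id": "1", "channel": " Chat ", "csat": "4"},
    {"id": "2", "channel": "voice", "csat": "bad-value"},
    {"id": "3", "channel": "CHAT", "csat": "5"},
]


def to_silver(rows):
    """Standardise names and types; skip rows that fail validation."""
    silver_rows = []
    for row in rows:
        try:
            csat = int(row["csat"])
        except ValueError:
            continue
        channel = row["channel"].strip().lower()
        silver_rows.append({"id": row["id"], "channel": channel, "csat": csat})
    return silver_rows


def to_gold(rows):
    """Average CSAT per channel, ready for BI."""
    scores = {}
    for row in rows:
        scores.setdefault(row["channel"], []).append(row["csat"])
    return {channel: sum(v) / len(v) for channel, v in scores.items()}


silver = to_silver(bronze)
gold = to_gold(silver)

# Lineage: each dataset points at the dataset it was derived from.
lineage = {
    "gold.avg_csat_by_channel": "silver.interactions",
    "silver.interactions": "bronze.raw_interactions",
}


def trace(dataset, lineage_map):
    """Walk lineage from a dataset back to its original source."""
    path = [dataset]
    while path[-1] in lineage_map:
        path.append(lineage_map[path[-1]])
    return path


print(gold)  # {'chat': 4.5}
print(trace("gold.avg_csat_by_channel", lineage))
```

A streaming topic can be registered in the same lineage map under its own name, which is one way to keep both modes traceable from a single place.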

How do you measure success and avoid common risks?

Success depends on measurable outcomes. For streaming, track end-to-end freshness, delivery latency, and correctness under failure. For batch, track job success rates, recomputation times, and dataset drift. The main risks are duplicating logic across Lambda layers, over-investing in real time where no customer benefit exists, and under-investing in lineage, which erodes trust. SLO thinking aligns engineering effort with business value by making trade-offs explicit and testable in production.⁸ ⁹

What should you implement next?

Leaders implement a portfolio. Use batch for daily reconciliations, regulatory outputs, quarterly CX analytics, and model training. Use streaming for in-session personalisation, contact centre routing, fraud detection, operational alerting, and real-time service dashboards. Standardise definitions, set SLIs for freshness and correctness, and fund the reliability you promise. Align the architecture to the customer moments that matter most. The result is a data platform that moves at two speeds without sacrificing trust.

FAQ

What is the difference between real-time data and batch data in CX?

Real-time data updates continuously with low latency to drive in-session actions, while batch data processes groups of records on a schedule to deliver complete and reproducible outputs for analytics and governance.¹ ⁵

Why would a contact centre choose streaming over batch?

A contact centre chooses streaming when decisions must adapt within seconds, such as next-best-action, fraud checks, or dynamic routing. Streaming keeps features fresh during the live interaction, which improves outcomes that customers notice.¹

Which architecture should we use: Lambda or Kappa?

Choose Lambda if you need separate speed and batch layers and value the flexibility of independent recomputation. Choose Kappa if you want one streaming-centric stack and can replay topics to backfill history. The better choice depends on team skills and reprocessing needs.⁶ ⁷

How do SLOs help manage data freshness and reliability?

SLOs formalise targets for freshness, latency, and completeness. A freshness SLI measures the proportion of valid data updated within a threshold, aligning engineering trade-offs to customer expectations.⁸ ⁹

What controls ensure streaming accuracy in production?

Event time semantics, watermarks for late data, stateful operators, and exactly-once processing combine to reduce duplicates, handle out-of-order events, and maintain consistent aggregates across failures.³ ⁴

Which use cases still favour batch processing?

Regulatory reporting, financial reconciliation, historical analytics, and daily gold-layer builds benefit from batch because jobs can recompute complete histories and deliver deterministic outputs.¹ ⁵

How should Customer Science clients phase adoption?

Start with a use case inventory. Fund streaming where latency under one minute changes customer outcomes. Standardise batch everywhere else. Define SLIs, set SLOs, and trace lineage across both modes to protect trust as you scale.⁸ ¹²

Sources

1. Databricks Documentation. “Batch vs. streaming data processing in Databricks.” 2025, Databricks docs. https://docs.databricks.com/aws/en/data-engineering/batch-vs-streaming
2. Google Cloud. “How cloud batch and stream data processing works.” 2020, Google Cloud Blog. https://cloud.google.com/blog/products/data-analytics/how-cloud-batch-and-stream-data-processing-works
3. Apache Flink. “Notions of Time: Event Time and Processing Time.” 2024, Flink 1.20 Docs. https://nightlies.apache.org/flink/flink-docs-release-1.20/docs/concepts/time/
4. Apache Kafka. “Kafka Streams Core Concepts and Exactly-Once Semantics.” 2024, Kafka 3.3+ Docs. https://kafka.apache.org/33/documentation/streams/core-concepts
5. AWS. “Working with streaming data on AWS.” 2024, AWS Whitepaper. https://docs.aws.amazon.com/whitepapers/latest/build-modern-data-streaming-analytics-architectures/working-with-streaming-data-on-aws.html
6. DZone. “What Is Lambda Architecture?” 2024, DZone. https://dzone.com/articles/lambda-architecture
7. Uber Engineering. “Designing a Production-Ready Kappa Architecture for Stream Processing.” 2020, Uber Engineering Blog. https://www.uber.com/en-AU/blog/kappa-architecture-data-stream-processing/
8. Google SRE. “The Art of SLOs – Participant Handbook.” 2023, Google SRE. https://sre.google/static/pdf/art-of-slos-handbook-a4.pdf
9. Google SRE. “Defining SLOs: Service Level Objectives.” 2016, O’Reilly SRE Book online. https://sre.google/sre-book/service-level-objectives/
10. Confluent. “Stream Processing vs. Batch Processing: What to Know.” 2022, Confluent Blog. https://www.confluent.io/blog/stream-processing-vs-batch-processing/
11. Snowplow. “Batch Processing vs. Stream Processing: What’s the Difference?” 2025, Snowplow Blog. https://snowplow.io/blog/batch-processing-vs-stream-processing/