What do leaders actually mean by “freshness” in a CX context?

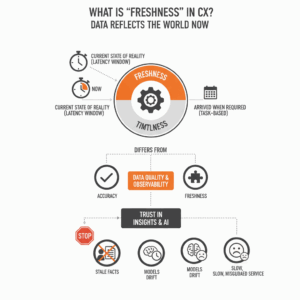

Executives often say “we need real-time data.” Teams then ship a dashboard that updates every hour and declare success. Leaders meant data that reflects the world now. Data freshness is the property that the data available to consumers matches the current state of reality within a defined latency window. Freshness differs from timeliness. Timeliness measures whether data arrived when a task required it. Freshness measures how current the data is relative to the present moment.¹ ³ ¹⁸ Freshness sits within data quality and data observability, alongside accuracy and completeness, and it underpins trust in insights and AI.³ ¹¹ When freshness fails, agents act on stale facts, models drift, and customers feel it in slow, misguided service.

Why do most “freshness SLAs” fail before they start?

Leaders write SLAs without precise service levels or measurable indicators. A Service Level Agreement is a promise to customers. It only works when backed by Service Level Objectives and Service Level Indicators that quantify performance. An SLO defines a target range for an SLI, such as “95 percent of batches land within 5 minutes of source creation.”² ¹⁰ Without SLIs, you cannot observe or enforce the promise. Teams also skip error budgets. Error budgets quantify how much unreliability a system can afford in a period. They create a control to balance new features with stability work when reliability drops.¹⁹ These omissions produce vague SLAs that no team can operate.
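A minimal Python sketch of how the example SLO above could be checked against an SLI. The names `SloReport` and `evaluate_freshness_slo` and the sample latencies are hypothetical; the 5-minute threshold and 95 percent target come from the example in the text, and the error budget is expressed here as a count of late batches, which is one possible convention.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class SloReport:
    attainment: float    # SLI: share of batches that landed within the threshold
    allowed_misses: int  # error budget, expressed as a count of late batches
    actual_misses: int
    slo_met: bool


def evaluate_freshness_slo(latencies_s, threshold_s=300.0, target=0.95):
    """Evaluate a 'target share of batches within threshold seconds' SLO."""
    if not latencies_s:
        raise ValueError("need at least one batch latency")
    total = len(latencies_s)
    misses = sum(1 for latency in latencies_s if latency > threshold_s)
    attainment = (total - misses) / total
    # The budget is the share of batches allowed to be late; the small
    # epsilon guards against floating-point error before flooring.
    allowed = math.floor(total * (1 - target) + 1e-9)
    return SloReport(attainment, allowed, misses, misses <= allowed)


# Hypothetical sample: 19 batches landed in one minute, one took 400 seconds.
report = evaluate_freshness_slo([60.0] * 19 + [400.0])
print(report.slo_met, report.allowed_misses - report.actual_misses)
```

With this sample the SLO is met, but the single late batch consumes the entire budget for the window, which is the signal an error budget policy would act on.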

Where does ambiguity creep into freshness definitions?

Ambiguity hides in three places. First, teams conflate ingestion latency with end-to-end availability. A warehouse table that ingests streams in one minute may still take thirty minutes to transform and publish to a BI model. Consumers measure freshness when the insight is consumable, not when the event landed.¹ ³ ¹⁸ Second, teams forget to anchor freshness to a business clock. A billing run that closes at 23:59 must post all payments by 00:15 to preserve trust. Third, definitions skip lineage. Data lineage tracks how data moves and changes from source to destination. Strong lineage clarifies which upstream feeds govern a consumer’s freshness.⁶ ¹⁴ ²² Without lineage, disputes over “whose SLA failed” never end.
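The gap between ingestion latency and end-to-end availability can be made concrete with a short Python sketch. The function name `stage_latencies` and the timestamps are hypothetical; the one-minute ingest and thirty-minute transform mirror the example in the text.

```python
from datetime import datetime, timezone


def stage_latencies(created_at, ingested_at, published_at):
    """Return (ingestion, transform_and_publish, end_to_end) latency in seconds."""
    for ts in (created_at, ingested_at, published_at):
        if ts.tzinfo is None:
            # Naive timestamps hide time zone mistakes, so reject them.
            raise ValueError("timestamps must be timezone-aware")
    ingestion = (ingested_at - created_at).total_seconds()
    publish = (published_at - ingested_at).total_seconds()
    return ingestion, publish, ingestion + publish


# Hypothetical event: ingested after one minute, published thirty minutes later.
created = datetime(2025, 1, 6, 9, 0, tzinfo=timezone.utc)
ingested = datetime(2025, 1, 6, 9, 1, tzinfo=timezone.utc)
published = datetime(2025, 1, 6, 9, 31, tzinfo=timezone.utc)

print(stage_latencies(created, ingested, published))  # (60.0, 1800.0, 1860.0)
```

A team that reports only the first number would claim one-minute freshness while the consumer waits thirty-one minutes.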

How do CX and contact center outcomes suffer when freshness slips?

Contact centers live in the stream of customer intent. The value of analytics decays as latency grows. Supervisors use real-time occupancy, queue depth, and sentiment to steer staffing and coaching. Stale views delay intervention and increase abandon rates. Real-time contact center analytics improve transparency and speed response, which reduces handle time and lifts customer satisfaction.²¹ Investment in AI-supported operations compounds the need for current data. AI in contact centers shifts training and decision support to live contexts, and leaders see faster agent proficiency and better outcomes when data is current.⁵ Freshness is not a vanity metric. It is a lever on experience and cost.

What are the most common mistakes with freshness SLAs?

Leaders repeat six mistakes. First, teams define SLAs as statements, not systems, and omit SLIs, SLOs, and error budgets.² ¹⁰ ¹⁹ Second, teams measure the wrong point in the pipeline and celebrate ingestion while consumers still wait.¹ ³ Third, definitions ignore lineage, so teams cannot trace breaks.⁶ ¹⁴ ²² Fourth, owners set a single global target and overlook domain variability. Fraud scoring needs seconds; revenue analytics may accept hours. Fifth, platform owners skip incident detection and alerting tuned to freshness. Data observability should detect lateness with policies tied to each asset.³ ¹¹ ¹⁸ Finally, teams publish SLAs without clear runbooks, escalation paths, or consumer communication protocols. CX leaders then learn about a miss from customers, which is too late.

How should you set a crisp, testable freshness SLO?

You start with a canonical SLI that any stakeholder can compute. Use end-to-end data latency measured at the consumer interface. Define it as the time between source event creation and availability in the consumer artifact. Then set an SLO like “99 percent of events become available in the agent desktop metric within 60 seconds, measured per hour.”² ¹⁰ For batch, use “95 percent of daily orders appear in the finance mart by 00:15 local.” Tie both to error budgets such as “no more than 30 minutes of SLO violation per week before a release freeze.”¹⁹ Document the clock, the time zone, the data window, and the consumer artifact. Publish a test that computes the SLI so everyone agrees on numbers.
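One way to publish such a test for the batch example is sketched below in Python. The function names `batch_deadline` and `on_time_share`, the business date, and the arrival times are hypothetical. The sketch uses a fixed UTC+10 offset to stay self-contained; a named zone from the standard library's `zoneinfo.ZoneInfo` would be the better choice where daylight saving time applies.

```python
from datetime import date, datetime, time, timedelta, timezone


def batch_deadline(business_date, tz, cutoff=time(0, 15)):
    """Deadline for a business date: the cutoff time on the following local day."""
    return datetime.combine(business_date + timedelta(days=1), cutoff, tzinfo=tz)


def on_time_share(available_at, deadline):
    """Share of records that were available in the consumer artifact by the deadline."""
    if not available_at:
        raise ValueError("need at least one availability timestamp")
    on_time = sum(1 for ts in available_at if ts <= deadline)
    return on_time / len(available_at)


# Hypothetical local clock at a fixed UTC+10 offset (no daylight saving handling).
local = timezone(timedelta(hours=10))
deadline = batch_deadline(date(2025, 3, 10), local)

# Hypothetical availability times recorded in UTC; aware datetimes compare
# correctly across offsets.
arrivals = [
    datetime(2025, 3, 10, 13, 0, tzinfo=timezone.utc),
    datetime(2025, 3, 10, 14, 0, tzinfo=timezone.utc),
    datetime(2025, 3, 10, 14, 15, tzinfo=timezone.utc),  # exactly on the deadline
    datetime(2025, 3, 10, 14, 20, tzinfo=timezone.utc),  # five minutes late
]
print(on_time_share(arrivals, deadline))  # 0.75, below a 95 percent target
```

Writing the clock, the cutoff, and the comparison into code removes the room for interpretation that a prose-only SLO leaves open.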

How do you design freshness SLAs that survive real pipelines?

Leaders design for the pipeline they have, not the diagram they prefer. Treat freshness as a product property with architectural backing. Streaming capture reduces source-to-lake latency, but you also need incremental processing and transactional data lakes to publish fast and safely. Technologies like transactional lakehouses support incremental upserts and snapshot isolation, which reduce both latency and data correctness risks.⁴ At scale, firms pair these storage patterns with data quality platforms that monitor and handle incidents across thousands of datasets.¹² You then align transformations, materializations, and serving layers with the SLO, so the end consumer sees the promised freshness.

Which data quality and lineage practices keep SLAs honest?

Data quality frameworks list timeliness, currency, and completeness as critical dimensions. These frameworks guide metric selection and present standard definitions.⁷ ¹⁶ ²³ When you define freshness, reference those dimensions and explain how you will measure them. Pair this with end-to-end lineage that shows sources, transformations, and dependencies. Clear lineage shortens incident triage, proves scope, and supports audits.⁶ ¹⁴ ²² Lineage also enables policy automation. You can attach a freshness policy to a node and propagate alerts to consumers who depend on it. Observability tools use these pillars to make teams the first to know when data is late, what broke, and how to fix it.¹¹ ¹⁸
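Propagating a lateness alert along lineage can be sketched as a graph walk. The Python example below is illustrative only: the edge list, the asset names, and the function `downstream_consumers` are hypothetical, and a real lineage tool would supply the graph through its own interface.

```python
from collections import deque


def downstream_consumers(edges, late_node):
    """Breadth-first walk over (upstream, downstream) edges.

    Returns every asset that depends, directly or transitively, on late_node,
    in the order it was discovered.
    """
    children = {}
    for upstream, downstream in edges:
        children.setdefault(upstream, []).append(downstream)

    seen = {late_node}
    order = []
    queue = deque([late_node])
    while queue:
        node = queue.popleft()
        for child in children.get(node, []):
            if child not in seen:
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


# Hypothetical lineage: two independent pipelines.
edges = [
    ("crm_events", "raw_contacts"),
    ("raw_contacts", "contact_facts"),
    ("contact_facts", "agent_desktop_metric"),
    ("contact_facts", "wfm_dashboard"),
    ("billing_feed", "finance_mart"),
]
print(downstream_consumers(edges, "raw_contacts"))
# ['contact_facts', 'agent_desktop_metric', 'wfm_dashboard']
```

The finance mart is absent from the result, so its owners are not alerted about a delay that cannot affect them.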

How do you monitor and alert without fatiguing teams?

Teams burn out when every table pages the same people. Start with asset criticality and consumer impact. Assign looser thresholds to exploratory marts and tighter ones to agent-facing metrics. Use multi-signal detection that combines lateness, volume drops, and schema drift to reduce false positives.²⁰ Use quiet hours and routing rules that page the on-call for critical domains and post to channels for others. Add synthetic freshness checks that read the consumer artifact and validate that the latest event appears. Publish a runbook for “late data” with known failure modes such as stuck schedulers, skewed partitions, or upstream API throttling. Close the loop by recording mean time to detect and mean time to recover for freshness incidents in an error budget report.¹⁹
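The routing idea can be sketched as a small decision function. Everything in this Python example is an assumption made for illustration: the `AssetSignals` fields, the 0.5 volume ratio, and the rule that a page requires a critical asset with at least two signals firing. Quiet hours are left out to keep the sketch short.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AssetSignals:
    name: str
    critical: bool                 # agent-facing or otherwise high impact
    minutes_late: float
    lateness_threshold_min: float  # tighter for critical assets, looser for exploratory marts
    volume_ratio: float            # latest row count divided by the trailing average
    schema_changed: bool


def route_alert(signals, min_volume_ratio=0.5):
    """Return 'page', 'channel', or 'none' for one asset."""
    fired = [
        signals.minutes_late > signals.lateness_threshold_min,
        signals.volume_ratio < min_volume_ratio,
        signals.schema_changed,
    ]
    count = sum(fired)
    if count == 0:
        return "none"
    # Page only when a critical asset has corroborating signals; a single
    # signal, or any signal on a non-critical asset, goes to a channel.
    if signals.critical and count >= 2:
        return "page"
    return "channel"


queue_depth = AssetSignals("agent_queue_depth", True, 10.0, 2.0, 0.3, False)
sandbox = AssetSignals("marketing_sandbox", False, 300.0, 240.0, 0.2, True)
print(route_alert(queue_depth), route_alert(sandbox))  # page channel
```

Requiring corroboration before paging trades a little detection speed for fewer false alarms; teams with very tight SLOs may prefer to page on lateness alone for their most critical assets.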

How do you align SLAs with business value across domains?

A single freshness target across marketing, finance, and service harms all three. Leaders should tie freshness to decision cadence and customer risk by domain. Fraud, routing, and proactive retention need seconds. Workforce management and revenue pacing need minutes. Executive reporting and external filings can accept hours with higher accuracy. Document these profiles and assign cost caps. Real-time everywhere is expensive and unnecessary. Real-time where action is immediate improves outcomes. Contact center use cases show how real-time analytics lift coordination and coaching when teams can see queues and performance instantly.²¹ Industry examples of real-time analytics reinforce the breadth of value when “fresh enough” meets decision cadence.¹³

What metrics prove that freshness investments paid off?

Executives need impact metrics, not just platform telemetry. Track SLO attainment, violation minutes, and error budget burn for freshness.² ¹⁹ Track CX metrics where freshness should move the needle: average speed of answer, abandonment rate, first contact resolution, and customer satisfaction. Link changes to incidents and releases. In data platforms, track incident counts, time to detect, and time to recover for lateness. Firms that invest in end-to-end quality systems report higher detection coverage and faster remediation across critical datasets, which protects downstream consumers.¹² Use these metrics to prioritize work and to defend operating budgets.
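The platform-side metrics can be derived from a simple incident log. The Python sketch below is illustrative: the `FreshnessIncident` record, the `summarize` function, and the sample incidents are hypothetical, the 30-minute weekly budget reuses the example figure from earlier in this article, and time to recover is measured from the start of the violation, which is one of several conventions in use.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class FreshnessIncident:
    started_at: datetime    # when data first breached the SLO
    detected_at: datetime   # when monitoring raised the alert
    recovered_at: datetime  # when the consumer artifact was fresh again


def summarize(incidents, weekly_budget_min=30.0):
    """Summarize one week of freshness incidents, with durations in minutes."""
    if not incidents:
        return {"incidents": 0, "violation_minutes": 0.0,
                "mttd_minutes": 0.0, "mttr_minutes": 0.0, "budget_burn": 0.0}
    n = len(incidents)
    detect_s = sum((i.detected_at - i.started_at).total_seconds() for i in incidents)
    recover_s = sum((i.recovered_at - i.started_at).total_seconds() for i in incidents)
    violation_min = recover_s / 60
    return {
        "incidents": n,
        "violation_minutes": violation_min,
        "mttd_minutes": detect_s / n / 60,
        "mttr_minutes": recover_s / n / 60,
        "budget_burn": violation_min / weekly_budget_min,  # 1.0 means fully spent
    }


week = [
    FreshnessIncident(datetime(2025, 3, 3, 9, 0), datetime(2025, 3, 3, 9, 4),
                      datetime(2025, 3, 3, 9, 20)),
    FreshnessIncident(datetime(2025, 3, 5, 14, 0), datetime(2025, 3, 5, 14, 2),
                      datetime(2025, 3, 5, 14, 10)),
]
print(summarize(week))
```

For this sample week the two incidents total 30 violation minutes, so the budget burn is 1.0 and the budget is exhausted.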

How do you avoid staleness in AI and analytics programs?

AI models age fast when fed stale data. Model monitoring should include drift and data recency checks. Freshness SLAs at the feature store or data product level keep features aligned with reality. Observability platforms explain “what broke” and “where,” so teams can fix the path rather than mask symptoms.¹¹ ¹⁸ For voice and chat analytics, leaders see better agent performance and faster proficiency when training and assistance run on current interaction data.⁵ Freshness is thus both a data quality requirement and a capability that sustains AI value in production.

What next steps create durable freshness SLAs in 90 days?

Leaders can ship a credible baseline in one quarter. Start by inventorying the top twenty consumer artifacts that drive CX, finance, and risk. Define end-to-end latency SLIs for each and set SLOs with error budgets.² ¹⁰ ¹⁹ Map lineage from sources to those artifacts and document owners.⁶ ¹⁴ ²² Instrument observability on those paths with lateness and volume checks.³ ¹¹ ¹⁸ Publish dashboards that show SLO attainment and error budget status. Run one game day per month to exercise failure modes and validate runbooks. Close with a business review that connects freshness to customer and cost outcomes. This discipline turns a slogan into a system that executives can steer.

FAQ

What is a data freshness SLA in customer experience programs?

A data freshness SLA is a formal commitment that defines how current data must be when presented to consumers. It is enforced through SLOs and SLIs that measure end-to-end latency from event creation to availability in the consumer artifact.² ¹⁰

How is freshness different from timeliness in data quality frameworks?

Freshness measures how closely data reflects the present moment, while timeliness measures whether data arrived when a task required it. Both are recognized quality dimensions, but freshness anchors to “now.”¹ ³ ⁷ ²³

Which entities and practices help avoid ambiguous freshness targets?

Use end-to-end SLIs, explicit time zones, and documented lineage from source to consumer. Lineage clarifies dependencies and accelerates triage when freshness slips.⁶ ¹⁴ ²²

Why do contact centers need stronger freshness SLAs than back-office analytics?

Contact centers act in real time. Supervisors and agents rely on current queue, sentiment, and performance data to steer staffing and coaching. Stale data delays action and hurts service metrics.²¹ ⁵

Which technologies improve both freshness and reliability at scale?

Transactional lakehouse patterns and incremental processing reduce latency while preserving correctness. Large firms pair these with data quality platforms to detect and handle incidents across critical datasets.⁴ ¹²

How do error budgets make freshness SLAs more credible?

Error budgets quantify acceptable unreliability over a period and trigger a shift from feature work to stability work when budgets are exhausted. This creates governance that keeps SLAs honest.¹⁹

Which sources should a C-level team cite when defining freshness policy?

Leaders should use established SRE guidance on SLOs and error budgets, data quality standards from DAMA and ISO, and reputable resources on data observability and lineage from industry and vendors.² ¹⁰ ⁷ ¹⁷ ¹¹ ⁶ ²²

Sources

- “What is data freshness? Definition, examples, and best practices,” Metaplane, 2025, Blog. https://www.metaplane.dev/blog/data-freshness-definition-examples
- “Chapter 2: Implementing SLOs,” Google SRE Workbook, 2023, Book site. https://sre.google/workbook/implementing-slos/
- “Data Freshness Explained: Making Data Consumers Wildly Happy,” Monte Carlo, 2023, Blog. https://www.montecarlodata.com/blog-data-freshness-explained/
- “Setting Uber’s Transactional Data Lake in Motion with Apache Hudi,” Uber Engineering, 2023, Engineering blog. https://www.uber.com/en-AU/blog/ubers-lakehouse-architecture/
- “The contact center crossroads: finding the right mix of humans and AI,” McKinsey & Company, 2025, Article. https://www.mckinsey.com/capabilities/operations/our-insights/the-contact-center-crossroads-finding-the-right-mix-of-humans-and-ai
- “What is data lineage?,” IBM Think, 2024, Topic page. https://www.ibm.com/think/topics/data-lineage
- “The Six Primary Dimensions for Data Quality Assessment,” DAMA UK Working Group, 2013, Paper (hosted by SBCTC). https://www.sbctc.edu/resources/documents/colleges-staff/commissions-councils/dgc/data-quality-deminsions.pdf
- “Data Management Body of Knowledge (DAMA-DMBOK2) Overview,” DAMA International, 2017, Guide excerpt. https://www.dama-dk.org/onewebmedia/DAMA%20DMBOK2_PDF.pdf
- “25 Use Cases and Examples of Real-Time Analytics,” CallMiner, 2023, Blog. https://callminer.com/blog/25-use-cases-and-examples-of-real-time-analytics
- “Service Level Objectives,” Google SRE Book, 2023, Book site. https://sre.google/sre-book/service-level-objectives/
- “What Is Data Observability? 5 Key Pillars To Know in 2025,” Monte Carlo, 2025, Blog. https://www.montecarlodata.com/blog-what-is-data-observability/
- “How Uber Achieves Operational Excellence in the Data Quality Space,” Uber Engineering, 2021, Engineering blog. https://www.uber.com/en-AU/blog/operational-excellence-data-quality/
- “3 Benefits of Real-Time Analytics in Your Contact Center,” Brightmetrics, 2023, Blog. https://brightmetrics.com/blog/real-time-analytics-in-your-contact-center/
- “What is Data Lineage?,” Hewlett Packard Enterprise, 2024, Topic page. https://www.hpe.com/au/en/what-is/data-lineage.html
- “Dimensions of Data Quality (DDQ),” DAMA Netherlands, 2020, Research paper. https://www.dama-nl.org/wp-content/uploads/2020/09/DDQ-Dimensions-of-Data-Quality-Research-Paper-version-1.2-d.d.-3-Sept-2020.pdf
- “The 6 Data Quality Dimensions with Examples,” Collibra, 2022, Blog. https://www.collibra.com/blog/the-6-dimensions-of-data-quality
- “ISO 8000-1:2022, Data quality — Part 1: Overview,” ISO, 2022, Standard. https://www.iso.org/obp/ui/es/
- “What Is Data Freshness in Data Observability?,” Sifflet, 2025, Blog. https://www.siffletdata.com/blog/data-freshness
- “Error Budget Policy,” Google SRE Workbook, 2023, Book site. https://sre.google/workbook/error-budget-policy/
- “End-to-End Data Pipeline Monitoring: Ensuring Accuracy and Latency,” Noel B., 2025, Medium article. https://medium.com/@noel.B/end-to-end-data-pipeline-monitoring-ensuring-accuracy-latency-f53794d0aa78
- “What is Data Lineage? Techniques, Use Cases, and More,” Alation, 2025, Blog. https://www.alation.com/blog/what-is-data-lineage/