A privacy impact assessment (PIA) is a critical control for AI projects that use personal or sensitive data. When conducted properly, a PIA reduces regulatory risk, strengthens trust, and enables responsible AI adoption. This guide explains how to conduct a PIA for AI projects in Australia, why standard PIAs are not enough for AI, and how agencies can operationalise privacy by design at scale.

What is a privacy impact assessment for AI?

A privacy impact assessment for AI is a structured process used to identify, assess, and mitigate privacy risks arising from AI systems. It examines how personal information is collected, used, shared, and protected across the AI lifecycle.

The core problem it addresses is scale. AI systems process data at volume and speed, often combining multiple datasets and generating inferred information. This magnifies privacy risk compared to traditional systems¹.

An AI-focused PIA extends beyond data collection. It considers training data, model behaviour, automation risk, explainability, and downstream use of outputs. This makes it essential for any AI system that influences decisions, recommendations, or service delivery.

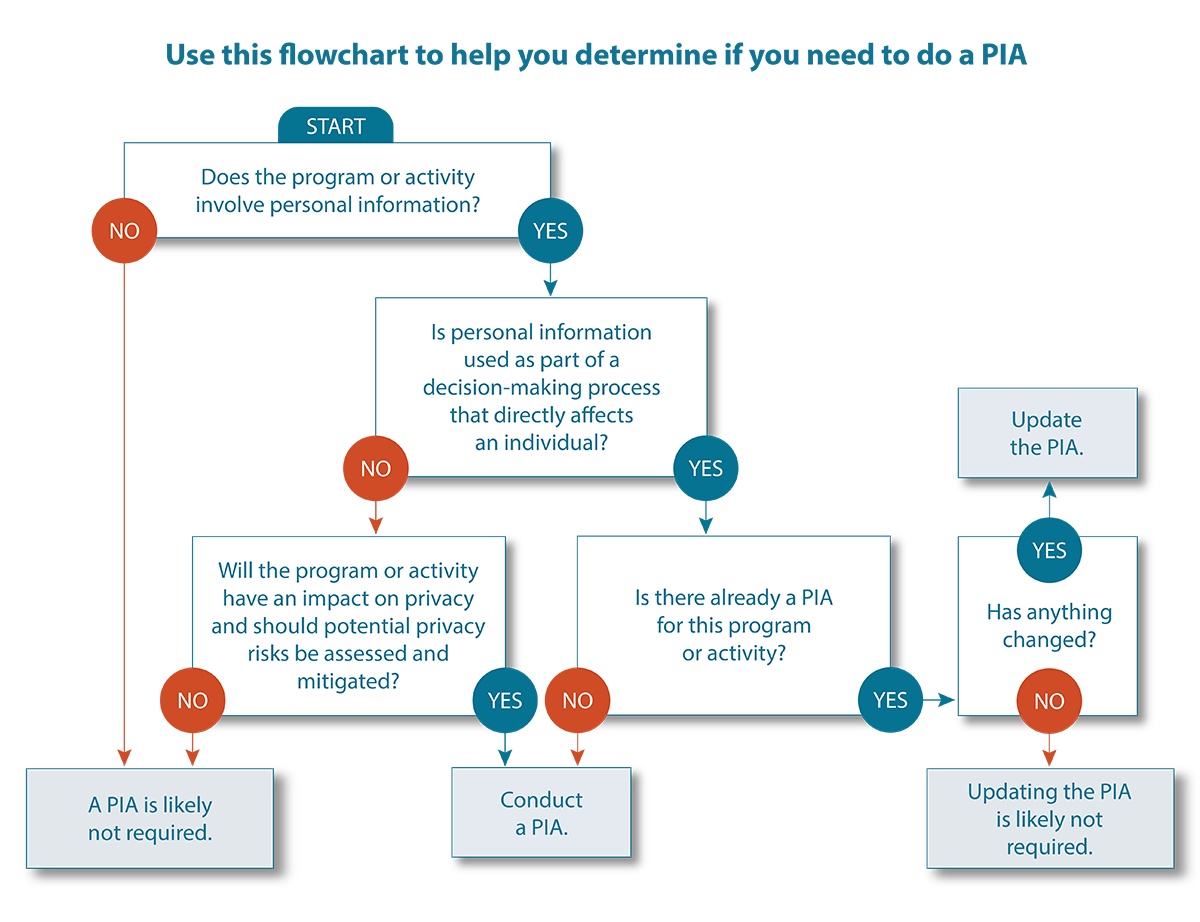

When is a PIA required for AI projects in Australia?

In Australia, PIAs are strongly expected for initiatives that involve new or changed handling of personal information. This expectation applies with particular force to AI projects due to their complexity and potential impact.

Regulatory guidance emphasises PIAs for high-risk initiatives, including automation, data matching, and advanced analytics. AI systems often meet several high-risk criteria at once².

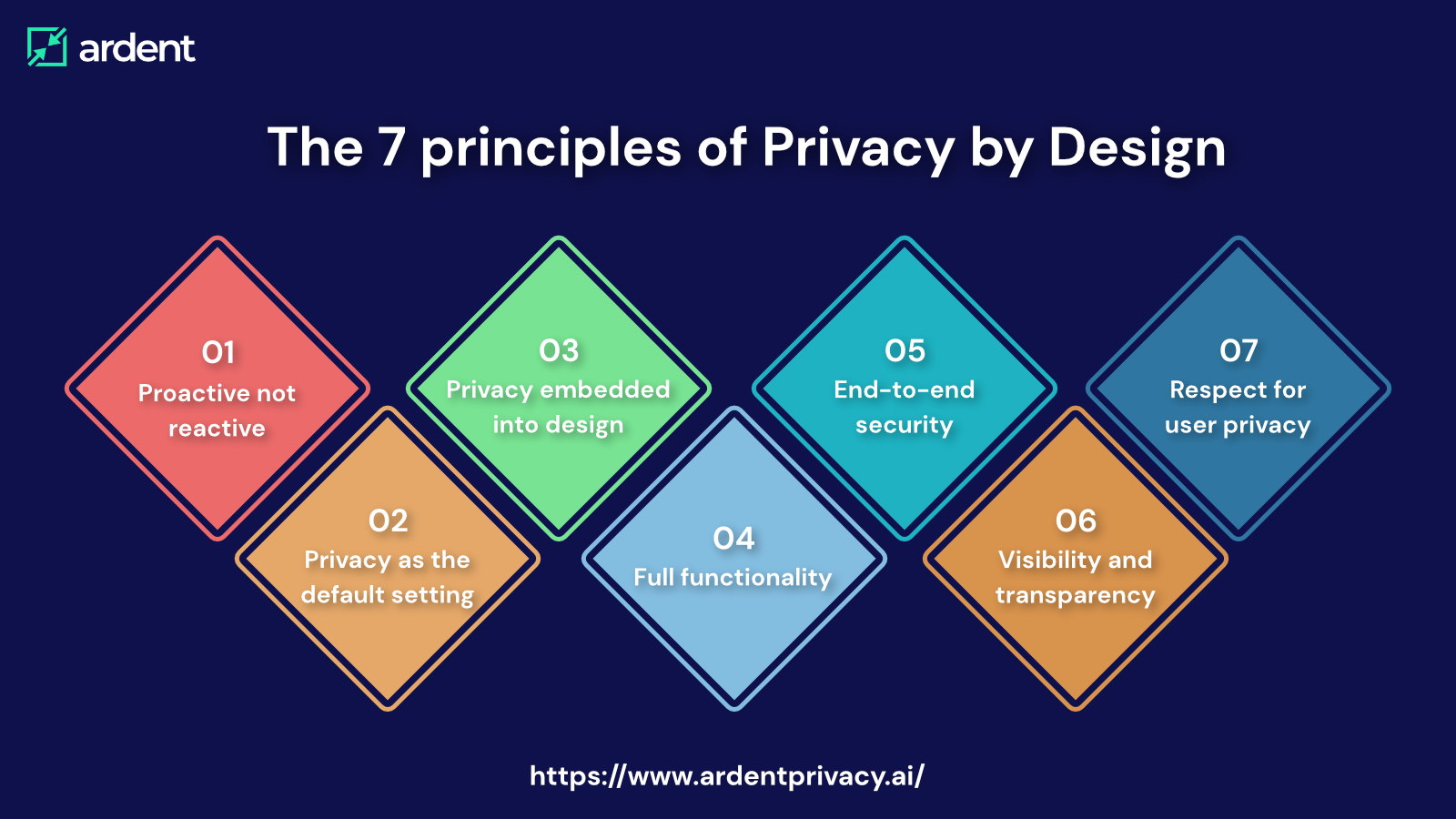

Agencies operating under Australian Government frameworks are expected to demonstrate privacy by design and proportionate risk management. A documented PIA provides this evidence.

Why are traditional PIAs insufficient for AI systems?

Traditional PIAs assume deterministic systems with predictable data flows. AI systems behave differently.

Machine learning models may generate new personal information through inference. Outputs may change over time as models are retrained. Decisions may be probabilistic rather than rule-based³.

Without adapting the PIA approach, agencies risk overlooking bias, secondary use, and explainability issues that directly affect privacy and fairness.

How does a privacy impact assessment for AI work in practice?

Step 1: Define the AI use case and scope

The assessment must clearly describe what the AI system does, what decisions it supports, and who is affected. Vague or overly technical descriptions undermine risk identification.

This includes defining whether the AI is advisory, decision-supporting, or fully automated, and whether human oversight exists.

Step 2: Map data flows and information sources

AI PIAs require detailed mapping of all data sources, including training data, live inputs, and generated outputs.

Information architecture is critical here. Without clear classification and ownership, privacy risk cannot be reliably assessed.
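One way to make the mapping auditable is to hold it as a structured inventory. The sketch below is illustrative only: the `DataFlow` fields, the stage names, and the example classification label are assumptions, not part of any official template. It lists flows that cannot yet be assessed because they lack a classification or an owner, and lifecycle stages that have not been mapped at all.

```python
from dataclasses import dataclass
from typing import Optional

# Lifecycle stages named in the text: training data, live inputs, generated outputs.
STAGES = {"training", "live_input", "generated_output"}


@dataclass
class DataFlow:
    name: str
    stage: str                      # one of STAGES
    contains_personal_info: bool
    classification: Optional[str]   # example label only, e.g. "OFFICIAL: Sensitive"
    owner: Optional[str]            # accountable business owner


def unassessable_flows(flows):
    """Return names of flows that lack a classification or an owner."""
    gaps = []
    for flow in flows:
        if flow.stage not in STAGES:
            raise ValueError(f"unknown stage: {flow.stage}")
        if not flow.classification or not flow.owner:
            gaps.append(flow.name)
    return gaps


def unmapped_stages(flows):
    """Return lifecycle stages with no recorded flow, in sorted order."""
    return sorted(STAGES - {flow.stage for flow in flows})


# Hypothetical inventory for illustration.
inventory = [
    DataFlow("case_history_extract", "training", True, "OFFICIAL: Sensitive", "Data Governance"),
    DataFlow("web_form_submission", "live_input", True, None, "Service Delivery"),
    DataFlow("eligibility_score", "generated_output", True, "OFFICIAL: Sensitive", None),
]

print(unassessable_flows(inventory))
print(unmapped_stages(inventory))
```

Treating generated outputs as flows in their own right keeps inferred information inside the scope of the assessment.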

Step 3: Identify AI-specific privacy risks

Risks include unintended inference, bias affecting protected groups, lack of transparency, and over-retention of training data⁴.

Each risk should be assessed for likelihood and impact, considering scale and reversibility.
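The likelihood and impact assessment can be recorded in a simple risk register. The following is a minimal sketch, assuming a 1 to 5 scale for both likelihood and impact, with a one-point impact uplift each for large scale and for irreversible harm. The scales, the uplift, and the rating thresholds are illustrative assumptions, not values taken from regulatory guidance.

```python
from dataclasses import dataclass


@dataclass
class PrivacyRisk:
    description: str
    likelihood: int        # 1 (rare) to 5 (almost certain), assumed scale
    impact: int            # 1 (minor) to 5 (severe), assumed scale
    large_scale: bool      # affects many individuals
    irreversible: bool     # harm cannot readily be undone

    def adjusted_impact(self) -> int:
        """Raise impact by one point each for scale and irreversibility, capped at 5."""
        uplift = int(self.large_scale) + int(self.irreversible)
        return min(5, self.impact + uplift)

    def score(self) -> int:
        """Likelihood multiplied by adjusted impact."""
        return self.likelihood * self.adjusted_impact()

    def rating(self) -> str:
        # Thresholds are illustrative only.
        s = self.score()
        if s >= 15:
            return "high"
        if s >= 8:
            return "medium"
        return "low"


# Hypothetical register entries based on the risk types listed in Step 3.
register = [
    PrivacyRisk("Unintended inference of health status", 3, 4, True, True),
    PrivacyRisk("Over-retention of training data", 4, 2, True, False),
    PrivacyRisk("Lack of transparency in recommendations", 2, 3, False, False),
]

# Print the register from highest to lowest score.
for risk in sorted(register, key=lambda r: r.score(), reverse=True):
    print(f"{risk.rating():<6} {risk.score():>2}  {risk.description}")
```

Folding scale and reversibility into impact is one design choice among several; an agency could equally record them as separate dimensions.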

Step 4: Define mitigation and governance controls

Mitigations may include data minimisation, de-identification, access controls, human review points, and model monitoring.

Information Management and Protection solutions support this by embedding controls into architecture, workflows, and lifecycle management.
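Two of the mitigations named above, data minimisation and de-identification, can be illustrated in a few lines. This is a sketch under stated assumptions: the field names, the `REQUIRED_FIELDS` set, and the demo key are hypothetical. Note that a keyed hash is pseudonymisation, which is weaker than full de-identification.

```python
import hashlib
import hmac

# Fields the model is assumed to need; everything else is dropped (data minimisation).
REQUIRED_FIELDS = {"age_band", "service_type", "postcode_region"}


def pseudonymise(identifier: str, key: bytes) -> str:
    """Replace a direct identifier with a keyed hash (HMAC-SHA256)."""
    return hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def prepare_record(record: dict, key: bytes) -> dict:
    """Keep only required fields and swap the customer ID for a pseudonym."""
    minimal = {k: v for k, v in record.items() if k in REQUIRED_FIELDS}
    minimal["subject_ref"] = pseudonymise(record["customer_id"], key)
    return minimal


# Hypothetical source record.
raw = {
    "customer_id": "C-1029",
    "full_name": "Example Person",
    "date_of_birth": "1980-01-01",
    "age_band": "40-49",
    "service_type": "rebate",
    "postcode_region": "NSW-metro",
}

# In practice the key would come from a secrets manager, not source code.
print(prepare_record(raw, key=b"demo-key-not-for-production"))
```

Pseudonymised records can still be re-identified when combined with other data, so a step like this reduces risk but does not remove the need for access controls and review.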

How do Australian PIA templates apply to AI?

Australian PIA templates provide a strong baseline but must be extended for AI. Key additions include:

- Explicit assessment of inferred data
- Bias and fairness considerations
- Explainability and transparency controls
- Ongoing monitoring and reassessment triggers
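
The last item, reassessment triggers, lends itself to automation. As a minimal sketch, assume the configuration covered by the last PIA is recorded as a small dictionary of data sources, model version, and use case (hypothetical field names). A delivery pipeline could then compare the current configuration with that record and report which elements have changed.

```python
def reassessment_triggers(assessed: dict, current: dict) -> list:
    """Return the sorted names of fields that differ from the last assessed state."""
    keys = set(assessed) | set(current)
    return sorted(k for k in keys if assessed.get(k) != current.get(k))


# Hypothetical record of what the last PIA covered.
assessed = {
    "data_sources": frozenset({"case_history", "web_forms"}),
    "model_version": "2024.1",
    "use_case": "triage recommendations (advisory)",
}

# Current deployment: a new dataset was added and the model was retrained.
current = {
    "data_sources": frozenset({"case_history", "web_forms", "call_transcripts"}),
    "model_version": "2024.2",
    "use_case": "triage recommendations (advisory)",
}

changed = reassessment_triggers(assessed, current)
if changed:
    print("PIA review required; changed:", ", ".join(changed))
```

A check like this only flags that a review is due; deciding whether the change is material remains a human judgement.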

CX Research and Design services help agencies test AI impacts with real users, ensuring privacy risks are understood in lived experience, not just theory.

What risks arise if AI PIAs are treated as a formality?

The most visible risk is regulatory non-compliance. However, the deeper risk is loss of trust.

AI systems that appear opaque or unfair attract complaints, appeals, and media scrutiny. Remediation after deployment is significantly more costly than prevention⁵.

There is also operational risk. Poorly governed AI generates inconsistent outcomes, increasing manual rework and staff uncertainty.

How should agencies measure PIA effectiveness for AI?

Effectiveness should be measured by outcomes, not documentation volume.

Indicators include reduced privacy incidents, lower complaint rates, and stable AI behaviour over time. Evidence of ongoing review is critical, as AI systems evolve.

CommScore AI can help identify emerging privacy concerns by analysing interaction data for signals of confusion, mistrust, or dispute related to AI-supported decisions.

What are the next steps for agencies running AI projects?

Agencies should embed PIA activity into AI delivery governance rather than treating it as a pre-approval hurdle.

CX Consulting and Professional Services can support development of AI-specific PIA frameworks, decision checkpoints, and assurance models.

Knowledge Quest then ensures that privacy obligations, decision logic, and escalation pathways are clearly communicated to frontline staff and citizens.

The objective is confident AI adoption that withstands scrutiny.

Evidentiary Layer

International research consistently highlights privacy impact assessments as a critical control for trustworthy AI. OECD guidance links early privacy risk identification with higher public acceptance of AI-enabled services⁶. Australian regulatory reviews similarly emphasise PIAs as a safeguard against systemic privacy failure in data-driven programs⁷.

FAQ

What is a privacy impact assessment for AI?

It is a structured assessment of privacy risks arising from AI systems and how those risks are mitigated.

Is a PIA mandatory for AI projects in Australia?

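Not always. Australian Government agencies must conduct a PIA for high privacy risk projects under the Privacy (Australian Government Agencies – Governance) APP Code 2017. For other organisations, a PIA is not always legislated, but regulators strongly expect one for high-risk AI initiatives.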

How does an AI PIA differ from a standard PIA?

It includes assessment of inference, bias, explainability, and ongoing model behaviour.

What PIA template is commonly used in Australia?

Standard government PIA templates provide a baseline but must be extended for AI risks.

What tools support AI privacy governance?

Knowledge Quest, Customer Science Insights, and CommScore AI support controlled information use and monitoring.

When should a PIA be updated for AI?

Whenever data sources, models, or use cases change.

Sources

1. ISO/IEC 42001, Artificial Intelligence Management Systems, 2023.
2. Office of the Australian Information Commissioner, Guide to PIAs, 2020.
3. OECD, AI and Privacy, 2021. https://doi.org/10.1787/1d9b4f5c-en
4. Australian Human Rights Commission, Human Rights and Technology, 2021.
5. Australian National Audit Office, Management of Data Risks, 2020.
6. OECD, Trustworthy Artificial Intelligence, 2019. https://doi.org/10.1787/5e5c1b8e-en
7. Office of the Australian Information Commissioner, Privacy by Design, 2021.