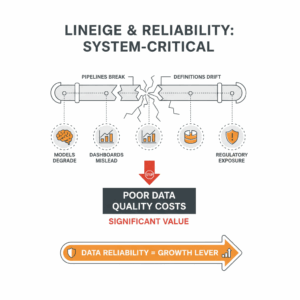

Why do analytics leaders treat lineage and reliability as system-critical?

Leaders treat lineage and reliability as system-critical because decisions, controls, and customer experiences depend on trustworthy data. When pipelines break or definitions drift, models degrade, dashboards mislead, and regulatory exposure increases. Poor data quality costs enterprises significant value each year, which turns data reliability from an IT concern into a board-level risk and growth lever.¹

What do “data lineage” and “data reliability” mean in practice?

Data lineage describes the complete journey of a data element from source to sink, including how that element was created, transformed, and accessed across systems. Lineage captures technical metadata such as jobs, queries, schemas, and run times, and it can include business context such as owners and definitions.² Data reliability means that data consistently meets defined expectations for accuracy, completeness, freshness, consistency, and integrity. Reliability shows up through service-level objectives for datasets, automated monitoring of quality rules, and documented controls that auditors can test.³

Where do most reliability failures originate in modern stacks?

Modern reliability failures originate at the seams between sources, transformations, and consumption layers. Cloud platforms scale quickly, but changing schemas and distributed ownership increase the chance of silent errors. Analysts often detect issues only after customers see broken reports. This gap persists because lineage is incomplete, quality checks are reactive, and change management does not propagate downstream impacts. Cloud-native data services provide lineage and audit features, yet teams underuse them without shared standards and playbooks.⁴ ⁵

How do controls and regulation raise the bar for lineage?

Regulatory frameworks require transparent data flows and reliable reporting. Large banks operate under the Basel Committee's BCBS 239 principles. These demand accurate risk data aggregation and timely risk reporting, which in turn depend on provable lineage and quality controls.⁶ Public companies must maintain effective internal control over financial reporting under Sarbanes–Oxley Section 404. In practice, that control relies on traceable data transformations and monitored access.⁷ Security control catalogs such as NIST SP 800-53 also emphasize integrity controls that detect unauthorized changes to data and code. This makes lineage and reliability part of assurance, not just analytics.⁸

How should we structure a practical reliability system for analytics?

Teams should structure a reliability system as a product with clear objectives, versioned standards, and automated enforcement. Define dataset-level service objectives for freshness and quality, then implement continuous monitoring. Trigger incident workflows when a monitor breaches its threshold, and close the loop with post-incident reviews. Connect lineage to every quality rule and every incident to show exactly which sources and consumers were affected. Cloud lineage features and open standards help standardize this approach across warehouses, lakes, and BI tools.² ⁴ ⁹
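The following is a minimal Python sketch of the link between lineage and incidents. When a check fails on one dataset, a breadth-first walk of the lineage graph lists every downstream dataset and consumer that should be notified. The dataset names, the `LINEAGE` dictionary, and the `open_incident` record shape are hypothetical. In practice, the graph would come from a lineage service rather than a hard-coded dictionary.

```python
from collections import deque

# Hypothetical lineage edges: each key feeds the datasets or consumers listed.
LINEAGE: dict[str, list[str]] = {
    "crm.raw_contacts": ["staging.contacts"],
    "billing.raw_invoices": ["staging.invoices"],
    "staging.contacts": ["marts.customer_revenue"],
    "staging.invoices": ["marts.customer_revenue"],
    "marts.customer_revenue": ["dashboard.revenue_exec", "ml.churn_features"],
}


def downstream_impact(graph: dict[str, list[str]], failed: str) -> list[str]:
    """Return every node reachable downstream of `failed`, in breadth-first order."""
    seen = {failed}
    queue = deque([failed])
    impacted: list[str] = []
    while queue:
        node = queue.popleft()
        for child in graph.get(node, []):
            if child not in seen:  # shared children (diamonds) are reported once
                seen.add(child)
                impacted.append(child)
                queue.append(child)
    return impacted


def open_incident(failed: str, check: str) -> dict[str, object]:
    """Build a minimal, hypothetical incident record listing affected nodes."""
    return {
        "dataset": failed,
        "failed_check": check,
        "impacted": downstream_impact(LINEAGE, failed),
    }


incident = open_incident("billing.raw_invoices", "freshness")
print(incident["impacted"])
# ['staging.invoices', 'marts.customer_revenue', 'dashboard.revenue_exec', 'ml.churn_features']
```

A freshness failure on `billing.raw_invoices` reaches the staging table, the revenue mart, and both of the mart's consumers. `crm.raw_contacts` stays out of scope because it does not sit downstream of the failure.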

What is a minimum viable lineage model that still scales?

Start with three layers of lineage. First, capture source-to-landing lineage that shows origins, owners, data classifications, and ingestion methods. Second, map transformation lineage for jobs, versioned code, dependencies, and data contracts that define schema and semantics. Third, expose consumption lineage for dashboards, machine learning features, and APIs. Add run-time metadata such as execution status, volumes, and durations for each layer. Use an open lineage standard where possible to reduce vendor lock-in and improve interoperability across tools.⁹
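The three layers can be sketched as a small data model. This is an illustrative assumption, not a standard schema. `Layer`, `Node`, `RunMetadata`, and `LineageModel` are hypothetical names. `untraced_consumers` is one example of a coverage check: it flags dashboards or APIs that have no path back to a registered source.

```python
from dataclasses import dataclass, field
from enum import Enum


class Layer(Enum):
    SOURCE = "source"                  # source-to-landing lineage
    TRANSFORMATION = "transformation"  # jobs, versioned code, contracts
    CONSUMPTION = "consumption"        # dashboards, ML features, APIs


@dataclass
class Node:
    name: str
    layer: Layer
    owner: str
    classification: str = "unclassified"  # hypothetical default label


@dataclass
class RunMetadata:
    status: str        # for example "succeeded" or "failed"
    row_count: int
    duration_s: float


@dataclass
class LineageModel:
    nodes: dict[str, Node] = field(default_factory=dict)
    edges: list[tuple[str, str]] = field(default_factory=list)  # (upstream, downstream)
    runs: dict[str, list[RunMetadata]] = field(default_factory=dict)

    def add_node(self, node: Node) -> None:
        self.nodes[node.name] = node

    def add_edge(self, upstream: str, downstream: str) -> None:
        if upstream not in self.nodes or downstream not in self.nodes:
            raise KeyError(f"unregistered node in edge {upstream} -> {downstream}")
        self.edges.append((upstream, downstream))

    def record_run(self, name: str, run: RunMetadata) -> None:
        self.runs.setdefault(name, []).append(run)

    def untraced_consumers(self) -> list[str]:
        """Consumption nodes with no upstream path to any source node."""
        parents: dict[str, list[str]] = {}
        for up, down in self.edges:
            parents.setdefault(down, []).append(up)

        def reaches_source(name: str, seen: set[str]) -> bool:
            if self.nodes[name].layer is Layer.SOURCE:
                return True
            seen.add(name)  # guards against cycles in a malformed graph
            return any(reaches_source(p, seen)
                       for p in parents.get(name, []) if p not in seen)

        return [n.name for n in self.nodes.values()
                if n.layer is Layer.CONSUMPTION and not reaches_source(n.name, set())]


model = LineageModel()
model.add_node(Node("crm.contacts", Layer.SOURCE, "crm-team", "pii"))
model.add_node(Node("staging.contacts", Layer.TRANSFORMATION, "data-platform"))
model.add_node(Node("dashboard.churn", Layer.CONSUMPTION, "cx-analytics"))
model.add_node(Node("api.churn_score", Layer.CONSUMPTION, "apps-team"))
model.add_edge("crm.contacts", "staging.contacts")
model.add_edge("staging.contacts", "dashboard.churn")
model.record_run("staging.contacts", RunMetadata("succeeded", 1200, 42.0))
print(model.untraced_consumers())  # ['api.churn_score']
```

The model keeps run metadata separate from the graph. This lets the structure change slowly while per-execution records accumulate alongside it.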

How do we define reliability through service objectives and SLAs?

Define reliability through explicit dataset service-level objectives. For example, set freshness SLOs that state data must be updated by specific times. Set completeness SLOs that target minimum row counts or null thresholds, and accuracy SLOs that compare values against trusted reference data. Express each SLO as a measurable target and track it with automated monitors. Report service-level indicators to a shared observability layer so leaders and auditors can review performance and exceptions. The concept mirrors site reliability engineering for software and makes reliability a measurable contract.³ ¹⁰
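This sketch shows how freshness and completeness SLOs might be evaluated. It assumes the observability layer can supply a last-update timestamp, a row count, and a null count for each dataset. `DatasetSLO`, `evaluate`, and `attainment` are hypothetical names, and the thresholds are placeholders. Accuracy checks against reference data would follow the same pattern.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class DatasetSLO:
    # Placeholder targets; real values come from the data product owner.
    max_staleness: timedelta  # freshness: newest data may be at most this old
    min_rows: int             # completeness: minimum expected row count
    max_null_rate: float      # completeness: tolerated share of nulls in a key column


def evaluate(slo: DatasetSLO, last_updated: datetime, row_count: int,
             null_count: int, now: datetime) -> dict[str, bool]:
    """Compute the SLIs for one observation and report which SLOs were met."""
    staleness = now - last_updated
    null_rate = null_count / row_count if row_count else 1.0  # empty table counts as all-null
    return {
        "freshness": staleness <= slo.max_staleness,
        "volume": row_count >= slo.min_rows,
        "null_rate": null_rate <= slo.max_null_rate,
    }


def attainment(results: list[dict[str, bool]], indicator: str) -> float:
    """Share of observations in which one SLO was met."""
    return sum(r[indicator] for r in results) / len(results)


slo = DatasetSLO(max_staleness=timedelta(hours=6), min_rows=1_000, max_null_rate=0.01)
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
on_time = evaluate(slo, now - timedelta(hours=2), 5_000, 10, now)
late = evaluate(slo, now - timedelta(hours=8), 500, 50, now)
print(attainment([on_time, late], "freshness"))  # 0.5
```

Each observation reports every SLO separately. This makes the breach attributable to a specific indicator when an incident workflow fires.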

Which monitoring controls create early, actionable signals?

Monitoring controls should include schema change detection, volume and distribution checks, primary key uniqueness, referential integrity, and business rule validations. Add freshness checks at each hop, not only at the warehouse boundary. Pair runtime checks with data contract enforcement at deployment time, including required fields, allowed value ranges, and version compatibility. Require owners to annotate changes with impact notes, then notify downstream subscribers through the lineage graph. These controls shorten mean time to detect and reduce customer-facing incidents.⁹ ¹⁰
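The runtime checks listed above can be sketched in plain Python over in-memory rows. This is a simplified illustration. Production checks usually run as SQL inside the warehouse or through an observability tool. All function names are hypothetical, and the three-standard-deviation volume threshold is an arbitrary placeholder.

```python
from statistics import mean, stdev
from typing import Any

Row = dict[str, Any]


def schema_changes(expected: dict[str, str], observed: dict[str, str]) -> list[str]:
    """Compare column -> type maps and describe removed, added, and retyped columns."""
    issues = [f"removed: {c}" for c in expected if c not in observed]
    issues += [f"added: {c}" for c in observed if c not in expected]
    issues += [f"type changed: {c} {expected[c]} -> {observed[c]}"
               for c in expected if c in observed and expected[c] != observed[c]]
    return issues


def volume_anomaly(history: list[int], today: int, z: float = 3.0) -> bool:
    """Flag today's row count if it is more than z standard deviations from history."""
    mu, sigma = mean(history), stdev(history)  # stdev needs at least two points
    if sigma == 0:
        return today != mu
    return abs(today - mu) > z * sigma


def duplicate_keys(rows: list[Row], key: str) -> set[Any]:
    """Primary-key uniqueness: return key values that appear more than once."""
    seen: set[Any] = set()
    dups: set[Any] = set()
    for row in rows:
        if row[key] in seen:
            dups.add(row[key])
        seen.add(row[key])
    return dups


def orphaned_keys(child: list[Row], fk: str, parent: list[Row], pk: str) -> set[Any]:
    """Referential integrity: foreign-key values with no matching parent row."""
    parent_keys = {row[pk] for row in parent}
    return {row[fk] for row in child if row[fk] not in parent_keys}


def out_of_range(rows: list[Row], column: str, low: float, high: float) -> list[Row]:
    """Business rule: rows whose value falls outside an allowed range."""
    return [row for row in rows if not low <= row[column] <= high]


orders = [{"id": 1, "customer_id": 10, "amount": 25.0},
          {"id": 2, "customer_id": 99, "amount": -5.0},
          {"id": 2, "customer_id": 10, "amount": 40.0}]
customers = [{"id": 10}, {"id": 11}]
print(duplicate_keys(orders, "id"))                           # {2}
print(orphaned_keys(orders, "customer_id", customers, "id"))  # {99}
```

Each function returns the offending values rather than a bare pass or fail result. This gives the owner notified through the lineage graph something concrete to act on.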

How do we measure and improve data reliability performance?

Measure performance through incident rate, time to detect, time to resolve, SLO breach rate, and consumer trust scores. Track the percentage of enterprise-critical datasets with full lineage coverage and active monitors. Use post-incident reviews to add new checks, update contracts, and correct ownership gaps. Publish monthly reliability reports that highlight trends and remediation progress. The practice aligns analytics reliability with the operational rigor of software reliability, which improves predictability and reduces rework costs at scale.¹⁰
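Here is one way to compute these measures from incident and SLO records, as a sketch. The `Incident` fields and the `reliability_report` function are hypothetical. The code measures time to resolve from detection, although some teams measure it from the start of the incident instead. Consumer trust scores usually come from surveys, so they are left out.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Incident:
    started: datetime   # when bad data first landed
    detected: datetime  # when a monitor or a person flagged it
    resolved: datetime  # when consumers had correct data again


def mean_delta(deltas: list[timedelta]) -> timedelta:
    return sum(deltas, timedelta()) / len(deltas) if deltas else timedelta()


def reliability_report(incidents: list[Incident], slo_results: list[bool],
                       critical: set[str], fully_covered: set[str]) -> dict[str, object]:
    """Summarize one reporting period; time to resolve is measured from detection."""
    return {
        "incident_count": len(incidents),
        "mean_time_to_detect": mean_delta([i.detected - i.started for i in incidents]),
        "mean_time_to_resolve": mean_delta([i.resolved - i.detected for i in incidents]),
        "slo_breach_rate": slo_results.count(False) / len(slo_results) if slo_results else 0.0,
        # Share of critical datasets that have both full lineage and active monitors.
        "coverage_pct": 100 * len(critical & fully_covered) / len(critical) if critical else 0.0,
    }


t0 = datetime(2024, 3, 1, 8, 0)
incidents = [
    Incident(t0, t0 + timedelta(hours=1), t0 + timedelta(hours=3)),
    Incident(t0, t0 + timedelta(hours=3), t0 + timedelta(hours=4)),
]
report = reliability_report(incidents, [True, True, False, True],
                            critical={"revenue", "churn", "claims", "nps"},
                            fully_covered={"revenue", "churn"})
print(report["mean_time_to_detect"], report["coverage_pct"])  # 2:00:00 50.0
```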

What roles and operating model keep lineage living, not static?

Successful operating models assign clear responsibilities. Data product owners define SLOs and contracts. Platform teams provide lineage collection, quality services, and observability. Governance teams codify standards and ensure audit readiness. Risk and security functions test integrity and access controls. Business stakeholders sponsor the most critical datasets and agree on definitions. The operating model moves faster when leaders treat lineage and reliability as product capabilities with roadmaps, budgets, and outcomes, not as one-off documentation tasks.⁶ ⁷ ⁸

How do we compare tool categories and pick an integration strategy?

Teams should compare native cloud capabilities with independent lineage or observability tools. Cloud services offer integrated lineage for their ecosystems, which simplifies adoption for common patterns. Independent or open solutions improve cross-platform coverage, support hybrid stacks, and enable community-driven connectors. A practical strategy combines both. Adopt native lineage where it is strong, then standardize on an open lineage model or metadata lake to unify views. This structure avoids fragmentation and makes it easier to change vendors over time.² ⁴ ⁹

What risks increase when lineage or reliability are ad hoc?

Ad hoc lineage and reactive quality raise several risks. Unexplained metric shifts erode executive trust. Compliance findings expose gaps in traceability. Model drift goes unnoticed, producing biased or stale recommendations. Failed change management causes sudden schema breaks. Incidents cascade across dashboards and decision workflows. These risks compound as the data estate grows, which is why leaders formalize reliability with standards, objectives, and automation rather than after-the-fact documentation.⁶ ⁷ ⁸

How do we get started in 90 days without boiling the ocean?

Start with one business-critical decision flow, such as revenue, churn, or claims. Inventory the sources, transformations, and consumers. Define SLOs for the three to five datasets that make or break the decision. Instrument monitors and incident routing. Capture end-to-end lineage, including owners and classifications. Pilot an open lineage backbone to integrate multiple tools. Publish a reliability scorecard and run weekly operations reviews. This targeted approach creates visible wins, produces reusable patterns, and builds the case for scale.⁹ ¹⁰

What outcomes should executives expect from mature lineage and reliability?

Executives should expect faster incident resolution, higher trust in metrics, fewer audit findings, and clearer accountability. Teams recover engineering hours by preventing repetitive investigations and by automating evidence collection for audits. Product managers ship features faster because they rely on stable data interfaces. Customers receive more consistent experiences because operational analytics meets service objectives. Mature lineage and reliability unlock analytics as a dependable business capability, not a fragile set of pipelines.¹ ⁶ ⁷

FAQ

How do data lineage systems help audit readiness for SOX and risk reporting?

Lineage systems trace data from origin to report, showing each transformation, owner, and control. This traceability supports internal control testing and financial reporting obligations under Sarbanes–Oxley Section 404. It also aligns with BCBS 239 risk data aggregation expectations for regulated banks.⁶ ⁷
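This sketch shows how an upstream trace could produce audit evidence. Starting from a report, it walks the inputs back to the origin and records the owner and control at each hop. It then flags any hop that lacks either one. The `CATALOG` entries, the control descriptions, and both function names are hypothetical. They stand in for whatever a lineage catalog actually stores.

```python
from typing import Optional

# Hypothetical catalog: each node's inputs, accountable owner, and applied control.
CATALOG: dict[str, dict] = {
    "report.quarterly_revenue": {"inputs": ["marts.revenue"],
                                 "owner": "finance-reporting", "control": "management sign-off"},
    "marts.revenue": {"inputs": ["staging.invoices"],
                      "owner": "data-platform", "control": "reconciliation to ledger"},
    "staging.invoices": {"inputs": ["erp.invoices"],
                         "owner": "data-platform", "control": "row-count check"},
    "erp.invoices": {"inputs": [], "owner": "erp-team", "control": "access review"},
}

Hop = tuple[str, Optional[str], Optional[str]]


def audit_trail(catalog: dict[str, dict], target: str) -> list[Hop]:
    """Walk upstream from target (depth-first) and record owner and control per hop."""
    missing = {"inputs": [], "owner": None, "control": None}  # node absent from catalog
    trail: list[Hop] = []
    seen: set[str] = set()
    stack = [target]
    while stack:
        node = stack.pop()
        if node in seen:
            continue
        seen.add(node)
        entry = catalog.get(node, missing)
        trail.append((node, entry["owner"], entry["control"]))
        stack.extend(reversed(entry["inputs"]))  # visit inputs in declared order
    return trail


def control_gaps(trail: list[Hop]) -> list[str]:
    """Hops with no owner or no control: candidate audit findings."""
    return [node for node, owner, control in trail if not owner or not control]


for hop in audit_trail(CATALOG, "report.quarterly_revenue"):
    print(hop)
```

A node that the catalog does not know about still appears in the trail, with empty owner and control fields. This turns a traceability gap into an explicit finding rather than a silent omission.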

What reliability metrics should a Contact Centre or CX leader track?

Leaders should track freshness SLO achievement for interaction and CRM datasets, completeness and null rate thresholds, incident rate, time to detect, and time to resolve. Publishing these indicators builds trust in service reporting and customer journey analytics.³ ¹⁰

Which open standards simplify lineage across a multi-cloud stack?

OpenLineage provides a vendor-neutral model for capturing jobs, datasets, and runs, which unifies metadata from warehouses, orchestrators, and BI tools. This standard reduces integration effort and helps teams preserve portability.⁹
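Below is a minimal OpenLineage-style run event, built with the Python standard library only. The top-level fields follow the OpenLineage run event model: `eventType`, `eventTime`, `run.runId`, `job`, `inputs`, `outputs`, `producer`, and `schemaURL`. The namespaces, job name, and producer URI are hypothetical. The exact `schemaURL` version is also an assumption to check against the spec version in use. The OpenLineage project ships official clients, but this sketch avoids them so it does not depend on any client API.

```python
import json
import uuid
from datetime import datetime, timezone

# Assumption: confirm this URL against the OpenLineage spec version you target.
SCHEMA_URL = "https://openlineage.io/spec/2-0-2/OpenLineage.json#/$defs/RunEvent"
PRODUCER = "https://example.com/hypothetical-orchestrator"  # hypothetical producer URI


def run_event(event_type: str, job_ns: str, job_name: str, run_id: str,
              inputs: list[tuple[str, str]], outputs: list[tuple[str, str]]) -> dict:
    """Assemble a minimal OpenLineage-style RunEvent as a plain dictionary."""
    return {
        "eventType": event_type,  # for example START, COMPLETE, or FAIL
        "eventTime": datetime.now(timezone.utc).isoformat(),
        "run": {"runId": run_id},
        "job": {"namespace": job_ns, "name": job_name},
        "inputs": [{"namespace": ns, "name": n} for ns, n in inputs],
        "outputs": [{"namespace": ns, "name": n} for ns, n in outputs],
        "producer": PRODUCER,
        "schemaURL": SCHEMA_URL,
    }


run_id = str(uuid.uuid4())  # START and COMPLETE events for one run share this id
start = run_event("START", "hypothetical-orchestrator", "build_customer_revenue", run_id,
                  inputs=[("warehouse://analytics", "staging.invoices")],
                  outputs=[("warehouse://analytics", "marts.customer_revenue")])
payload = json.dumps(start)  # ready to send to a lineage backend
```

An orchestrator would typically emit a START event when a job begins. When the job ends, it emits a COMPLETE or FAIL event with the same `runId`. That shared identifier lets a lineage backend join runs, jobs, and datasets reported by different tools.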

Why should analytics adopt SRE-style SLOs and SLIs?

SRE practices turn reliability into measurable contracts. Dataset SLOs for freshness, completeness, and accuracy use indicators that teams can monitor and improve. This approach aligns analytics operations with proven software reliability methods.¹⁰

Which cloud-native features accelerate lineage capture today?

Platforms such as BigQuery and Microsoft Purview provide built-in lineage capture and exploration. These features harvest query histories and pipeline metadata to show end-to-end data movement and dependencies.² ⁴

How does data governance link to day-to-day operations?

Governance codifies definitions, ownership, and controls, while operations apply monitors, incidents, and change management. The connection comes alive when SLOs, lineage, and contracts make policies observable and auditable within daily workflows.⁶ ⁷ ⁸

What first step delivers value in 30 to 60 days?

Pick one critical metric, instrument lineage and SLOs for its core datasets, and automate quality checks at each hop. Publish a scorecard and review incidents weekly to drive rapid, visible improvements.³ ⁹ ¹⁰

Sources

1. “Seizing the Opportunity in Data Transformation,” McKinsey Analytics, McKinsey & Company, 2019. https://www.mckinsey.com/capabilities/quantumblack/our-insights/seizing-the-opportunity-in-data-transformation
2. “Data lineage overview,” Google Cloud BigQuery documentation, Google Cloud, 2023. https://cloud.google.com/bigquery/docs/data-lineage-intro
3. “Data Quality Assessment and Measurement,” ISO 8000 overview, International Organization for Standardization, 2021. https://www.iso.org/standard/81197.html
4. “What is data lineage in Microsoft Purview,” Microsoft Learn, 2024. https://learn.microsoft.com/azure/purview/concept-data-lineage
5. “Data contracts for reliable data pipelines,” Monte Carlo Data blog, 2022. https://www.montecarlodata.com/blog/data-contracts
6. “Principles for effective risk data aggregation and risk reporting (BCBS 239),” Bank for International Settlements, 2013. https://www.bis.org/publ/bcbs239.htm
7. “Sarbanes–Oxley Act Section 404: Management Assessment of Internal Controls,” U.S. Securities and Exchange Commission, 2002. https://www.sec.gov/spotlight/sarbanes-oxley.htm
8. “Security and Privacy Controls for Information Systems and Organizations, SP 800-53 Rev. 5,” NIST, 2020. https://csrc.nist.gov/publications/detail/sp/800-53/rev-5/final
9. “OpenLineage Specification,” OpenLineage Project, 2024. https://openlineage.io/spec/
10. “Service Level Objectives,” in Site Reliability Engineering: How Google Runs Production Systems, ed. Beyer, Jones, Petoff, and Murphy, Google, 2016. https://sre.google/sre-book/service-level-objectives/