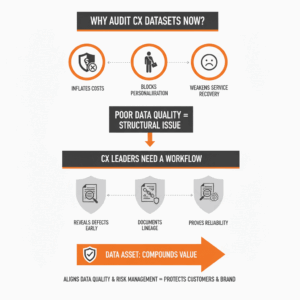

Why audit customer experience datasets now?

Executives face mounting risk when CX decisions rely on inconsistent, stale, or opaque data. Poor data quality inflates costs, blocks personalization, and weakens service recovery. Harvard Business Review reported IBM’s estimate that bad data costs the U.S. economy $3.1 trillion annually.¹ This scale signals a structural issue, not a tactical nuisance. CX leaders need a workflow that reveals defects early, documents lineage, and proves reliability. The goal is simple: build an audit method that any team can run, repeat, and scale across channels, journeys, and vendors without changing your core platforms. Strong audits convert data from a liability into an asset that compounds value with each interaction. Organizations that align data quality with risk management frameworks improve trust in AI and analytics outcomes, which protects both customers and the brand.² ³

What defines “good” CX data in enterprise environments?

CX data quality measures the fitness of customer data for its intended operational and analytical use. The ISO/IEC 25012 model defines fifteen characteristics across inherent and system-dependent dimensions, including accuracy, completeness, timeliness, and traceability.⁴ ⁵ This structure helps leaders set objective acceptance criteria for contact center transcripts, journey events, survey responses, knowledge articles, and CRM profiles. A clear definition prevents teams from chasing vanity metrics. It also anchors audits in shared language across compliance, security, and engineering. Strong programs add lineage, which is the recorded relationship between datasets, jobs, and runs as data flows through pipelines. Open standards for lineage improve interoperability across your cloud stack and tools, which shortens incident resolution times and accelerates model iteration.⁶ ⁷

How should leaders scope a CX data audit?

Leaders start with a single journey and a single question. Pick a high-value scenario such as “Why do first contact resolutions decline for billing inquiries?” Then constrain scope to the smallest set of systems that can answer it. Define entities up front. For example, “conversation,” “customer,” “case,” “agent,” and “resolution” each need stable identifiers. Decide time windows that align with operational rhythm, such as the last two quarters. Agree on unit tests and acceptance thresholds derived from ISO characteristics. Decide which lineage events must be captured for every run. Choose a reporting cadence that executives will read. Scoping with this discipline avoids analysis paralysis. It also improves change control because every new dataset must declare its purpose and quality targets before it enters the audit. Teams that adopt generative AI should align any CX data audit with the NIST AI Risk Management Framework to keep trust requirements explicit.² ⁹
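
One way to make the scope explicit is to write it down as a small, checkable declaration. The sketch below is illustrative only: the `AuditScope` class, its field names, the system names, and the threshold values are assumptions, not part of any standard or tool.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class AuditScope:
    """Hypothetical scope declaration for one journey and one question."""
    question: str
    journey: str
    systems: tuple[str, ...]
    entity_keys: dict[str, str]     # entity name -> stable identifier field
    window_start: date
    window_end: date
    thresholds: dict[str, float]    # quality characteristic -> minimum pass rate (0 to 1)

    def problems(self) -> list[str]:
        """Return reasons the scope is not ready to run; an empty list means ready."""
        issues = []
        if not self.systems:
            issues.append("no systems listed")
        if self.window_start >= self.window_end:
            issues.append("time window is empty or reversed")
        for entity, key in self.entity_keys.items():
            if not key:
                issues.append(f"entity '{entity}' has no stable identifier")
        for name, minimum in self.thresholds.items():
            if not 0.0 <= minimum <= 1.0:
                issues.append(f"threshold for '{name}' must be between 0 and 1")
        return issues


# Example values are made up for illustration.
scope = AuditScope(
    question="Why do first contact resolutions decline for billing inquiries?",
    journey="billing_inquiry",
    systems=("crm", "contact_center"),
    entity_keys={
        "conversation": "conversation_id",
        "customer": "customer_id",
        "case": "case_id",
        "agent": "agent_id",
        "resolution": "",  # deliberately missing to show a finding
    },
    window_start=date(2024, 1, 1),
    window_end=date(2024, 6, 30),
    thresholds={"completeness": 0.98, "timeliness": 0.95},
)
print(scope.problems())  # prints ["entity 'resolution' has no stable identifier"]
```

A declaration like this can double as the change-control gate: a new dataset enters the audit only once its scope reports no problems.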

Step 1: Inventory CX data sources and owners

Teams map every dataset that participates in the scoped journey. Capture system, table, and field names. Record business owners and technical stewards. Note PII sensitivity, retention rules, and consent flags. Flag vendor exports and flat files that bypass standard pipelines because these often hide the worst defects. Keep this inventory living in a catalog and tie it to your control framework. Use a simple checklist. Can we identify a single system of record for each entity? Can we describe the intended use? Can we state the required quality thresholds? This step prevents later disputes about which source is authoritative. It also frames downstream controls such as encryption, masking, and deletion. Catalog entries should reference lineage identifiers to connect owners to pipeline health and change history.⁶
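
The checklist above can be run mechanically against the inventory. The following is a minimal sketch, assuming a hypothetical `CatalogEntry` record; real catalogs will have their own schema, and the dataset names and owners here are invented.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class CatalogEntry:
    """Hypothetical inventory record; field names are illustrative."""
    dataset: str
    entity: str
    system: str
    is_system_of_record: bool
    business_owner: Optional[str] = None
    technical_steward: Optional[str] = None
    intended_use: Optional[str] = None
    quality_thresholds: dict = field(default_factory=dict)
    contains_pii: bool = False
    bypasses_pipeline: bool = False


def checklist_gaps(entries: list[CatalogEntry]) -> list[str]:
    """Apply the three checklist questions, plus owner and bypass checks."""
    gaps = []
    by_entity: dict[str, list[CatalogEntry]] = {}
    for entry in entries:
        by_entity.setdefault(entry.entity, []).append(entry)
    # Question 1: exactly one system of record per entity.
    for entity, group in by_entity.items():
        records = [e for e in group if e.is_system_of_record]
        if len(records) != 1:
            gaps.append(f"{entity}: expected 1 system of record, found {len(records)}")
    for entry in entries:
        if not entry.intended_use:  # Question 2
            gaps.append(f"{entry.dataset}: intended use not described")
        if not entry.quality_thresholds:  # Question 3
            gaps.append(f"{entry.dataset}: quality thresholds not stated")
        if not (entry.business_owner and entry.technical_steward):
            gaps.append(f"{entry.dataset}: owner or steward missing")
        if entry.bypasses_pipeline:
            gaps.append(f"{entry.dataset}: bypasses standard pipelines")
    return gaps


entries = [
    CatalogEntry("crm.customers", "customer", "crm", True,
                 business_owner="Head of Service",
                 technical_steward="CRM data engineer",
                 intended_use="identity resolution",
                 quality_thresholds={"completeness": 0.99},
                 contains_pii=True),
    CatalogEntry("vendor_export.customers_csv", "customer", "vendor_sftp", True,
                 bypasses_pipeline=True),
]
gaps = checklist_gaps(entries)
for gap in gaps:
    print(gap)
```

In this made-up inventory, the vendor export competes with the CRM as a system of record and has no declared use, thresholds, or owners, which is exactly the kind of dispute the inventory is meant to surface early.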

Step 2: Establish lineage for every job, dataset, and run

Engineering teams instrument pipelines to emit lineage events in a common format. Lineage should describe jobs, datasets, and runs with consistent identifiers and extensible facets for schema, column-level links, and ownership. A shared object model reduces tool lock-in and improves traceability across Airflow, Spark, dbt, and real-time integrations. With end-to-end lineage, auditors can answer who changed what, where the field originated, and which downstream models consume it. When an upstream change breaks a metric such as CSAT, lineage narrows the search space from weeks to hours. Standardization matters. Open specifications and their connectors reduce integration effort and support cross-platform governance.⁶ ⁷ ¹¹
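
To make the job, dataset, and run model concrete, the sketch below builds two run events as plain dictionaries shaped like OpenLineage run events. It does not use an OpenLineage client library. The producer URI, namespaces, and job and dataset names are hypothetical, and the spec version in `SCHEMA_URL` is an assumption that should be checked against the current specification. Facets are omitted for brevity.

```python
import json
import uuid
from datetime import datetime, timezone

PRODUCER = "https://example.com/cx-audit/pipelines"  # hypothetical producer URI
# Assumed spec version; verify against the OpenLineage specification in use.
SCHEMA_URL = "https://openlineage.io/spec/2-0-2/OpenLineage.json#/$defs/RunEvent"
EVENT_TYPES = {"START", "RUNNING", "COMPLETE", "ABORT", "FAIL", "OTHER"}


def dataset(namespace: str, name: str) -> dict:
    """A dataset is identified by a namespace and a name."""
    return {"namespace": namespace, "name": name}


def run_event(event_type: str, run_id: str, job_namespace: str, job_name: str,
              inputs: list, outputs: list) -> dict:
    """Build one run event linking a job, a run, and its datasets."""
    if event_type not in EVENT_TYPES:
        raise ValueError(f"unknown event type: {event_type}")
    return {
        "eventType": event_type,
        "eventTime": datetime.now(timezone.utc).isoformat(),
        "producer": PRODUCER,
        "schemaURL": SCHEMA_URL,
        "run": {"runId": run_id},
        "job": {"namespace": job_namespace, "name": job_name},
        "inputs": inputs,
        "outputs": outputs,
    }


# The same runId ties the START and COMPLETE events to one run of the job.
run_id = str(uuid.uuid4())
inputs = [dataset("warehouse", "raw.contact_center_cases")]
outputs = [dataset("warehouse", "analytics.fcr_daily")]
start = run_event("START", run_id, "cx_pipelines", "build_fcr_daily", inputs, outputs)
complete = run_event("COMPLETE", run_id, "cx_pipelines", "build_fcr_daily", inputs, outputs)
print(json.dumps(complete, indent=2))
```

Because every event names its inputs and outputs, a break in `analytics.fcr_daily` can be traced back to the jobs and upstream datasets that fed it.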

Step 3: Define quality rules that match real CX use

Data quality rules must reflect the decision they support. For contact center analytics, enforce permissible values for channel, queue, and disposition. For NPS or CSAT, validate numeric ranges and required timestamps. For identity, check referential integrity between customer, account, and case. Choose rules from the ISO characteristics and translate them into executable tests. Start with accuracy, completeness, timeliness, uniqueness, and consistency. Add traceability and credibility for regulated uses. Keep tests small, atomic, and readable. Each must output a clear pass or fail with measured severity. Publish thresholds by dataset and field so product teams and analysts know what is fit for purpose. This alignment keeps audits practical and prevents rule creep that generates noise instead of insight.⁴ ¹⁰
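
Small, atomic rules of this kind can be expressed in a few lines. The sketch below is one possible shape, not a prescribed framework: the `Rule` class, the allowed channel values, the 1 to 5 CSAT scale, and the thresholds are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    """One atomic test: a row-level check plus the threshold that defines 'pass'."""
    name: str
    characteristic: str   # quality characteristic the rule supports
    severity: str         # "critical" or "warning"
    threshold: float      # minimum share of rows that must pass (0 to 1)
    check: Callable[[dict], bool]


def evaluate(rule: Rule, rows: list[dict]) -> dict:
    """Return a clear pass or fail with the measured pass rate and severity."""
    passing = sum(1 for row in rows if rule.check(row))
    # An empty dataset is treated as a failure because there is no evidence.
    pass_rate = passing / len(rows) if rows else 0.0
    return {
        "rule": rule.name,
        "characteristic": rule.characteristic,
        "severity": rule.severity,
        "pass_rate": round(pass_rate, 4),
        "passed": pass_rate >= rule.threshold,
    }


ALLOWED_CHANNELS = {"voice", "chat", "email"}  # hypothetical permissible values

RULES = [
    Rule("channel_is_permitted", "accuracy", "critical", 1.0,
         lambda r: r.get("channel") in ALLOWED_CHANNELS),
    # Assumes a 1 to 5 CSAT scale; a missing score is left to a completeness rule.
    Rule("csat_in_range", "accuracy", "critical", 1.0,
         lambda r: r.get("csat") is None or 1 <= r["csat"] <= 5),
    Rule("responded_at_present", "completeness", "warning", 0.95,
         lambda r: bool(r.get("responded_at"))),
]

rows = [
    {"channel": "voice", "csat": 5, "responded_at": "2024-05-01T10:00:00Z"},
    {"channel": "chat", "csat": 4, "responded_at": "2024-05-01T11:00:00Z"},
    {"channel": "fax", "csat": 7, "responded_at": "2024-05-01T12:00:00Z"},
    {"channel": "email", "csat": None, "responded_at": "2024-05-02T09:30:00Z"},
]
results = [evaluate(rule, rows) for rule in RULES]
for result in results:
    print(result)
```

Keeping the threshold on the rule itself means the published thresholds and the executed tests cannot drift apart.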

Step 4: Automate validation and monitor in production

Quality checks must run where data flows. Place validations at ingress, transform, and publish stages. Block loads for critical failures such as missing primary keys or broken date formats. Defer noncritical issues for remediation but log and alert them. Monitor timeliness by measuring late-arriving data relative to SLAs. IBM analysis suggests that outdated training data can reduce revenue through inaccurate predictions, which underscores the need for freshness monitoring.¹² Treat every failure as a ticket with owner, severity, and due date. Trend failure rates and mean time to detect and resolve. Practical teams start with a small set of tests and expand coverage as defects decline. Monitoring lives in the same dashboard as lineage so engineers and analysts see a single source of truth for health.¹²
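
The block-or-defer decision and the timeliness measure can be sketched as two small functions. The function names, the result dictionary shape, and the one-hour SLA below are assumptions for illustration; a production pipeline would wire the same logic into its orchestrator and alerting.

```python
from datetime import datetime, timedelta


def late_share(arrivals: list[tuple[datetime, datetime]], sla: timedelta) -> float:
    """Share of records loaded later than the SLA allows.

    Each arrival is a pair of (event_time, loaded_time).
    """
    if not arrivals:
        return 0.0
    late = sum(1 for event_time, loaded_time in arrivals
               if loaded_time - event_time > sla)
    return late / len(arrivals)


def gate(results: list[dict]) -> str:
    """Decide what to do with a load given rule results.

    Returns "block" if any critical rule failed, "warn" if only noncritical
    rules failed (log, alert, and remediate later), otherwise "pass".
    """
    failed = [r for r in results if not r["passed"]]
    if any(r["severity"] == "critical" for r in failed):
        return "block"
    return "warn" if failed else "pass"


base = datetime(2024, 5, 1, 9, 0)
arrivals = [
    (base, base + timedelta(minutes=10)),
    (base, base + timedelta(minutes=45)),
    (base, base + timedelta(hours=3)),     # late against a one-hour SLA
    (base, base + timedelta(minutes=59)),
]
share = late_share(arrivals, sla=timedelta(hours=1))

results = [
    {"rule": "primary_key_present", "severity": "critical", "passed": True},
    {"rule": "late_share_within_sla", "severity": "warning", "passed": share <= 0.05},
]
print(share, gate(results))  # prints 0.25 warn
```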

Step 5: Reconcile metrics to business truth

Audits fail if metrics do not reconcile to financial or operational reality. Define canonical calculations for FCR, AHT, NPS, CSAT, deflection, and churn. Reproduce numbers in at least two independent paths, such as warehouse and BI cache. Investigate variance above a set tolerance. Use sampling to match customer-level events to case logs and billing records. Confirm that suppression logic for opted-out customers applies everywhere. Tie each KPI to lineage so changes trigger automatic impact analysis. Reconciliation provides confidence that KPIs used in incentives, budgets, and board packs reflect actual outcomes. It also reveals design defects in upstream systems that no amount of cleaning can fix. This step turns the audit from a technical exercise into a management discipline with clear accountability.
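
Reconciling two independent paths comes down to comparing each KPI and flagging variance above the agreed tolerance. The sketch below assumes the KPI values have already been computed in each path; the KPI figures and the 1% tolerance are invented for the example.

```python
def relative_variance(a: float, b: float) -> float:
    """Absolute difference relative to the larger magnitude of the two values."""
    largest = max(abs(a), abs(b))
    return 0.0 if largest == 0 else abs(a - b) / largest


def reconcile(path_a: dict, path_b: dict, tolerance: float) -> list[dict]:
    """Compare KPI values from two independent calculation paths."""
    findings = []
    for kpi in sorted(set(path_a) | set(path_b)):
        if kpi not in path_a or kpi not in path_b:
            # A KPI that exists in only one path cannot be reconciled.
            findings.append({"kpi": kpi, "status": "missing", "variance": None})
            continue
        variance = relative_variance(path_a[kpi], path_b[kpi])
        status = "ok" if variance <= tolerance else "investigate"
        findings.append({"kpi": kpi, "status": status, "variance": round(variance, 4)})
    return findings


# Hypothetical values from a warehouse query and a BI cache.
warehouse = {"FCR": 0.72, "CSAT": 4.31, "AHT_seconds": 412.0}
bi_cache = {"FCR": 0.70, "CSAT": 4.31}

findings = reconcile(warehouse, bi_cache, tolerance=0.01)
for finding in findings:
    print(finding)
```

In this example CSAT reconciles, FCR differs by more than the tolerance and needs investigation, and AHT is missing from one path entirely.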

Step 6: Document decisions and close the loop

Teams write a brief decision log after each audit cycle. Record what failed, what passed, and what changed. Capture root causes, such as ambiguous data definitions or missing consent fields. List remediations with owners and dates. Update the catalog, lineage records, and test suite to reflect new truth. Share findings with customer operations and legal so policy changes do not drift from data reality. Align any generative AI use with the NIST AI RMF functions of govern, map, measure, and manage. The outcome is a repeatable, evidence-based practice that scales across journeys, products, and regions.² ⁹ ¹³

How do you compare lineage and catalog options?

Leaders face a choice between proprietary platforms and open standards. Proprietary tools often speed initial deployment. Open standards protect flexibility and total cost of ownership by enabling cross-tool interoperability. An open lineage spec defines a stable object model with jobs, datasets, and runs, plus facets for enrichment. This approach reduces duplication of effort across teams and accelerates ecosystem adoption.⁶ ⁷ Many enterprises combine a commercial catalog with open lineage to balance governance and portability. The best choice is the one that your engineers will instrument and your analysts will use. Decision criteria should include connector coverage, ease of policy enforcement, and fit with existing security models. Clear criteria avoid vendor-led implementations that miss business intent.

What risks should CX leaders manage during audits?

Leaders must manage privacy and fairness risks alongside technical ones. Data audits touch consent, retention, and sensitive attributes. Apply privacy by design and minimize fields that do not contribute to the decision. Use role-based access and masking for analyst sandboxes. Add fairness checks for model-driven routing or offers. Tie these controls to the AI risk framework so the same controls appear in governance, model cards, and incident playbooks. NIST’s framework provides a shared vocabulary for validity, reliability, safety, security, and explainability.² ²¹ Treat risk as part of product management, not as an afterthought. Clear safeguards protect customers, reduce regulatory exposure, and preserve the license to operate AI-enabled CX.
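
Field minimization and masking for an analyst sandbox can be illustrated with a short sketch. It uses keyed hashing (HMAC-SHA256) as one possible pseudonymization technique; the article does not prescribe a method, and the field lists and secret below are placeholders. In practice the key would come from a secrets manager, and legal review decides which technique is adequate.

```python
import hashlib
import hmac

# Hypothetical policy: only these fields contribute to the decision.
ALLOWED_FIELDS = {"customer_id", "channel", "queue", "disposition", "csat"}
# Identifiers kept for joins but replaced with a keyed hash.
PSEUDONYMIZED_FIELDS = {"customer_id"}


def pseudonymize(value: str, secret: bytes) -> str:
    """Keyed hash: stable for joins, not reversible without the secret."""
    return hmac.new(secret, value.encode("utf-8"), hashlib.sha256).hexdigest()


def to_sandbox(record: dict, secret: bytes) -> dict:
    """Drop fields outside the allow-list and mask identifiers."""
    out = {}
    for name, value in record.items():
        if name not in ALLOWED_FIELDS:
            continue  # minimization: the field never reaches the sandbox
        if name in PSEUDONYMIZED_FIELDS and value is not None:
            value = pseudonymize(str(value), secret)
        out[name] = value
    return out


secret = b"replace-with-a-managed-secret"  # placeholder, not a real key
record = {
    "customer_id": "C-1001",
    "email": "pat@example.com",
    "channel": "chat",
    "queue": "billing",
    "disposition": "resolved",
    "csat": 4,
}
masked = to_sandbox(record, secret)
print(sorted(masked))  # prints ['channel', 'csat', 'customer_id', 'disposition', 'queue']
```

Because the hash is deterministic for a given secret, analysts can still join records for the same customer without seeing the original identifier.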

How do you measure impact from a data audit?

Executives need proof. Track reductions in defect rates, time to detect, and time to resolve. Track increases in on-time data delivery and test coverage. Link improved data freshness to uplift in predictive performance and revenue. IBM reports that reliance on outdated data can lead to measurable revenue loss, which creates a clear economic case for investment.¹² Tie audit outcomes to CX metrics such as FCR, CSAT, and churn so business sponsors see value. Share a quarterly scorecard that lists datasets by health, ownership, and risk posture. Measure adoption of lineage coverage across critical pipelines. Publish wins such as faster root cause analysis and fewer false escalations. Over time, the audit becomes a habit that sustains service transformation.
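
Time to detect and time to resolve can be derived directly from the failure tickets. The sketch below assumes a hypothetical `Ticket` record with three timestamps; the dataset names and times are invented.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean


@dataclass(frozen=True)
class Ticket:
    """Hypothetical data quality ticket with the timestamps needed for trending."""
    dataset: str
    occurred_at: datetime
    detected_at: datetime
    resolved_at: datetime


def hours_between(start: datetime, end: datetime) -> float:
    return (end - start).total_seconds() / 3600


def scorecard(tickets: list[Ticket]) -> dict:
    """Mean time to detect and mean time to resolve, in hours."""
    if not tickets:
        return {"tickets": 0, "mean_hours_to_detect": None, "mean_hours_to_resolve": None}
    return {
        "tickets": len(tickets),
        "mean_hours_to_detect": mean(
            hours_between(t.occurred_at, t.detected_at) for t in tickets),
        "mean_hours_to_resolve": mean(
            hours_between(t.detected_at, t.resolved_at) for t in tickets),
    }


tickets = [
    Ticket("analytics.fcr_daily",
           datetime(2024, 5, 1, 8, 0), datetime(2024, 5, 1, 10, 0),
           datetime(2024, 5, 1, 20, 0)),
    Ticket("crm.customers",
           datetime(2024, 5, 3, 6, 0), datetime(2024, 5, 3, 12, 0),
           datetime(2024, 5, 4, 8, 0)),
]
card = scorecard(tickets)
print(card)
```

Computing the same figures each quarter gives the scorecard a trend line rather than a single snapshot.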

Which practical playbook should teams follow next?

Start with a 12-week sprint. In weeks 1 to 2, scope the journey, define entities, and inventory sources. In weeks 3 to 4, implement lineage events across two critical pipelines. In weeks 5 to 6, codify ten priority data quality tests and enable alerting. In weeks 7 to 8, reconcile two KPIs to business truth. In weeks 9 to 10, run a full audit cycle, capture decisions, and publish a scorecard. In weeks 11 to 12, expand coverage and retire duplicate sources. This cadence builds momentum while containing risk. It also gives executives regular proof that the customer data foundation is strengthening. Tie the sprint to the NIST AI RMF functions so your CX audit and AI governance evolve together rather than in parallel.² ⁹

FAQ

What is a CX data audit in practical terms?

A CX data audit is a repeatable process that inventories datasets, establishes lineage for jobs, datasets, and runs, codifies ISO-aligned quality rules, automates validation in production, reconciles metrics to business truth, and documents decisions.⁴ ⁶

Why should enterprise leaders use ISO/IEC 25012 for CX data quality?

ISO/IEC 25012 provides a standard model with fifteen quality characteristics that translate directly into executable rules for accuracy, completeness, timeliness, and traceability, which stabilizes definitions across teams and systems.⁴ ⁵

Which lineage standard supports cross-platform CX pipelines?

OpenLineage defines a common object model for jobs, datasets, and runs with extensible facets, enabling consistent lineage across tools like Airflow, Spark, and dbt to improve traceability and incident resolution.⁶ ⁷ ¹¹

How does the NIST AI Risk Management Framework relate to CX audits?

NIST AI RMF offers a shared vocabulary and functions to govern, map, measure, and manage AI risks. Aligning audits to this framework strengthens trust, reliability, and compliance for analytics and AI-driven CX decisions.² ⁹

Which metrics prove that audits create value for CX?

Track defect rates, on-time data delivery, test coverage, and lineage coverage. Connect improvements to CX outcomes such as FCR, CSAT, and churn. IBM analysis links outdated data to measurable revenue loss, which underscores the business case.¹²

Who should own the CX data audit?

Business owners and technical stewards should co-own the audit. Owners define intent and thresholds. Stewards implement lineage, tests, and monitoring. Both groups should use a shared catalog and decision log tied to risk frameworks.² ⁴ ⁶

Which steps deliver impact in the first 60 days?

Scope one journey and one question, implement lineage on two pipelines, codify ten priority tests, reconcile two KPIs, then run a full audit cycle with a published scorecard. Align each step to NIST AI RMF functions to maintain trust.² ⁹

Sources

1. “Bad Data Costs the U.S. $3 Trillion Per Year,” Thomas C. Redman, 2016, Harvard Business Review. https://hbr.org/2016/09/bad-data-costs-the-u-s-3-trillion-per-year
2. “AI Risk Management Framework,” Elham Tabassi et al., 2023, NIST. https://www.nist.gov/itl/ai-risk-management-framework
3. “Artificial Intelligence Risk Management Framework (AI RMF 1.0),” NIST, 2023, NIST Publication NIST AI 100-1. https://nvlpubs.nist.gov/nistpubs/ai/nist.ai.100-1.pdf
4. “ISO/IEC 25012:2008 Data quality model,” ISO, 2008, International Organization for Standardization. https://www.iso.org/standard/35736.html
5. “ISO/IEC 25012,” 2023, ISO 25000 Portal. https://iso25000.com/index.php/en/iso-25000-standards/iso-25012
6. “About OpenLineage,” OpenLineage Project, 2025, OpenLineage Documentation. https://openlineage.io/docs/
7. “Why an Open Standard for Lineage Metadata?,” OpenLineage Project, 2023, OpenLineage Blog. https://openlineage.io/blog/why-open-standard/
8. “Data Quality Certification using ISO/IEC 25012,” F. Gualo et al., 2021, arXiv. https://arxiv.org/pdf/2102.11527
9. “Artificial Intelligence Risk Management Framework: Generative AI Profile,” NIST, 2024, NIST AI 600-1. https://nvlpubs.nist.gov/nistpubs/ai/NIST.AI.600-1.pdf
10. “Data quality certification using ISO/IEC 25012: Industrial experiences,” F. Gualo et al., 2021, Journal of Systems and Software. https://www.sciencedirect.com/science/article/abs/pii/S0164121221000352
11. “OpenLineage | OpenMetadata Data Lineage Pipeline,” OpenMetadata Docs, 2025. https://docs.open-metadata.org/latest/connectors/pipeline/openlineage
12. “The real cost of delayed data in an always-on world,” IBM, 2024, IBM Think Insights. https://www.ibm.com/think/insights/delayed-data-cost
13. “NIST Issues New Artificial Intelligence Risk Management Framework,” Association of Corporate Counsel, 2023, ACC Guidance. https://www.acc.com/sites/default/files/resources/upload/NIST%20Issues%20New%20Artificial%20Intelligence%20Risk%20Management%20Framework.pdf