Why do leaders need a stepwise survey instrumentation plan now?

Executives face noisy signals and rising customer expectations. Teams feel pressure to act, but fragmented surveys, unclear measures, and weak governance produce unreliable data that erodes confidence. A stepwise survey instrumentation plan gives leaders a shared blueprint for how Voice of Customer (VoC) systems collect, structure, and feed insights into decisions. Survey instrumentation defines what the program measures, how it captures responses, and how it ensures quality throughout the lifecycle. The plan connects research standards to operational realities, so findings carry weight with finance, operations, and product. Leaders who codify instrumentation reduce total survey error, which is the combined effect of sampling, nonresponse, coverage, and measurement errors that distort results. Survey methodology research formalized this concept and offers practical ways to design for accuracy.¹ Leaders who anchor to recognized standards raise trust in decisions.

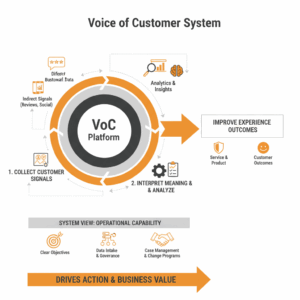

What is “survey instrumentation” in a VoC system?

Survey instrumentation is the design, governance, and operational framework that turns a question into reliable, decision-grade data. The framework has five elements. First, a measurement model defines outcomes, drivers, and question constructs. Second, a sampling frame details who is in scope and how to reach them across channels. Third, a mode strategy sets when to use web, SMS, IVR, email, or assisted interviews. Fourth, a quality framework sets standards for consent, privacy, transparency, and fieldwork controls. Fifth, an integration layer maps identifiers, metadata, and response payloads into analytics pipelines and operational systems. Established survey texts outline how these pieces work together across modes and why mode effects matter.² The Tailored Design Method adds practical guidance on respondent communication, incentives, and follow-up to raise cooperation without biasing responses.³

Where should a program start to define measurement with clarity?

Teams start by naming the decisions the survey must inform. The measurement model then maps each decision to constructs and indicators. For relationship health, many firms track advocacy with a likelihood-to-recommend item, which can predict growth when it is linked to behaviors and economics. The Net Promoter Score popularized a single-metric view of advocacy, but it remains one input, not a strategy.⁴ Relationship surveys should pair advocacy with trust, effort, and satisfaction constructs, then link each construct to operational drivers such as wait time, first contact resolution, and product reliability. Experience surveys should focus on a specific journey step with tightly scoped diagnostics. Leaders write each item with unambiguous language, consistent scales, and a clear recall window. Survey methodology guidance recommends keeping scales consistent across waves to support trend integrity and comparability.²
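As a small illustration of the advocacy indicator mentioned above, the sketch below computes a Net Promoter Score from 0-10 likelihood-to-recommend ratings using the usual convention (9-10 promoters, 0-6 detractors). The function name and the sample ratings are illustrative assumptions, not part of any particular platform.

```python
def net_promoter_score(ratings):
    """Return NPS (-100 to 100) from 0-10 likelihood-to-recommend ratings."""
    if not ratings:
        raise ValueError("at least one rating is required")
    if any(not 0 <= r <= 10 for r in ratings):
        raise ValueError("ratings must be on the 0-10 scale")
    promoters = sum(1 for r in ratings if r >= 9)   # scores of 9 or 10
    detractors = sum(1 for r in ratings if r <= 6)  # scores of 0 through 6
    return 100.0 * (promoters - detractors) / len(ratings)


# Made-up ratings: 4 promoters, 3 detractors, 3 passives.
sample_ratings = [10, 9, 8, 7, 6, 3, 10, 9, 5, 8]
print(net_promoter_score(sample_ratings))  # 10.0
```

The single number hides the distribution behind it, which is one reason to report it alongside trust, effort, and satisfaction items rather than alone.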

How do you build a defensible sampling and contact design?

Teams build a sampling frame that mirrors the population that the decision covers. For relationship surveys, the frame often equals all active customers meeting tenure and activity rules. For journey surveys, the frame equals customers who experienced the target event within a defined window. Sampling then allocates invitations to achieve precision targets while managing contact burden. Mode choices matter. Web and SMS reduce costs and speed cycles, while phone or assisted modes can lift response for underrepresented segments. Mixed-mode designs can improve coverage and reduce nonresponse when done with consistent wording and layout across modes.³ Research texts explain how mode effects arise and how to test for differences before consolidating results.² Leaders should record inclusion and exclusion rules in a contact policy that sets frequency caps and cooling periods to respect customers and protect data quality.
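A contact policy with frequency caps and cooling periods can be encoded as a simple eligibility check. The sketch below is one possible shape, not a prescribed implementation: the `ContactPolicy` fields, their default values (2 invitations per 90 days, 30-day cooling period), and the `is_eligible` function are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class ContactPolicy:
    # All values are hypothetical defaults; set them from your own policy.
    max_invites: int = 2     # frequency cap per rolling window
    window_days: int = 90    # length of the rolling window
    cooling_days: int = 30   # minimum gap after the most recent invitation


def is_eligible(invite_history, now, policy=ContactPolicy(), opted_out=False):
    """Return True if a customer may receive a new survey invitation."""
    if opted_out:
        return False
    window_start = now - timedelta(days=policy.window_days)
    recent = [t for t in invite_history if t >= window_start]
    if len(recent) >= policy.max_invites:
        return False  # frequency cap reached
    if invite_history and now - max(invite_history) < timedelta(days=policy.cooling_days):
        return False  # still inside the cooling period
    return True


now = datetime(2024, 6, 1)
print(is_eligible([datetime(2024, 5, 20)], now))  # False: invited 12 days ago
print(is_eligible([datetime(2024, 4, 1)], now))   # True: 61 days ago, under the cap
```

Keeping the policy in one versioned object makes it easier to audit which rules applied to a given wave.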

What should ethical and quality standards include in day-to-day practice?

Programs should adopt recognized research standards and encode them in daily operations. ISO 20252 sets service requirements for market, opinion, and social research providers across the full project lifecycle.⁵ National research bodies explain how this standard supports consistency, transparency, and confidence in outputs.⁶ ESOMAR and GRBN publish practical guidelines for primary data collection that define responsibilities for consent, transparency, data minimization, and duty of care.⁷ These guidelines also recommend disclosing sample sources, fieldwork dates, response rates, and weighting so stakeholders can judge fitness for purpose.⁷ Leaders operationalize these principles through approval workflows, fieldwork checklists, and audit trails. Compliance should not slow action. Clear templates for privacy notices, opt-out handling, and data retention reduce cycle time while protecting participants. Consistent disclosure strengthens credibility with regulators and customers.

How do you design the instrument to reduce total survey error?

Teams design instruments to minimize cognitive burden and ambiguity. Start with a logical flow from screening to outcomes to drivers to open text and demographics. Keep wording concrete and avoid double-barreled items that mix concepts. Use balanced scales with labeled endpoints and, when needed, midpoint labels. Pilot the instrument with cognitive interviews to observe comprehension and timing. Apply A/B tests on subject lines, contact times, and reminders to improve cooperation rates without introducing bias. The Tailored Design Method documents methods to reduce reluctance and increase trust through design choices in contacts and layout.⁸ Visual design should adapt to small screens because many customers respond on mobile devices. Randomize attribute lists to avoid order effects. Limit open text to a focused prompt and support it with optional structured diagnostics. Maintain scale consistency across waves to preserve trend comparability.²
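Randomizing attribute lists is easiest to audit when each respondent's order is reproducible. The sketch below seeds Python's standard `random.Random` with a string built from a wave label and respondent identifier, so the same respondent always sees the same order and analysts can reconstruct it later. The function name, the seed format, and the attribute labels are assumptions for illustration.

```python
import random


def presentation_order(attributes, respondent_id, wave):
    """Return a shuffled copy of the attribute list, stable per respondent and wave."""
    rng = random.Random(f"{wave}:{respondent_id}")  # string seed, reproducible across runs
    order = list(attributes)  # copy so the master list is never mutated
    rng.shuffle(order)
    return order


attributes = ["wait time", "agent knowledge", "resolution", "ease of use"]
order = presentation_order(attributes, respondent_id="r-001", wave="2024Q2")
print(order)  # a permutation of the four attributes
```

Storing the presented order (or the seed) with the response lets analysts test for residual order effects.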

How do you implement data capture and integration that analysts trust?

The integration layer must carry the context that gives a response meaning. Each record should include a unique person or account identifier, event metadata such as channel and timestamp, and operational measures like handle time or resolution status. This structure supports driver analysis, cohort tracking, and frontline alerting. Use event-driven architecture to trigger invitations from system-of-record events rather than static lists. Store raw response payloads with immutable audit metadata. Apply standard validation checks for duplicate records, out-of-range values, and straight-lining patterns. Tag every transformation in a data lineage log. Map survey scales into normalized analytical fields so dashboards can compare across journeys. Adopt reference data for business units, products, and segments to enable rollups. Align data retention and deletion with privacy policies. These practices express ISO 20252 service controls in technical operations.⁵
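The record structure and validation checks described above might look like the following minimal sketch. The field names, the 0-10 scale bounds, and the rule that straight-lining is only flagged on grids of four or more items are assumptions chosen for illustration; real programs would tune them to their own instruments.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SurveyResponse:
    # Hypothetical field names; adapt to your own payload schema.
    response_id: str
    account_id: str          # unique person or account identifier
    event_id: str            # system-of-record event that triggered the invite
    channel: str             # event metadata
    submitted_at: str        # ISO 8601 timestamp
    ratings: dict            # item name -> numeric score
    handle_time_sec: Optional[int] = None   # operational measure
    resolved: Optional[bool] = None         # operational measure


def validate(responses, scale_min=0, scale_max=10, min_grid_items=4):
    """Return {response_id: [flags]} for records that fail a check."""
    flags = {}
    seen = set()
    for r in responses:
        issues = []
        key = (r.account_id, r.event_id)
        if key in seen:
            issues.append("duplicate")        # same account answered the same event twice
        seen.add(key)
        scores = list(r.ratings.values())
        if any(not scale_min <= s <= scale_max for s in scores):
            issues.append("out_of_range")
        if len(scores) >= min_grid_items and len(set(scores)) == 1:
            issues.append("straight_lining")  # identical answer on every grid item
        if issues:
            flags[r.response_id] = issues
    return flags


batch = [
    SurveyResponse("r1", "a1", "e1", "web", "2024-06-01T10:00:00Z",
                   {"q1": 8, "q2": 7, "q3": 9, "q4": 6}),
    SurveyResponse("r2", "a1", "e1", "web", "2024-06-01T10:05:00Z",
                   {"q1": 5, "q2": 5, "q3": 5, "q4": 5}),
    SurveyResponse("r3", "a2", "e2", "sms", "2024-06-01T11:00:00Z", {"q1": 11}),
]
print(validate(batch))
```

Flagging rather than deleting keeps the raw payload intact, which fits the immutable-audit principle in the paragraph above.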

Which comparisons help executives interpret survey outcomes responsibly?

Executives benefit from layered comparisons that separate genuine trend from shifts in channel, product, or customer mix. First, show rolling trends with confidence intervals by business unit. Second, normalize for channel or product mix shifts that would otherwise distort the picture. Third, break out new versus existing customers because tenure shapes expectations. Fourth, compare self-service and assisted journeys to highlight where digital design changes improve resolution and reduce effort. Fifth, relate relationship health to behavioral and financial outcomes. The original advocacy research argued that firms with more promoters grow faster than firms with more detractors when supported by disciplined follow-through.⁴ Leaders should test this link with local data rather than assuming a universal effect. Add diagnostics from total survey error models to flag when mode shifts or response composition could explain movements.² This disciplined interpretation prevents reactive swings.
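Two of the comparisons above lend themselves to a short sketch: a confidence interval around a mean score, and a mix-adjusted score that holds the channel mix fixed. The code assumes a normal approximation for the interval (reasonable only with adequately large samples) and direct standardization against a reference mix. The function names and the example figures are made up for illustration.

```python
import math
import statistics


def mean_with_ci(scores, z=1.96):
    """Return (mean, lower, upper) using a normal-approximation interval."""
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two scores to estimate spread")
    mean = statistics.mean(scores)
    half_width = z * statistics.stdev(scores) / math.sqrt(n)
    return mean, mean - half_width, mean + half_width


def mix_adjusted_score(segment_means, reference_mix):
    """Weight each segment's mean by a fixed reference mix (shares sum to 1)."""
    if abs(sum(reference_mix.values()) - 1.0) > 1e-9:
        raise ValueError("reference mix shares must sum to 1")
    missing = set(reference_mix) - set(segment_means)
    if missing:
        raise ValueError(f"no mean for segments: {sorted(missing)}")
    return sum(share * segment_means[seg] for seg, share in reference_mix.items())


# Made-up example: segment means are unchanged, only the channel mix shifts.
segment_means = {"web": 8.0, "phone": 6.0}
wave1_mix = {"web": 0.5, "phone": 0.5}
wave2_mix = {"web": 0.7, "phone": 0.3}

raw_wave1 = mix_adjusted_score(segment_means, wave1_mix)  # weighted by wave 1's own mix
raw_wave2 = mix_adjusted_score(segment_means, wave2_mix)  # weighted by wave 2's own mix
adjusted_wave2 = mix_adjusted_score(segment_means, wave1_mix)  # wave 2 at the reference mix
print(raw_wave1, raw_wave2, adjusted_wave2)
```

In this example the raw score rises between waves even though neither channel improved; the adjusted score stays flat, which shows the movement is a mix effect.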

What risks can derail a VoC survey program and how do you mitigate them?

Programs stumble when they prioritize volume over validity. Over-contacting customers creates fatigue and raises opt-out rates. Poor sampling frames misstate the customer base and produce biased insights. Inconsistent scales or wording break the trend, so stakeholders stop trusting the numbers. Weak governance invites privacy and compliance risks. Teams mitigate these risks with a contact policy, a change control process for wording and scales, and a periodic methods review that checks coverage, nonresponse, and weighting. ESOMAR and GRBN guidelines provide practical checklists for disclosure and duty of care.⁷ ISO 20252 provides auditable service requirements and a vocabulary to align vendors and internal teams.⁵ Senior leaders should see a quarterly instrumentation report that surfaces risk indicators such as response composition changes, bounce rates, or mode shifts. This report keeps the system healthy.

How do you measure success beyond response rate?

Programs measure success on four levels. First, method health metrics cover response rate by segment, sample utilization, bounce rate, and opt-out rate. Second, data quality metrics cover item nonresponse, time to complete, straight-lining incidence, and variance stability. Third, decision utility metrics cover time from field close to recommendation, percentage of insights implemented, and uplift from closed-loop actions. Fourth, business impact metrics cover changes in retention, cross-sell, cost-to-serve, and complaint rates after interventions. Leaders should predefine thresholds that trigger method adjustments. Survey research literature frames this as a continuous process of reducing total survey error while maximizing relevance.² Programs that make these measures visible and routine maintain trust and momentum across the enterprise.
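Predefined thresholds for method health can be checked mechanically after each wave. The sketch below is illustrative only: the threshold values are hypothetical, and the choice to compute response and opt-out rates over delivered (non-bounced) invitations is an assumption, since response rate definitions vary between programs.

```python
def method_health(invited, bounced, completed, opted_out):
    """Compute basic method health rates for one wave or segment."""
    delivered = invited - bounced
    if invited <= 0 or delivered <= 0:
        raise ValueError("need at least one invited and one delivered invitation")
    return {
        "bounce_rate": bounced / invited,
        "response_rate": completed / delivered,   # assumption: delivered as denominator
        "opt_out_rate": opted_out / delivered,
    }


# Hypothetical thresholds: ("max", x) breaches above x, ("min", x) breaches below x.
THRESHOLDS = {
    "bounce_rate": ("max", 0.05),
    "response_rate": ("min", 0.10),
    "opt_out_rate": ("max", 0.02),
}


def breaches(metrics, thresholds=THRESHOLDS):
    """Return the names of metrics that cross their predefined threshold."""
    flagged = []
    for name, (kind, limit) in thresholds.items():
        value = metrics[name]
        if (kind == "max" and value > limit) or (kind == "min" and value < limit):
            flagged.append(name)
    return flagged


wave = method_health(invited=1000, bounced=100, completed=180, opted_out=9)
print(breaches(wave))  # ['bounce_rate']
```

Running the same check per segment, not just in aggregate, helps surface composition changes before they distort trends.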

What are the concrete steps to implement survey instrumentation end to end?

Leaders can implement instrumentation in eight steps that align method with operations. Step one sets objectives and governance with a named owner, a change board, and escalation paths. Step two defines constructs, scales, and wording locked in a versioned instrument library. Step three builds the sampling frame rules and contact policy across channels. Step four selects modes and designs the contact sequence with tested templates. Step five pilots the instrument, runs cognitive interviews, and tunes for comprehension and time. Step six deploys event triggers, payload schemas, and data lineage logs. Step seven validates data quality and documents ISO 20252 and ESOMAR compliance artifacts. Step eight publishes interpretation protocols, confidence intervals, and driver analysis methods for analysts and leaders. These steps operationalize recognized research guidance in a repeatable Customer Experience practice.²³⁵⁷

What is the call to action for Customer Experience and Service Transformation leaders?

Leaders should fund instrumentation as core infrastructure, not a project add-on. A small central methods team can codify standards, maintain the instrument library, and run periodic audits. Business units can own local questions and action plans within the guardrails. This structure sustains quality at scale and allows rapid experimentation with new journeys or features. Executives should connect survey data to operational systems, case management, and finance so actions have a measurable line of sight to outcomes. Programs that treat survey instrumentation as a living system build credibility with boards, regulators, and customers. The result is a Voice of Customer engine that drives smarter decisions, faster improvements, and stronger growth.

FAQ

What is survey instrumentation in a Voice of Customer system?

Survey instrumentation is the end-to-end framework that governs measurement models, sampling frames, mode choices, quality standards, and data integration so VoC data is reliable and decision ready.²³⁵⁷

How do ISO 20252 and ESOMAR/GRBN guidelines support VoC governance?

ISO 20252 sets service requirements across the research lifecycle, while ESOMAR and GRBN provide practical guidance for ethical primary data collection, disclosure, and duty of care. These standards increase consistency, transparency, and trust.⁵⁶⁷

Which design tactics reduce total survey error in customer surveys?

Teams reduce total survey error by keeping wording unambiguous, aligning scales across waves, using mixed modes thoughtfully, piloting with cognitive interviews, and managing contact sequences using Tailored Design principles.²³⁸

Why should executives avoid over-reliance on a single metric like NPS?

The likelihood-to-recommend item can indicate advocacy, but it should sit within a broader measurement model that includes trust, effort, and satisfaction, then links to behaviors and economics for local validation.⁴

Which data fields are essential for integrating survey responses into analytics?

Each record should include unique identifiers, event metadata, operational measures, and immutable audit fields to support driver analysis, cohort tracking, and frontline alerting within enterprise systems.⁵

How should leaders measure VoC program success beyond response rate?

Leaders should track method health, data quality, decision utility, and business impact metrics to ensure the program reduces error and improves outcomes over time.²

Which first steps help a CX team implement instrumentation quickly?

Start by establishing governance, locking a versioned instrument library, defining sampling and contact policy, piloting the instrument, and aligning integration schemas and compliance artifacts to ISO 20252 and ESOMAR guidance.²⁵⁷

Sources

1. Survey Methodology, 2nd Edition. Robert M. Groves, Floyd J. Fowler Jr., Mick P. Couper, James M. Lepkowski, Eleanor Singer, Roger Tourangeau. 2009. Wiley Series in Survey Methodology. https://www.wiley.com/en-br/Survey%2BMethodology%2C%2B2nd%2BEdition-p-9780470465462

2. Survey Methodology. Robert M. Groves et al. 2009. PDF excerpt. Wiley. https://download.e-bookshelf.de/download/0000/8065/21/L-G-0000806521-0002312179.pdf

3. Internet, Phone, Mail, and Mixed-Mode Surveys: The Tailored Design Method, 4th Edition. Don A. Dillman, Jolene D. Smyth, Leah Melani Christian. 2014. Wiley. https://www.wiley.com/en-ae/Internet%2C%2BPhone%2C%2BMail%2C%2Band%2BMixed%2BMode%2BSurveys%3A%2BThe%2BTailored%2BDesign%2BMethod%2C%2B4th%2BEdition-p-9781118456149

4. The One Number You Need to Grow. Frederick F. Reichheld. 2003. Harvard Business Review. https://hbr.org/2003/12/the-one-number-you-need-to-grow

5. ISO 20252:2019, Market, opinion and social research, including insights and data analytics. International Organization for Standardization. 2019. https://www.iso.org/standard/73671.html

6. Quality standards: ISO 20252. Market Research Society. 2025. https://www.mrs.org.uk/standards/quality-standards

7. ESOMAR/GRBN Guideline for Researchers and Clients Involved in Primary Data Collection. ESOMAR and GRBN. 2021. PDF. https://www.jmra-net.or.jp/Portals/0/rule/guideline/20211018_ESOMAR_GRBN_Guideline_on_Primary_Data_Collection.pdf

8. Reducing People’s Reluctance to Respond to Surveys. Don A. Dillman. 2014 chapter reference, Wiley Online Library. https://onlinelibrary.wiley.com/doi/abs/10.1002/9781394260645.ch2
