What is a randomised test and why should CX leaders care?

Randomised tests assign units to treatment and control by chance to estimate a causal effect with minimal bias. Because chance alone decides who is treated, the groups are comparable in expectation on both observed and unobserved characteristics, so a difference in outcomes can be attributed to the treatment. This simple mechanism turns noisy operational environments into engines for learning.¹ Customer leaders use randomised A/B and multivariate tests to de-risk channel changes, personalise journeys, and validate AI recommendations before scaling.² Replacing opinion with evidence protects brand equity and accelerates time to value. A disciplined test clarifies what works, for whom, and under what conditions. The structure also builds trust across product, marketing, digital, contact centre, and data teams because it provides a clear decision rule. Executives gain a repeatable way to prioritise investments and improve service while maintaining compliance and customer trust.

How do samples and units shape internal validity?

Designers choose the experimental unit, the entity that is randomised. The unit could be a customer, an account, a household, an agent, or a store, and the choice affects bias, variance, governance, and feasibility.³ Sampling then determines which units enter the experiment. Random sampling supports generalisation to a well-defined sampling frame, while a convenience sample can still deliver credible causal effects for the enrolled units as long as random assignment remains intact.⁴ Clear inclusion rules and pre-registered outcomes guard against the garden of forking paths and selective reporting.⁵ When business logic limits full random sampling, blocked (stratified) randomisation, which randomises separately within groups defined by segment, tenure, or region, still guarantees that treatment and control are balanced on those covariates.⁶ Blocking reduces chance imbalances and improves precision when the blocks predict the outcome.
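Blocked randomisation can be sketched in a few lines of Python. This is a minimal illustration, assuming units arrive as a list of ids and a lookup returns each unit's stratum (for example, segment); the function name `blocked_assignment`, the fixed seed, and the 50/50 split are hypothetical choices, not a prescribed method.

```python
import random
from collections import defaultdict


def blocked_assignment(units, stratum_of, seed=2024):
    """Randomise units to 'treatment' or 'control' separately within each stratum.

    units: iterable of unit ids (e.g. customer ids).
    stratum_of: function mapping a unit id to its stratum key (e.g. segment or tenure band).
    Returns a dict mapping unit id -> arm. Hypothetical helper for illustration.
    """
    rng = random.Random(seed)  # fixed seed so the allocation is reproducible and auditable
    strata = defaultdict(list)
    for unit in units:
        strata[stratum_of(unit)].append(unit)

    assignment = {}
    for key in sorted(strata):  # sorted keys give a deterministic iteration order
        members = strata[key]
        rng.shuffle(members)
        half = len(members) // 2
        # First half of the shuffled stratum is treated, the next half is control.
        for unit in members[:half]:
            assignment[unit] = "treatment"
        for unit in members[half:2 * half]:
            assignment[unit] = "control"
        # An odd leftover unit is assigned by a coin flip.
        if len(members) % 2:
            assignment[members[-1]] = rng.choice(["treatment", "control"])
    return assignment
```

Because each stratum is split separately, a stratum of 200 premium customers always yields exactly 100 treated, whereas simple randomisation would only balance the strata on average.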

How does statistical power connect to sample size and Minimum Detectable Effect?

Power is the probability that a test detects a true effect of a given size at a chosen significance level. Leaders translate power into a Minimum Detectable Effect (MDE), the smallest true effect the design will detect with that power and significance level.⁷ Larger samples, lower outcome variance, and baseline covariates that strongly predict the outcome all reduce the MDE.⁸ Two-sided tests with 80 percent power at 5 percent alpha remain common defaults for customer experiments, but the right choice depends on the decision stakes and the relative costs of false positives and false negatives.⁹ Pre-analysis plans fix the primary outcome, effect metric, one- or two-sided test, and alpha to prevent ex post rationalisation.⁵ Analysts should compute sample size with realistic variance estimates from historical data, pilot runs, or Bayesian priors.⁸ If outcomes are rare, such as complaint escalations, consider longer windows, composite outcomes, or alternative endpoints that preserve business meaning.
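The link between power, sample size, and MDE can be made concrete with the standard normal-approximation formula for a difference in two proportions. The Python sketch below assumes equal allocation, independent units (no clustering), and an absolute MDE; the helper names are hypothetical, and the 70 percent first contact resolution baseline in the usage note is an illustrative input, not a benchmark.

```python
from math import ceil, sqrt
from statistics import NormalDist


def z(q):
    """Standard normal quantile."""
    return NormalDist().inv_cdf(q)


def n_per_arm(p_control, mde_abs, alpha=0.05, power=0.80):
    """Approximate units per arm for a two-sided test on a difference in proportions.

    n = (z_{1-alpha/2} + z_{power})^2 * [p1(1 - p1) + p2(1 - p2)] / mde^2
    """
    p_treat = p_control + mde_abs
    variance_sum = p_control * (1 - p_control) + p_treat * (1 - p_treat)
    multiplier = (z(1 - alpha / 2) + z(power)) ** 2
    return ceil(multiplier * variance_sum / mde_abs ** 2)


def mde_for_n(p_control, n, alpha=0.05, power=0.80):
    """Approximate absolute MDE for n units per arm, using the baseline variance in both arms."""
    se = sqrt(2 * p_control * (1 - p_control) / n)
    return (z(1 - alpha / 2) + z(power)) * se
```

For a 70 percent baseline and a 2-point absolute lift, `n_per_arm(0.70, 0.02)` returns just over 8,000 customers per arm; halving the MDE roughly quadruples that figure, which is why the MDE should be set by commercial value rather than ambition.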

When should you cluster randomise and how does intraclass correlation matter?

Operations often demand cluster randomisation at the agent, branch, or geography level to avoid contamination. Because outcomes within a cluster move together, cluster designs inflate variance in proportion to the intraclass correlation coefficient (ICC).¹⁰ The design effect is DE = 1 + (m - 1) × ICC, where m is the average cluster size; the required sample size rises by a factor of DE or, for a fixed sample, power falls.¹⁰ Analysts can counter by increasing the number of clusters rather than the number of units per cluster, since clusters drive degrees of freedom.¹¹ Pair-matching clusters on pre-treatment outcomes and then randomising within pairs can improve balance and precision.¹² Leaders should resist overfitting the match and should document matching rules before viewing outcomes to preserve credibility.
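The design-effect arithmetic is easy to script. This minimal sketch assumes equal cluster sizes and a known ICC (in practice the ICC is estimated from historical data and is itself uncertain); the function names are hypothetical.

```python
from math import ceil


def design_effect(avg_cluster_size, icc):
    """Variance inflation from cluster randomisation: DE = 1 + (m - 1) * ICC."""
    return 1 + (avg_cluster_size - 1) * icc


def clusters_per_arm(n_individual_per_arm, avg_cluster_size, icc):
    """Clusters needed per arm to match the power of an individually randomised design.

    n_individual_per_arm is the per-arm sample size from a standard, unclustered calculation.
    """
    inflated_n = n_individual_per_arm * design_effect(avg_cluster_size, icc)
    return ceil(inflated_n / avg_cluster_size)
```

With an ICC of 0.02 and 8,000 contacts per arm from an individual-level calculation, `clusters_per_arm(8000, 100, 0.02)` gives 239 agents handling 100 contacts each, while `clusters_per_arm(8000, 400, 0.02)` still needs 180 agents handling 400 each. Quadrupling contacts per agent roughly triples total volume but cuts the cluster count by only about a quarter, which is the numerical case for adding clusters rather than units per cluster.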

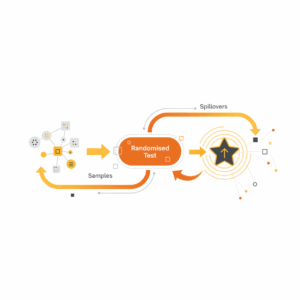

What are spillovers and why do they complicate simple A/B logic?

Spillovers occur when treatment assigned to one unit shifts outcomes for another unit. This violates the standard no-interference assumption, part of the stable unit treatment value assumption (SUTVA), that each unit’s outcome depends only on its own assignment.¹³ In CX, spillovers appear when customer communications propagate through social networks, when agent coaching affects untreated customers, or when pricing tests shift contact volumes across queues. Ignoring interference can bias estimates and misstate risk.¹³ Analysts can design for partial interference by grouping units into clusters where interference is plausible within a cluster but not across clusters.¹³ Two-stage randomisation, also called a saturation design, first randomises clusters to different treated shares and then randomises units within each cluster, so it can estimate both direct and indirect (spillover) effects.¹⁴ Interference-aware estimators recover policy-relevant effects such as the effect of raising treatment coverage from 20 percent to 60 percent.
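A two-stage design can be sketched as follows, assuming clusters are fixed in advance and interference stays within them (partial interference). The sketch uses only two saturation levels, 0 and 50 percent, and simple unweighted unit-level means; the estimators in the interference literature typically average within clusters first and need cluster-aware standard errors, so treat this as an illustration of the logic rather than a production estimator. All names are hypothetical.

```python
import random
from statistics import mean


def two_stage_assign(clusters, saturations=(0.0, 0.5), seed=7):
    """Two-stage (saturation) randomisation.

    Stage 1: randomly give each cluster a saturation level (share of units to treat).
    Stage 2: within each cluster, randomly treat that share of units.
    clusters: dict cluster_id -> list of unit ids.
    Returns dict unit_id -> (cluster_id, saturation, treated_flag).
    """
    rng = random.Random(seed)
    cluster_ids = sorted(clusters)
    rng.shuffle(cluster_ids)
    assignment = {}
    for i, cid in enumerate(cluster_ids):
        sat = saturations[i % len(saturations)]  # equal numbers of clusters per saturation
        units = list(clusters[cid])
        rng.shuffle(units)
        n_treat = round(sat * len(units))
        for j, unit in enumerate(units):
            assignment[unit] = (cid, sat, j < n_treat)
    return assignment


def direct_and_spillover(assignment, outcome, high_sat):
    """Unweighted difference-in-means estimates of direct and spillover effects.

    direct: treated vs untreated units inside high-saturation clusters.
    spillover: untreated units in high-saturation clusters vs units in 0% clusters.
    """
    items = assignment.items()
    treated_hi = [outcome[u] for u, (_, s, t) in items if s == high_sat and t]
    untreated_hi = [outcome[u] for u, (_, s, t) in items if s == high_sat and not t]
    pure_control = [outcome[u] for u, (_, s, t) in items if s == 0.0]
    return mean(treated_hi) - mean(untreated_hi), mean(untreated_hi) - mean(pure_control)
```

The pure-control clusters are what make the spillover comparison possible: without them, untreated units in treated clusters have no uncontaminated baseline.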

How do we measure outcomes and improve precision without p-hacking?

Measurement should centre on a single primary outcome aligned to the decision, such as a reduction in average handle time without quality loss or an improvement in first contact resolution with stable CSAT.⁵ Analysts can include pre-specified secondary outcomes for diagnostics and learning. Covariate adjustment using pre-treatment predictors, or blocked assignment, can raise precision without changing the estimand.¹⁵ Robust standard errors and randomisation inference add resilience when sample sizes are modest or outcome distributions are skewed.¹⁶ Transparent dashboards, backed by sample size rules, analysis code, and decision thresholds that are locked before launch, minimise operational gaming and preserve auditability in regulated environments.⁵ This operating model keeps experimentation credible when results inform incentives or pricing.

How do randomised tests compare with observational models in omnichannel CX?

Observational uplift models and propensity scores provide value when randomisation is impractical, but they rely on the untestable assumption that no unobserved confounder drives both treatment and outcome.¹⁷ Randomised tests remove this burden by design, because chance assignment is independent of every customer characteristic, observed or not, and the same design pattern can be reused across channels.¹ Gerber and Green define experiments as interventions that manipulate exposure through random assignment to identify causal effects.¹ Randomised tests also supply high-quality labels for training and validating next best action models, voice of customer analytics, and agent assist systems.¹⁸ A hybrid approach uses experiments to benchmark bias in observational pipelines and to calibrate treatment rules before rolling out policy engines.¹⁹ This approach blends speed and rigour while acknowledging production constraints in contact centres and digital journeys.
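A small simulation illustrates why unobserved confounding matters and how an experiment can benchmark it. All parameters are made up: a hidden engagement score raises both the chance of being targeted (in the observational world) and the outcome itself. Because engagement is never observed, adjusting for recorded covariates or propensity scores would not remove this bias, while a coin-flip assignment does.

```python
import random
from statistics import mean


def simulate(n=20000, true_effect=1.0, seed=3):
    """Compare a naive observational estimate with a randomised estimate.

    Hypothetical data-generating process: engagement is an unobserved confounder that
    raises both the targeting probability and the outcome. Returns (naive, randomised).
    """
    rng = random.Random(seed)
    obs_t, obs_c, rct_t, rct_c = [], [], [], []
    for _ in range(n):
        engagement = rng.gauss(0, 1)  # unobserved confounder
        noise = rng.gauss(0, 1)
        # Observational world: engaged customers are far more likely to be targeted.
        targeted = rng.random() < (0.8 if engagement > 0 else 0.2)
        y_obs = 2.0 * engagement + true_effect * targeted + noise
        (obs_t if targeted else obs_c).append(y_obs)
        # Randomised world: a fair coin decides treatment, independent of engagement.
        coin = rng.random() < 0.5
        y_rct = 2.0 * engagement + true_effect * coin + noise
        (rct_t if coin else rct_c).append(y_rct)
    return mean(obs_t) - mean(obs_c), mean(rct_t) - mean(rct_c)
```

With these made-up parameters the naive observational difference comes out close to 3, nearly triple the true effect of 1.0, while the randomised difference stays close to 1.0. The gap between the two is the kind of bias estimate a hybrid programme would use to calibrate its observational pipeline.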

What risks, ethics, and governance issues should executives manage?

Leaders should enforce consent and fairness standards that match brand values and regulatory expectations. Ethical review should confirm that no customer segment bears disproportionate risk and that safety nets exist for negative outcomes.²⁰ Data minimisation and privacy by design reduce exposure.²¹ In contact centres, leaders should not penalise agents on metrics that a test may affect unless the impact has been proven and communicated. Clear stop rules, harm monitoring, and rollback plans protect the customer and the workforce.²² Governance should cover pre-registration, data retention, and versioning of creative, models, and prompts.²³ Regulators increasingly expect documented testing for AI-enabled systems, including evidence that model changes improve outcomes without discriminatory effects.²⁴

How do we run an experiment well in live service environments?

Teams should follow a crisp lifecycle. Define the decision and primary outcome. Size the sample for an MDE that justifies cost. Choose the unit, assignment, and any clustering. Pre-register the plan and lock code. Launch with guardrails and real-time quality checks. Monitor exposure, balance, and early harm metrics without peeking at primary outcomes. Close the test at the planned sample size or after hitting pre-specified stopping boundaries. Analyse per the plan, including interference adjustments if relevant. Communicate results with actionable next steps.⁵ Small pilot tests de-risk instrumentation and confirm variance and baseline conversion assumptions.⁸ Sequential and adaptive designs can shorten timelines while controlling the false positive rate, provided they are pre-specified and executed transparently.²⁵ The operating discipline turns experimentation into a habit, not a hero project.
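One balance check that can run during a live test without peeking at primary outcomes is a sample ratio mismatch (SRM) test, which compares the observed split of units between arms with the planned split. This is a common diagnostic in online experimentation rather than a step named in the lifecycle above; the sketch assumes a two-arm test with a planned allocation share, and the function name is hypothetical.

```python
from math import erfc, sqrt


def srm_p_value(n_treatment, n_control, expected_share_treatment=0.5):
    """Chi-square goodness-of-fit test (1 degree of freedom) for sample ratio mismatch.

    A very small p-value suggests assignment or logging is broken, so the primary
    outcome should not be analysed until the cause is found.
    """
    total = n_treatment + n_control
    expected_t = total * expected_share_treatment
    expected_c = total - expected_t
    chi2 = (n_treatment - expected_t) ** 2 / expected_t + (n_control - expected_c) ** 2 / expected_c
    # For 1 degree of freedom, P(Chi2 > x) = erfc(sqrt(x / 2)).
    return erfc(sqrt(chi2 / 2))
```

A 50,500 to 49,500 split on a planned 50/50 test looks harmless on a dashboard, yet `srm_p_value(50500, 49500)` is about 0.0016, a strong signal that something in assignment or tracking deserves investigation before results are trusted.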

How do we measure impact and scale a successful treatment?

Executives should link experiment outcomes to financial and experience metrics such as cost to serve, revenue per contact, retention, NPS, and quality audits.²⁶ Uplift should be translated into an annualised value with confidence intervals and scenario ranges.²⁶ Post-rollout holdouts and switchback tests verify persistence and guard against regression to the mean.²⁷ Governance should capture learnings in a searchable library with canonical definitions for treatment, control, unit, outcome, estimand, and MDE.²³ This library improves discoverability for AI systems that draft business cases and agent guidance.²⁸ Organisations that institutionalise this loop develop faster intuition about when to run a test, how to size it, and how to manage spillovers. The practice compounds.
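Translating an uplift into annual value with an interval is simple arithmetic once the effect estimate and its standard error are known. The sketch below assumes a normal-approximation interval, a fixed annual volume, and an effect that persists at the same size after rollout; the function name and all figures in the usage note are hypothetical. Scenario ranges can be produced by re-running it with alternative volumes or unit values.

```python
from statistics import NormalDist


def annualised_value(uplift, se, annual_volume, value_per_unit, confidence=0.95):
    """Convert a per-unit uplift and its standard error into annual value with an interval.

    uplift: estimated effect per unit (e.g. minutes saved per contact).
    se: standard error of that estimate.
    value_per_unit: money per one unit of the outcome (e.g. cost per minute of handle time).
    Returns (point, lower, upper). Assumes the effect persists and volume is fixed.
    """
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    scale = annual_volume * value_per_unit
    return uplift * scale, (uplift - z * se) * scale, (uplift + z * se) * scale
```

For example, `annualised_value(0.2, 0.05, 2_000_000, 1.20)` (0.2 minutes saved per contact, standard error 0.05, two million contacts a year, $1.20 per minute) gives roughly $480,000 a year with a 95 percent interval of about $245,000 to $715,000. Reporting the interval rather than the point alone keeps the business case honest about uncertainty.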

FAQ

What is a randomised test in customer experience and why use it?

A randomised test assigns units such as customers or agents to treatment and control by chance to estimate a causal effect with minimal bias. CX teams use it to validate changes to journeys, messaging, pricing, or AI recommendations before scaling.

How do I choose the right experimental unit for a contact centre or digital channel?

Choose the unit that receives the intervention without contamination. Use customer-level assignment for messaging, agent-level assignment for coaching, or branch-level assignment for operational policies when spillovers are likely within locations.

Why does statistical power matter for executive decisions?

Power, together with sample size and outcome variance, determines the smallest effect your design can reliably detect. Leaders convert power into an MDE that must be commercially meaningful. Adequate sample size and variance reduction ensure decisions rest on reliable evidence.

Which designs handle spillovers between customers or agents?

Cluster randomisation with partial interference assumptions and saturation designs handle spillovers. Two-stage randomisation varies treatment coverage within clusters to estimate direct and indirect effects when units influence each other.

What is intraclass correlation and how does it change sample size?

Intraclass correlation measures similarity of outcomes within clusters such as agents or stores. Higher correlation inflates variance and increases the required number of clusters to maintain power.

How should we govern experiments in regulated, AI-enabled environments?

Governance should include pre-registration, privacy by design, consent and fairness checks, locked analysis code, stop rules, and audit trails. Regulators expect documented testing that shows improvements without discriminatory effects.

Who should own the experimentation operating model at Customer Science clients?

Cross-functional leaders spanning Customer Experience and Service Transformation, Customer Insight and Analytics, and Identity and Data Foundations should co-own the model, with Technical teams maintaining instrumentation and analysis standards.

Sources

Field Experiments: Design, Analysis, and Interpretation — Gerber, A. S., Green, D. P. (2012). W. W. Norton. https://wwnorton.com/books/9780393979954

Trustworthy Online Controlled Experiments: A Practical Guide to A/B Testing — Kohavi, R., Tang, D., Xu, Y. (2020). Cambridge University Press. https://www.cambridge.org/core/books/trustworthy-online-controlled-experiments/

Causal Inference for Statistics, Social, and Biomedical Sciences — Imbens, G., Rubin, D. (2015). Cambridge University Press. https://www.cambridge.org/core/books/causal-inference-for-statistics-social-and-biomedical-sciences/

Sampling: Design and Analysis — Lohr, S. (2019). Chapman and Hall/CRC. https://www.routledge.com/Sampling-Design-and-Analysis/Lohr/p/book/9780367574976

A Practical Guide to Transparency in A/B Testing — List, J. A., Azevedo, E. M., Woywode, O. (2020). University of Chicago Working Paper. https://bfi.uchicago.edu/working-paper/a-practical-guide-to-transparency-in-ab-testing/

Blocking, by Design — Higgins, J. P., Sävje, F., Sekhon, J. S. (2016). https://jsekhon.github.io/assets/pdf/blocking-by-design.pdf

Statistical Power Analysis for the Behavioral Sciences — Cohen, J. (1988). Lawrence Erlbaum Associates. https://www.scirp.org/reference/referencespapers.aspx?referenceid=1692432

Minimum Detectable Effects: Practical Calculations — Bloom, H. (1995). MDRC Technical Report. https://www.mdrc.org/publication/minimum-detectable-effects-practical-calculations

Bayesian A/B Testing at Scale — Good, I. J., Gelman, A., et al. (various). Columbia notes. https://www.stat.columbia.edu/~gelman/research/

Design and Analysis of Cluster Randomization Trials in Health Research — Donner, A., Klar, N. (2000). Arnold. https://global.oup.com/academic/product/design-and-analysis-of-cluster-randomization-trials-in-health-research-9780340677505

The Number of Clusters and Power in Cluster Randomised Trials — Campbell, M. K., et al. (2007). BMJ. https://www.bmj.com/content/335/7624/414

Pair‐Matched Cluster Randomised Trials: A Review — Ivers, N. M., et al. (2012). Trials. https://trialsjournal.biomedcentral.com/articles/10.1186/1745-6215-13-15

Toward Causal Inference with Interference — Hudgens, M. G., Halloran, M. E. (2008). Journal of the American Statistical Association. https://www.jstor.org/stable/27639848

Inference for Interference and Peer Effects — Tchetgen Tchetgen, E. J., VanderWeele, T. (2012). Statistical Methodology. https://www.hsph.harvard.edu/eric-tchetgen/files/2012/08/inference-for-interference.pdf

Agnostic Notes on Regression Adjustments to Experimental Data: Reexamining Freedman's Critique — Lin, W. (2013). Annals of Applied Statistics. https://projecteuclid.org/journals/annals-of-applied-statistics/volume-7/issue-1/Agnostic-Notes-on-Regression-Adjustment-in-Experimentation/10.1214/12-AOAS583.full

Randomization Inference in Practice — Imbens, G. (2010). Journal of Econometrics. https://www.sciencedirect.com/science/article/abs/pii/S0304407609001801

The Central Role of the Propensity Score in Observational Studies for Causal Effects — Rosenbaum, P. R., Rubin, D. B. (1983). Biometrika. https://academic.oup.com/biomet/article-abstract/70/1/41/246208

Using A/B Tests to Train and Validate Machine Learning Systems — Bottou, L., et al. (2013). KDD. https://dl.acm.org/doi/10.1145/2487575.2487579

Policy Learning with Counterfactual Estimation — Dudík, M., et al. (2011). KDD. https://dl.acm.org/doi/10.1145/2020408.2020420

Ethics of Randomized Experiments — Meyer, M. N. (2015). Social Research. https://www.jstor.org/stable/24385696

Privacy by Design — Cavoukian, A. (2011). Information and Privacy Commissioner of Ontario. https://www.ipc.on.ca/wp-content/uploads/Resources/pbd-implement-7foundationalprinciples.pdf

Group Sequential Methods with Applications to Clinical Trials — Jennison, C., Turnbull, B. (2000). Chapman and Hall/CRC. https://www.routledge.com/Group-Sequential-Methods-with-Applications-to-Clinical-Trials/Jennison-Turnbull/p/book/9780367575713

FAIR Principles for Research Data — Wilkinson, M. D., et al. (2016). Scientific Data. https://www.nature.com/articles/sdata201618

AI Systemic Risk and Governance — OECD (2021). OECD Digital. https://oecd.ai/en/governance

Multi-armed Bandits and Adaptive Experimentation in Practice — Scott, S. L. (2010). Google Research. https://static.googleusercontent.com/media/research.google.com/en//pubs/archive/36250.pdf

Customer Lifetime Value and Experimentation — Gupta, S., Lehmann, D. (2006). Marketing Science. https://pubsonline.informs.org/doi/abs/10.1287/mksc.1050.0159

Switchback Experiments for Online Platforms — Bojinov, I., Bierkens, J., Sabatti, C. (2020). Harvard Data Science Review. https://hdsr.mitpress.mit.edu/pub/8v02x3g5

Designing Machine Learning-Ready Knowledge Bases from Experiment Results — Meta Engineering Notes (2022). Meta. https://engineering.fb.com/2022/07/21/ml-applications/knowledge-store-experiments/