What problem does a CX maturity assessment actually solve?

Leaders need a clear, credible way to locate their organisation on the path from ad hoc efforts to a disciplined, value-producing customer experience system. A CX maturity assessment translates scattered initiatives into a shared map of capabilities, gaps, and next steps. It shows whether your teams can reliably convert customer insight into journey improvements that lift revenue and reduce cost to serve. Research links better experiences to stronger retention and share of wallet, so a maturity score that tracks those capabilities is a leading indicator of financial outcomes rather than a vanity number.¹ ² An assessment also aligns stakeholders around a small set of outcome metrics, such as First Contact Resolution and repeat contacts, so improvement efforts stay honest.³

What is CX maturity in plain terms?

CX maturity describes how consistently your organisation can design, deliver, and improve journeys that customers complete successfully. Mature programs connect strategy to value, manage journeys as products, measure outcomes beyond satisfaction, and embed governance so quality does not drift. Industry models differ in labels, but they share a path from reactive fixes to proactive, cross-functional management and continuous improvement. Forrester’s and Gartner’s frameworks both emphasise strategy, insight, design, delivery, and measurement as the backbone, with governance tying it together.⁴ ⁵ The destination is repeatable mechanisms that reduce customer effort and produce measurable gains in loyalty and cost to serve.¹ ²

How do you structure a practical maturity model?

Use six domains that map to how work actually gets done:

- Strategy and Value Linkage. Outcomes and value trees are defined and funded.
- Journey Management and Design. Priority journeys have owners, blueprints, and next-state designs customers can complete.
- Insight and Knowledge. Voice-of-customer, operational data, and knowledge management work as one system.
- Delivery and Orchestration. Cross-functional teams ship small changes weekly across channels and policies.
- Governance and Risk. Standards, roles, and privacy controls are explicit and auditable.
- Measurement and ROI. Leading signals pair with lagging outcomes that the board accepts.

Score each domain on a 0–4 scale: 0 Absent, 1 Ad hoc, 2 Emerging, 3 Repeatable, 4 Reliable. Publish one strength, one gap, and one action per domain to keep the assessment tight and actionable.⁴ ⁵
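The scale and the "one strength, one gap, one action" rule can be held in a small scorecard that refuses to render until every domain is complete. This is a minimal Python sketch, assuming one record per domain; the names `Level`, `DomainScore`, and `summary_lines` are hypothetical and do not come from the Forrester or Gartner models.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import List


class Level(IntEnum):
    """The 0-4 maturity scale used in this assessment."""
    ABSENT = 0
    AD_HOC = 1
    EMERGING = 2
    REPEATABLE = 3
    RELIABLE = 4


# The six domains, in the order the model lists them.
DOMAINS = [
    "Strategy and Value Linkage",
    "Journey Management and Design",
    "Insight and Knowledge",
    "Delivery and Orchestration",
    "Governance and Risk",
    "Measurement and ROI",
]


@dataclass(frozen=True)
class DomainScore:
    domain: str
    level: Level
    strength: str  # exactly one strength
    gap: str       # exactly one gap
    action: str    # exactly one next action


def summary_lines(scores: List[DomainScore]) -> List[str]:
    """Validate the scorecard, then render one line per domain in model order."""
    by_domain = {s.domain: s for s in scores}
    if len(scores) != len(DOMAINS) or set(by_domain) != set(DOMAINS):
        raise ValueError("score each of the six domains exactly once")
    lines = []
    for name in DOMAINS:
        s = by_domain[name]
        if not (s.strength and s.gap and s.action):
            raise ValueError(f"{name}: a strength, a gap and an action are all required")
        label = s.level.name.replace("_", " ").capitalize()  # e.g. "Ad hoc"
        lines.append(f"{name}: {s.level.value} {label} | next action: {s.action}")
    return lines
```

Using an `IntEnum` keeps the levels comparable as numbers, so current and target levels can be subtracted directly when the one-page summary is built.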

Which outcomes prove maturity beyond a survey score?

Anchor on three outcome threads. First Contact Resolution (FCR) shows that assisted interactions resolve the first time.³ Repeat-within-seven-days on the same issue reveals whether journeys actually removed effort. Cost per resolved contact ties effort and process quality to economics. Pair these with a design-friendly lead indicator such as time to first useful step so teams can steer improvements weekly. Google’s HEART framework is a pragmatic way to link goals to signals and metrics so dashboards guide decisions rather than celebrate activity.⁶
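All three outcome threads can be computed from an ordinary contact log. The Python sketch below is illustrative and rests on stated assumptions: each contact carries a customer id, an issue code, a timestamp, an agent-marked `resolved` flag, and a handling cost, and a contact counts toward FCR only if it was marked resolved and the same customer did not return about the same issue within seven days. Contact centres define FCR in several ways, so treat this as one reasonable definition, not the ICMI one.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, List

REPEAT_WINDOW = timedelta(days=7)


@dataclass(frozen=True)
class Contact:
    customer_id: str
    issue: str        # hypothetical issue or reason code
    at: datetime
    resolved: bool    # agent marked the issue resolved on this contact
    cost: float       # handling cost of this contact


def has_repeat(c: Contact, log: List[Contact]) -> bool:
    """True if the same customer came back about the same issue within 7 days."""
    return any(
        o.customer_id == c.customer_id
        and o.issue == c.issue
        and c.at < o.at <= c.at + REPEAT_WINDOW
        for o in log
    )


def outcome_metrics(log: List[Contact]) -> Dict[str, float]:
    """FCR, 7-day repeat rate and cost per resolved contact, all counted per contact."""
    if not log:
        raise ValueError("empty contact log")
    repeats = sum(has_repeat(c, log) for c in log)
    first_time = sum(c.resolved and not has_repeat(c, log) for c in log)
    resolved = sum(c.resolved for c in log)
    total_cost = sum(c.cost for c in log)
    return {
        "fcr": first_time / len(log),
        "repeat_7d": repeats / len(log),
        "cost_per_resolved": total_cost / resolved if resolved else float("inf"),
    }
```

The pairwise scan is quadratic, which is fine for an assessment sample; a production pipeline would group contacts by customer and issue before looking for repeats.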

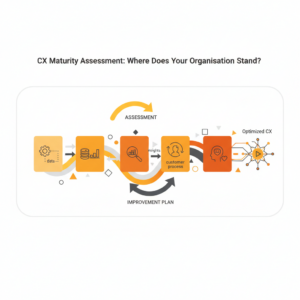

How do you run the assessment step by step?

Step 1 — Define scope and value. Pick the top five journeys by volume and value. Map each to business outcomes using a value tree that links abandonment, FCR, and conversion to revenue and cost.¹

Step 2 — Gather evidence. For each domain, collect artifacts: strategy docs, journey maps and service blueprints, VoC samples, knowledge articles, change logs, QA forms, privacy controls, and KPI packs. Service blueprinting is essential because it exposes backstage policies and systems that shape what customers feel.⁷

Step 3 — Score and calibrate. Score each domain 0–4 and cite the evidence behind each score. Calibrate scores across a cross-functional group to counter optimism bias.

Step 4 — Prioritise actions. Select up to two priority actions per domain that can move outcomes within a quarter, and keep each one small enough to ship.

Step 5 — Report and fund. Present a one-page summary that shows current level, target level per domain, and the expected movement in completion, FCR, repeats, and cost per resolved contact. Use low/base/high ranges to price uncertainty.¹
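The low/base/high ranges in Step 5 can be priced from a single branch of the Step 1 value tree. A minimal Python sketch, assuming the branch being priced is "fewer repeat contacts lower cost to serve"; the scenario numbers are hypothetical placeholders for the baselines gathered in Step 2, and `Scenario` and `price_range` are illustrative names.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Scenario:
    """One value-tree branch: fewer repeat contacts -> lower cost to serve."""
    repeat_drop: float       # absolute fall in the 7-day repeat rate (0.02 = 2 points)
    cost_per_contact: float  # handling cost of each avoided contact, in dollars


def annual_saving(annual_contacts: int, s: Scenario) -> float:
    """Avoided repeat contacts multiplied by what each one would have cost."""
    avoided_contacts = annual_contacts * s.repeat_drop
    return avoided_contacts * s.cost_per_contact


def price_range(annual_contacts: int, scenarios: Dict[str, Scenario]) -> Dict[str, float]:
    """Saving per named scenario, rounded to cents for the one-page summary."""
    return {name: round(annual_saving(annual_contacts, s), 2) for name, s in scenarios.items()}


# Hypothetical inputs for illustration only.
SCENARIOS = {
    "low": Scenario(repeat_drop=0.01, cost_per_contact=6.0),
    "base": Scenario(repeat_drop=0.02, cost_per_contact=8.0),
    "high": Scenario(repeat_drop=0.03, cost_per_contact=10.0),
}
```

Calling `price_range(200_000, SCENARIOS)` yields one saving per scenario, which maps directly onto the low/base/high columns finance will expect.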

What does “good” look like in each domain?

Strategy and Value Linkage. Leaders publish two to four outcome targets and their economic links. McKinsey’s work shows that explicit CX-to-value chains accelerate decisions and protect funding.¹

Journey Management and Design. Priority journeys have named owners, current and next-state maps, and service blueprints. Designs are task-first and stateful because users scan and decide quickly.⁸

Insight and Knowledge. Voice-of-customer, operational data, and complaints feed into a maintained knowledge base with short, scannable, current articles. Knowledge-Centered Service defines roles and lifecycle so guidance stays accurate.⁹

Delivery and Orchestration. Squads ship weekly, instrument changes, and trigger event-based messages that stop when the job completes, which reduces “just checking” demand.¹⁰

Governance and Risk. Contact centre standards require accurate, current information and consistent outcomes; privacy programs enforce informed, specific, current, and voluntary consent.¹¹ ¹²

Measurement and ROI. HEART ties goals to signals; FCR, repeats, and cost per resolved contact prove value.³ ⁶
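The HEART discipline of tying every metric to a goal, a signal, a decision owner, and a target can be checked mechanically. A minimal Python sketch, assuming the map is kept as a plain list; `MetricSpec`, `map_problems`, and the example entries are hypothetical, and only the five category names come from the HEART paper.

```python
from dataclasses import dataclass
from typing import List, Optional

HEART_CATEGORIES = {"Happiness", "Engagement", "Adoption", "Retention", "Task success"}


@dataclass(frozen=True)
class MetricSpec:
    category: str            # HEART category the goal belongs to
    goal: str
    signal: str
    metric: str
    owner: Optional[str]     # person who makes decisions on this metric
    target: Optional[float]  # agreed target value


def map_problems(specs: List[MetricSpec]) -> List[str]:
    """List every metric lacking a valid HEART category, a decision owner or a target."""
    problems = []
    for s in specs:
        if s.category not in HEART_CATEGORIES:
            problems.append(f"{s.metric}: unknown HEART category {s.category!r}")
        if not s.owner:
            problems.append(f"{s.metric}: no decision owner")
        if s.target is None:
            problems.append(f"{s.metric}: no target")
    return problems


# Illustrative entries only; owners and targets are hypothetical.
EXAMPLE_MAP = [
    MetricSpec("Task success", "Customers finish an address change first time",
               "Address change completed with no follow-up contact",
               "FCR for address changes", owner="Moving-home journey owner", target=0.80),
    MetricSpec("Task success", "Customers reach a useful step quickly",
               "Time from landing to first useful step",
               "Median time to first useful step (s)", owner=None, target=60.0),
]
```

Running `map_problems(EXAMPLE_MAP)` flags the second entry because it has no decision owner, which is exactly the kind of orphan metric that turns a dashboard into a vanity display.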

How do you score journeys without politics?

Score each journey on three axes: frequency, pain, and value. Frequency comes from traffic and demand. Pain comes from VoC themes and complaint rates. Value comes from the journey’s link to churn, revenue, or cost. Plot the journeys and select two to four for the next quarter. Express the benefits with conservative ranges and a confidence factor so finance sees risk priced in, not hidden.¹
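The text leaves the combination rule open, so the sketch below makes one assumption explicit: each axis is scored 1 to 5 and the three scores are multiplied, so a journey must rate on all three to rank highly. Benefit ranges are then discounted by the confidence factor. `Journey` and `pick_for_quarter` are hypothetical names in this minimal Python illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Journey:
    name: str
    frequency: int       # 1-5, from traffic and demand
    pain: int            # 1-5, from VoC themes and complaint rates
    value: int           # 1-5, link to churn, revenue or cost
    benefit_low: float   # conservative annual benefit
    benefit_high: float  # optimistic annual benefit
    confidence: float    # 0-1, the team's confidence in the estimate

    def priority(self) -> int:
        # Multiplying (not adding) penalises a journey that is weak on any one axis.
        return self.frequency * self.pain * self.value

    def risked_benefit(self) -> Tuple[float, float]:
        """Benefit range discounted by the confidence factor."""
        return (self.benefit_low * self.confidence, self.benefit_high * self.confidence)


def pick_for_quarter(journeys: List[Journey], k: int = 3) -> List[Journey]:
    """Return the k highest-priority journeys; the text suggests two to four."""
    if not 2 <= k <= 4:
        raise ValueError("select two to four journeys per quarter")
    return sorted(journeys, key=lambda j: j.priority(), reverse=True)[:k]
```

An additive score is an equally defensible choice; what matters for avoiding politics is that the rule is written down before anyone sees the results.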

What are the most common maturity gaps?

No value linkage. Teams measure satisfaction but cannot connect changes to revenue or cost. Fix by adopting a value tree and reporting FCR, repeats, and cost per resolved contact.¹ ³

Design without delivery muscle. Journey maps exist without owners or backlogs. Fix by assigning a journey owner and a cross-functional squad with a weekly release cadence.⁷

Stale or verbose knowledge. Agents cannot find trusted steps, so handle-time variance and repeats rise. Fix with KCS: short, searchable, outcome-first content with ownership and a 90-day touch rule.⁹
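The 90-day touch rule is easy to automate as a weekly report. A minimal Python sketch, assuming each article records an owner and a last-reviewed date; the field names are hypothetical, and the 90-day window is the rule stated above rather than a value taken from the KCS Practices Guide.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional

TOUCH_WINDOW = timedelta(days=90)


@dataclass(frozen=True)
class Article:
    article_id: str
    owner: Optional[str]
    last_reviewed: date


def needs_attention(articles: List[Article], today: date) -> List[str]:
    """Ids of articles with no owner or no review in the last 90 days."""
    return [
        a.article_id
        for a in articles
        if not a.owner or today - a.last_reviewed > TOUCH_WINDOW
    ]
```

Ownerless articles are flagged even when recently edited, because without an owner nobody is accountable for the next review.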

Privacy last. Consent and purpose are not instrumented, which stalls automation. Fix by aligning to the Australian Privacy Principles and logging consent at collection and at use.¹²

Vanity metrics. Dashboards celebrate entrances or clicks. Fix with HEART and lagging outcomes that the board recognises.⁶

What actions move maturity in 90 days?

Days 1–30: Baseline and decide.

Select two journeys. Establish baselines for completion, FCR, repeats, and cost per resolved contact. Score the six domains. Publish a one-pager with target deltas and owners.¹ ³

Days 31–60: Fix clarity and ownership.

Rewrite the top ten knowledge items for the chosen journeys to be task-first and scannable. Assign a journey owner, create a backlog, and stand up a weekly change cadence. NN/g shows that front-loaded, scannable content improves task success.⁸ ⁹

Days 61–90: Orchestrate and prove.

Add event-triggered status messages with a hold-until step so that nudges stop once the customer completes the task. Ship two small policy or system fixes from the blueprint. Report lead movement in time to first useful step, then lagging movement in completion, FCR, repeats, and cost per resolved contact.¹⁰ ³
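Before switching on a live journey, the hold-until behaviour can be simulated offline to see which customers would be nudged. A minimal Python sketch, assuming hypothetical event names and a 48-hour hold; it models the pattern described here and is not the Twilio Segment Journeys API.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Event:
    customer_id: str
    name: str
    at: datetime


def plan_messages(
    events: List[Event],
    start: str = "claim_started",      # hypothetical event names
    done: str = "claim_completed",
    hold: timedelta = timedelta(hours=48),
) -> List[Tuple[str, str, datetime]]:
    """Hold each started journey until `done` arrives or `hold` expires.

    Completed inside the window: send a completion status and never a nudge.
    Not completed: send one nudge when the hold window ends.
    """
    started: Dict[str, datetime] = {}
    completions: Dict[str, List[datetime]] = defaultdict(list)
    for e in sorted(events, key=lambda ev: ev.at):
        if e.name == start:
            started.setdefault(e.customer_id, e.at)  # keep the first start only
        elif e.name == done:
            completions[e.customer_id].append(e.at)

    plan = []
    for cid, t0 in started.items():
        in_window = [t for t in completions[cid] if t0 <= t <= t0 + hold]
        if in_window:
            plan.append((cid, "status: completed", min(in_window)))
        else:
            plan.append((cid, "nudge: resume", t0 + hold))
    return plan
```

Comparing the simulated plan with last month's actual sends gives a quick estimate of how many post-completion nudges, and the "just checking" contacts they trigger, the change would remove.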

How should you govern CX so maturity sticks?

Install three lightweight rituals. A weekly calibration session aligns quality judgments and keeps knowledge accurate, consistent with ISO 18295 expectations.¹¹ A monthly journey review inspects outcomes, changes shipped, and next bets. A quarterly maturity check, rescored by domain, keeps investment pointed at the binding constraint. Governance is not bureaucracy; it is a rhythm that protects accuracy, privacy, and speed so progress compounds.
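Both the weekly calibration and the quarterly rescore depend on raters converging on a score. A minimal Python sketch of one approach, assuming each rater scores the domains independently before the session; the one-point tolerance and the use of the lower median are illustrative choices, not taken from any standard cited here.

```python
from statistics import median_low
from typing import Dict, List, Tuple


def calibrate(
    ratings: Dict[str, Dict[str, int]], max_spread: int = 1
) -> Tuple[Dict[str, int], List[str]]:
    """ratings maps each rater to {domain: score on the 0-4 scale}.

    Domains where raters differ by more than `max_spread` go back for discussion;
    the rest take the lower median, which leans against optimism bias.
    """
    domains = sorted(set().union(*(r.keys() for r in ratings.values())))
    agreed: Dict[str, int] = {}
    to_discuss: List[str] = []
    for d in domains:
        scores = [r[d] for r in ratings.values() if d in r]
        if max(scores) - min(scores) > max_spread:
            to_discuss.append(d)
        else:
            agreed[d] = median_low(scores)
    return agreed, to_discuss
```

`statistics.median_low` always returns one of the submitted scores, so the agreed value stays on the 0–4 scale instead of landing on a half point.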

How do AI and automation affect maturity?

AI accelerates mature practices and exposes weak ones. Retrieval-augmented assistants help agents and customers reach the first useful step faster by drafting answers grounded in approved sources with citations. This reduces hallucination risk and creates auditability.¹³ Event-driven orchestration reduces avoidable demand by messaging only on real state changes.¹⁰ Treat these as amplifiers to a clear strategy and robust knowledge. Measure their impact with HEART signals and lagging outcomes so investment decisions stay grounded.³ ⁶
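The grounding half of a retrieval-augmented assistant can be sketched without any model. This minimal Python sketch, assuming a small list of approved knowledge passages, ranks them by word overlap (a crude stand-in for the dense retriever used by Lewis et al.) and returns cited context, or `None` to signal escalation to a person. The generation step and any model API are deliberately left out, and `Passage`, `retrieve`, and `grounded_context` are hypothetical names.

```python
import re
from dataclasses import dataclass
from typing import List, Optional, Set


@dataclass(frozen=True)
class Passage:
    source_id: str  # id of an approved knowledge article (hypothetical)
    text: str


def tokens(s: str) -> Set[str]:
    """Lower-case word set; a deliberately crude stand-in for embeddings."""
    return set(re.findall(r"[a-z0-9]+", s.lower()))


def retrieve(query: str, passages: List[Passage], k: int = 2, min_overlap: int = 2) -> List[Passage]:
    """Return up to k approved passages sharing at least min_overlap words with the query."""
    q = tokens(query)
    scored = [(len(q & tokens(p.text)), p) for p in passages]
    scored = [(n, p) for n, p in scored if n >= min_overlap]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in scored[:k]]


def grounded_context(query: str, passages: List[Passage]) -> Optional[str]:
    """Cited context for the drafting step, or None to escalate to a person."""
    hits = retrieve(query, passages)
    if not hits:
        return None
    return "\n".join(f"[{p.source_id}] {p.text}" for p in hits)
```

Returning `None` rather than an unsupported draft is the design point: when no approved source matches, the assistant should hand over instead of improvising, which is what keeps the output auditable.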

FAQ

What is a CX maturity assessment in one sentence?

It is a structured evaluation across strategy, journeys, insight, delivery, governance, and measurement that identifies gaps and actions tied to outcomes like FCR, repeats, and cost per resolved contact.¹ ³

Which metrics best prove CX maturity to the board?

Show First Contact Resolution, repeat-within-seven-days, completion rate, and cost per resolved contact, paired with a HEART map so each metric has a decision owner and target.³ ⁶

How many maturity levels should we use?

Five levels (0–4) are enough: Absent, Ad hoc, Emerging, Repeatable, Reliable. A short scale reduces debate and pushes the team to name actions rather than argue about labels.⁴ ⁵

How do we keep the assessment from becoming shelfware?

Publish one strength, one gap, and one action per domain. Assign owners. Review weekly in the journey forum and quarterly across domains. Ship small changes and report outcome deltas.

Where should we start if we can do only one thing this quarter?

Pick one high-volume journey, assign an owner, rewrite the top knowledge tasks to be short and scannable, and add event-triggered status. Track FCR and repeats to prove value.³ ⁸ ¹⁰

How do privacy obligations affect maturity in Australia?

Instrument informed, specific, current, and voluntary consent and log purpose at collection and use. These APP controls unlock responsible automation and reduce approval friction.¹²
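Logging consent at collection and checking it again at use can be sketched as a small ledger. A minimal Python sketch, assuming consent is recorded per customer against named purposes; the class and purpose names are hypothetical, and a record like this captures only whether consent is specific and current, not whether it was informed or voluntary, which depends on how it was sought.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Dict, List, Optional, Set, Tuple


@dataclass
class ConsentRecord:
    purposes: Set[str]                   # specific purposes the customer agreed to
    collected_at: datetime
    withdrawn_at: Optional[datetime] = None


@dataclass
class ConsentLedger:
    records: Dict[str, ConsentRecord] = field(default_factory=dict)
    # Every use check is logged as (customer, purpose, time, allowed).
    use_log: List[Tuple[str, str, datetime, bool]] = field(default_factory=list)

    def collect(self, customer_id: str, purposes: Set[str], at: datetime) -> None:
        """Record consent and its purposes at the point of collection."""
        self.records[customer_id] = ConsentRecord(set(purposes), at)

    def withdraw(self, customer_id: str, at: datetime) -> None:
        rec = self.records.get(customer_id)
        if rec is not None:
            rec.withdrawn_at = at

    def check_use(self, customer_id: str, purpose: str, at: datetime) -> bool:
        """Allow a use only for a consented purpose while consent is current."""
        rec = self.records.get(customer_id)
        allowed = (
            rec is not None
            and purpose in rec.purposes
            and rec.collected_at <= at
            and (rec.withdrawn_at is None or at < rec.withdrawn_at)
        )
        self.use_log.append((customer_id, purpose, at, allowed))
        return allowed
```

Logging refused checks as well as allowed ones gives privacy reviewers an audit trail of what automation attempted, which tends to shorten approval conversations.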

Does AI raise our maturity score automatically?

No. AI helps only when grounded in approved knowledge, measured against completion and FCR, and governed for privacy and safety. Otherwise it adds risk and rework.¹³

Sources

- Linking the Customer Experience to Value — Joel Maynes, Alex Rawson, Ewan Duncan, Kevin Neher, 2018, McKinsey & Company. https://www.mckinsey.com/capabilities/growth-marketing-and-sales/our-insights/linking-the-customer-experience-to-value
- The Value of Customer Experience, Quantified — Peter Kriss, 2014, Harvard Business Review. https://hbr.org/2014/08/the-value-of-customer-experience-quantified
- First Contact Resolution: Definition and Approach — ICMI, 2008, ICMI Resource. https://www.icmi.com/files/ICMI/members/ccmr/ccmr2008/ccmr03/SI00026.pdf
- The Customer Experience Maturity Model — Forrester Research, 2020, Forrester (overview/Playbook). https://www.forrester.com/report/the-customer-experience-maturity-playbook/RES137426
- Gartner Customer Experience Maturity Model (Overview) — Gartner, 2023, Research note/Guide. https://www.gartner.com/en/insights/customer-experience
- Measuring the User Experience on a Large Scale: User-Centered Metrics for Web Applications (the HEART framework) — Kerry Rodden, Hilary Hutchinson, Xin Fu, 2010, CHI 2010 / Google Research. https://research.google/pubs/pub36299/
- Service Blueprinting: A Practical Technique for Service Innovation — Mary Jo Bitner, Amy L. Ostrom, Felicia N. Morgan, 2008, California Management Review. https://cmr.berkeley.edu/2008/12/service-blueprinting/
- How Users Read on the Web — Jakob Nielsen, 2008 update, Nielsen Norman Group. https://www.nngroup.com/articles/how-users-read-on-the-web/
- KCS Practices Guide — Consortium for Service Innovation, 2020, CSI. https://www.serviceinnovation.org/kcs-resources
- Event-Triggered Journeys: Hold-Until and Experiments — Twilio Segment Docs, 2024, Twilio. https://www.twilio.com/docs/segment/engage/journeys/v2/event-triggered-journeys-steps
- ISO 18295 — Customer Contact Centres (Parts 1 & 2) — International Organization for Standardization, 2017, ISO. https://www.iso.org/standard/63167.html
- Australian Privacy Principles — Office of the Australian Information Commissioner, 2023, OAIC. https://www.oaic.gov.au/privacy/australian-privacy-principles
- Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks — Patrick Lewis, Ethan Perez, Aleksandra Piktus, et al., 2020, NeurIPS. https://proceedings.neurips.cc/paper_files/paper/2020/hash/6b493230205f780e1bc26945df7481e5-Abstract.html
